Today, computer vision applications make use of 4G technology and high-definition (HD) video calling on mobile phones. People frequently use 4G video calls to communicate with friends and family. The technology can convey fine details of the real world, such as the background, facial features, and behavior. We developed a video processing system that lets users alter the shape and look of facial features such as the eyebrows, eyes, nose, lips, jaw, and chin. Our work improves users' facial appearance during live 4G video calls: the user sees the modified facial feature in real time, as if in a virtual mirror, and can then use it.

## I. INTRODUCTION

Human faces are detailed sources of information such as identity, age, gender, intention, and reaction (expression response). People also care a great deal about the attractiveness of their faces [8]. Consequently, many cosmetic surgery procedures exist to improve facial attractiveness by modifying facial appearance, color, and shape. However, cosmetic surgery is expensive and painful, so only wealthy people can afford it [7] [9] [10]. Extensive research is being carried out on improving facial aesthetics, but facial aesthetics is subjective. We therefore need a solution that can generate or predict facial appearance before surgery [13] [14]. Computer vision research addresses the improvement of facial aesthetics in the digital domain, that is, the virtual improvement of facial aesthetics in images and video. We propose a real-time face feature reshaping technique for the eyes, eyebrows, jaw, chin, nose, and lips, so that users can see their manipulated appearance in real time.

## II. PREVIOUS WORK

Face detection is the most important phase in computer vision problems involving faces. Paul Viola and Michael J. Jones [30] proposed a face detection framework. We categorize face morphing methods into two types: model-based and non-model-based. Model-based methods include various face shape models such as the Active Appearance Model [24] [29], the Active Shape Model [24], the Constrained Local Model [21], the 3D morphable face model [2-3][8][18], and many more. The Basel face model [19] and the Surrey face model [19] are the most widely used 3D face models. A face shape model is constructed from the face, morphed, and then fitted back onto the original face. Model construction and fitting are the more difficult tasks and require a lot of computation. Model-based methods provide good results for real-time processing and are therefore widely used in image and video morphing. D. Kasat et al. [9] proposed a system that morphs face features in real time using a Kinect sensor to stream the input video. The system was able to morph face features such as the jaw, chin, nose, mouth, and eyes in real time. An Active Appearance Model-based method and the moving least squares method [35] are used for image deformation. The system's performance degrades under some illumination conditions, and it introduces delay. Yuan Lin et al. [3] proposed a face-swapping method that does not require the source and target images to share the same pose and appearance. A 3D model-based approach is used, which allows the face to be rendered at any pose angle. The 3D model is constructed from the user's uploaded image, and the swapping method is used to replace the face of the character. The result is accurate when the target has a non-frontal face, but the method cannot handle large illumination differences between the source and target images.

A non-model-based method such as face morphing using a critical point filter [6] finds the critical values of the face and modifies them using a critical point filter (CPF). The filter can operate on image properties such as depth value, color, and intensity. The method was developed for two frontal face images. Only a few landmark points are used, which limits the processing time that a larger number of landmarks and a large image size would require.

## III. SYSTEM OVERVIEW

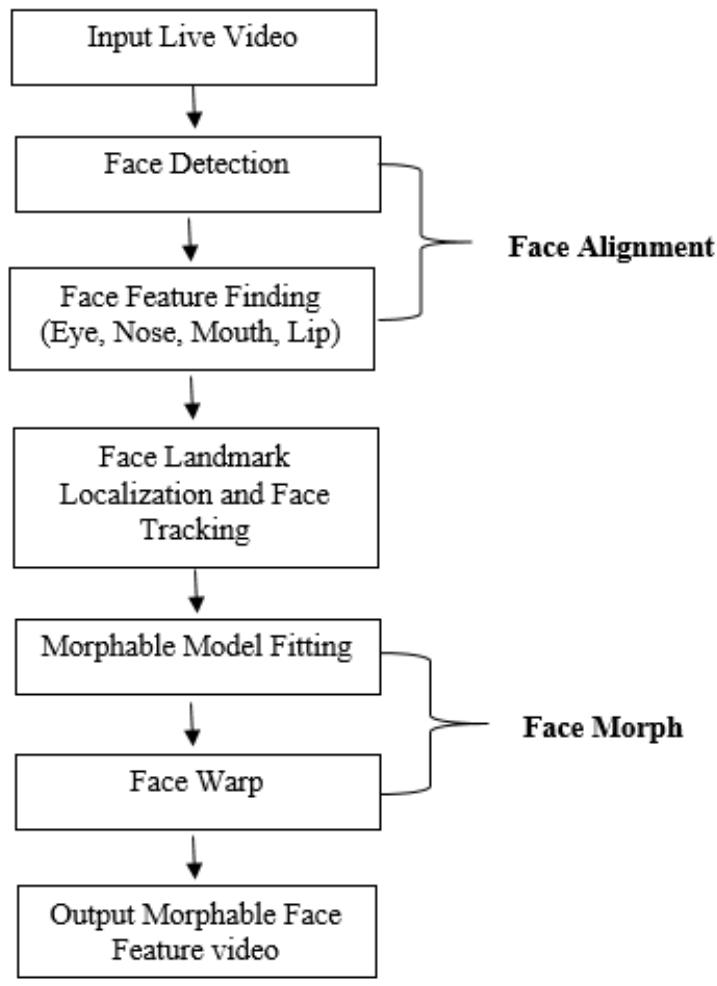

This system is implemented using OpenCV with Python 3.7.0. The main objective of this work is to reshape individual facial features such as the eyebrows, eyes, nose, lips, chin, and jaw in real time with minimum delay. The image deformation algorithm is therefore applied only to the face area, within a window of size $200 \times 200$. The window size is fixed to reduce the response delay. The system flowchart is illustrated in Figure 1. The system begins by capturing real-time video with the device camera. As input, we take the RGB video stream captured by the front camera of a Sony laptop at a width of 900 pixels and convert each RGB video frame into a grayscale image for the image deformation operation. We assume that there is only one person (referred to as the actor) in front of the device camera, and we take as input a set of face shape modification parameters from the user. We then detect the face using a face detection algorithm and identify the facial features to localize certain key feature points. Object detection using the Histogram of Oriented Gradients with linear SVM method [36] is used in detecting the facial landmarks. First, OpenCV is used to detect the face in the given input video frame; the image is then pre-processed so that every frame has the same width of 900 pixels.

Fig. 1: System Diagram of Proposed System
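The pre-processing arithmetic described above can be sketched in plain Python. This is a minimal illustration rather than the implementation used in our system: the helper names `scaled_size`, `rgb_to_gray`, and `face_window` are hypothetical, the grayscale weights are the ITU-R BT.601 luma weights that OpenCV's RGB-to-gray conversion uses, and keeping the $200 \times 200$ window inside the frame by shifting it is an assumption.

```python
def scaled_size(width, height, target_width=900):
    """Return (w, h) after scaling a frame to target_width, keeping the aspect ratio."""
    scale = target_width / width
    return target_width, max(1, round(height * scale))


def rgb_to_gray(r, g, b):
    """Gray level of one RGB pixel using the ITU-R BT.601 luma weights."""
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def face_window(center_x, center_y, frame_w, frame_h, size=200):
    """Return (left, top, right, bottom) of a size x size window around the
    face centre. Assumption: the window is shifted so it stays in the frame."""
    half = size // 2
    left = min(max(center_x - half, 0), max(frame_w - size, 0))
    top = min(max(center_y - half, 0), max(frame_h - size, 0))
    return left, top, min(left + size, frame_w), min(top + size, frame_h)
```

For example, a 1280 x 720 frame is scaled to 900 x 506, and a face centred at (450, 300) in that frame yields the deformation window (350, 200, 550, 400).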

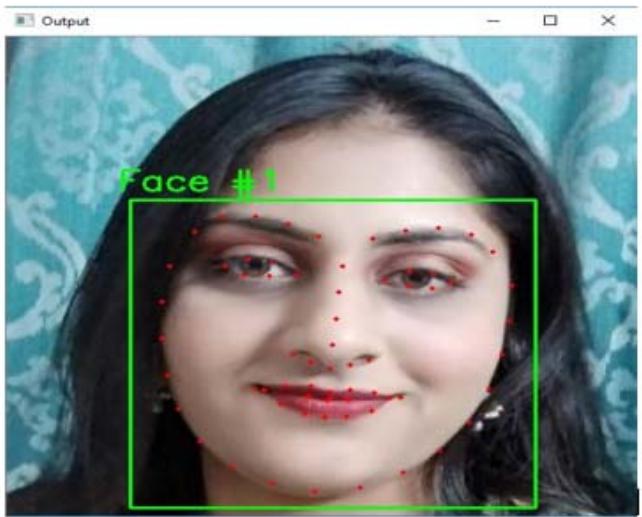

Therefore, we convert each video frame to the same size. A higher input resolution lets us capture more detail in each video frame. In our system, we use 68 primary and secondary landmark points [33], as shown in Figure 2. The landmark points are used to find the exact position of each facial feature and to reshape it. The system extracts a set of (x, y) coordinates on the input face. These landmark points are fed into the image deformation method, which reshapes the facial features according to the parameters supplied by the user. The facial features are the lips, right eyebrow, left eyebrow, right eye, left eye, nose, jaw, and chin. Kazemi and Sullivan [33] proposed One Millisecond Face Alignment with an Ensemble of Regression Trees, a method for facial landmark detection. We use this method for accurate landmark detection on the user's face.

Fig. 2: Visualizing the 68 Facial Landmark Coordinates

We use a pre-trained facial landmark detector to estimate the locations of 68 (x, y) coordinates that map to facial structures. The indexes of the 68 coordinates are visualized in Figure 2, where the red dots map to specific facial features, including the jaw, chin, lips, nose, eyes, and eyebrows. As a result, facial landmarks are detected in real time with high accuracy. The Moving Least Squares (MLS) method [35] is used to reconstruct a surface from a set of landmark points. MLS creates deformations using affine, similarity, and rigid transformations. These deformations are realistic and give the user the impression of manipulating the facial features in real time. The method generates a new image frame by warping the pixels at the source points to the positions of the destination points. Image deformation is performed on every video frame, generating an output video in real time. The output of the system is a live, morphed RGB video stream in which the requested face shape modifications have been applied.
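The grouping of the 68 landmark indexes into facial features can be sketched as follows. The index ranges are the 0-based ranges of the widely used 68-point annotation scheme; the names `FEATURE_RANGES`, `CHIN_RANGE`, `feature_points`, and `feature_center` are hypothetical, and treating the chin as the middle of the jaw contour (indexes 6 to 10) is our assumption for illustration only.

```python
# 0-based index ranges of the common 68-point facial landmark scheme.
# "right" and "left" refer to the subject's own right and left.
FEATURE_RANGES = {
    "jaw": range(0, 17),
    "right_eyebrow": range(17, 22),
    "left_eyebrow": range(22, 27),
    "nose": range(27, 36),
    "right_eye": range(36, 42),
    "left_eye": range(42, 48),
    "lip": range(48, 68),
}

# Assumption: the chin is taken as the central part of the jaw contour.
CHIN_RANGE = range(6, 11)


def feature_points(landmarks, feature):
    """Return the (x, y) landmarks that belong to one facial feature."""
    if len(landmarks) != 68:
        raise ValueError("expected 68 landmark points")
    indexes = CHIN_RANGE if feature == "chin" else FEATURE_RANGES[feature]
    return [landmarks[i] for i in indexes]


def feature_center(landmarks, feature):
    """Return the centroid of one facial feature as (x, y)."""
    points = feature_points(landmarks, feature)
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)
```

Selecting only the points of the feature the user wants to reshape keeps the set of control points passed to the deformation step small.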

Fig. 3: Original image (left) and its deformation using the rigid MLS method (right). After deformation, the eyebrow is reshaped.
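To make the MLS warp concrete, the sketch below maps a single point under the affine variant of MLS deformation [35], which has the simplest closed form. It is an illustration only: Figure 3 uses the rigid variant, which adds a further normalization step not shown here. The function name `mls_affine_point` and the weight exponent default `alpha=1.0` are assumptions, and the control points must include at least three non-collinear points.

```python
def mls_affine_point(v, p, q, alpha=1.0):
    """Map point v under the affine MLS deformation that moves the control
    points p (source) to q (destination). Points are (x, y) tuples."""
    # Weights fall off with distance from v: w_i = 1 / |p_i - v|^(2 * alpha).
    weights = []
    for (px, py), target in zip(p, q):
        dist_sq = (px - v[0]) ** 2 + (py - v[1]) ** 2
        if dist_sq == 0.0:
            # v lies on a control point, so it maps exactly to its target.
            return (float(target[0]), float(target[1]))
        weights.append(dist_sq ** -alpha)
    total = sum(weights)

    # Weighted centroids p* and q*.
    psx = sum(w * pt[0] for w, pt in zip(weights, p)) / total
    psy = sum(w * pt[1] for w, pt in zip(weights, p)) / total
    qsx = sum(w * pt[0] for w, pt in zip(weights, q)) / total
    qsy = sum(w * pt[1] for w, pt in zip(weights, q)) / total

    # A = sum w_i * ph_i^T ph_i and B = sum w_i * ph_i^T qh_i (both 2 x 2),
    # where ph_i = p_i - p* and qh_i = q_i - q*.
    a11 = a12 = a22 = 0.0
    b11 = b12 = b21 = b22 = 0.0
    for w, pt, qt in zip(weights, p, q):
        phx, phy = pt[0] - psx, pt[1] - psy
        qhx, qhy = qt[0] - qsx, qt[1] - qsy
        a11 += w * phx * phx
        a12 += w * phx * phy
        a22 += w * phy * phy
        b11 += w * phx * qhx
        b12 += w * phx * qhy
        b21 += w * phy * qhx
        b22 += w * phy * qhy

    # M = A^-1 B; A is symmetric, so its inverse is written out directly.
    det = a11 * a22 - a12 * a12
    i11, i12, i22 = a22 / det, -a12 / det, a11 / det
    m11 = i11 * b11 + i12 * b21
    m12 = i11 * b12 + i12 * b22
    m21 = i12 * b11 + i22 * b21
    m22 = i12 * b12 + i22 * b22

    # f(v) = (v - p*) M + q*, using the row-vector convention.
    dx, dy = v[0] - psx, v[1] - psy
    return (dx * m11 + dy * m21 + qsx, dx * m12 + dy * m22 + qsy)
```

In a full warp, the source points would be the detected landmarks of the selected feature and the destination points their positions after applying the user's reshaping parameters; the function would then be evaluated for each pixel (or each node of a coarse grid) inside the face window.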

Fig. 4: (a) Original image, (b) left eyebrow reshaped, (c) right eye reshaped, (d) nose reshaped, (e) lips reshaped, (f) chin reshaped, (g) jaw reshaped

## IV. RESULT ANALYSIS

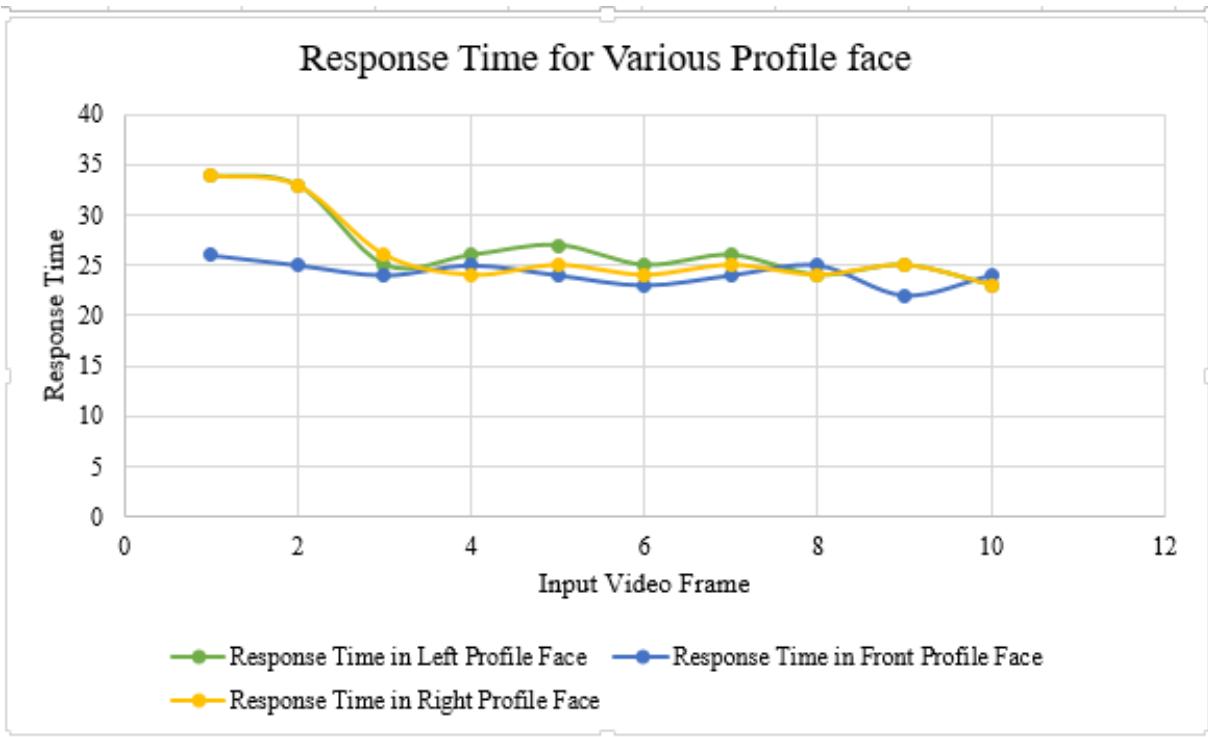

The result of the proposed work is shown in Figure 4, in which features such as the eyebrow, eye, nose, lips, chin, and jaw are reshaped according to the user's requirements. The reshaped features can serve various applications, such as cosmetic surgery planning, in which users can visualize their face before surgery. The user can change various parameters in real time and see their face with the modified feature. The system can also be used for face beautification, for having fun with friends, for sharing on social media, and for capturing memorable moments. A key advantage is that editing happens in real time without any external device. The system was evaluated on left-profile, frontal, and right-profile faces. We also analyzed the time required to produce the output video. The results show that left and right profile faces require more time to produce the output video. Table 1 shows the response time, in milliseconds, required to generate each of ten output video frames. Figure 5 shows the time analysis graph for the various profile faces.
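One way to collect per-frame response times of this kind is sketched below. It is a hypothetical measurement harness, not the one used for our tables: `response_times_ms` and the `process_frame` callback are illustrative names, and `process_frame` stands in for the whole detect, locate, and deform step applied to one frame.

```python
import time


def response_times_ms(process_frame, frames):
    """Run process_frame on each frame and return the elapsed time per frame
    in milliseconds."""
    times = []
    for frame in frames:
        start = time.perf_counter()
        process_frame(frame)
        times.append((time.perf_counter() - start) * 1000.0)
    return times
```

`time.perf_counter` is used because it is a monotonic, high-resolution clock suited to measuring short intervals.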

## V. COMPARISON WITH PREVIOUS WORK

D. Kasat et al. [9] achieved similar effects (as shown in Figure 8): face features are reshaped using a Kinect sensor for the input video.

Table 1: Response Time for Output Video Frame in Various Profile Face

<table><tr><td>Video Frame</td><td>Response Time in Left Profile Face</td><td>Response Time in Front Profile Face</td><td>Response Time in Right Profile Face</td></tr><tr><td>1</td><td>34</td><td>26</td><td>34</td></tr><tr><td>2</td><td>33</td><td>25</td><td>33</td></tr><tr><td>3</td><td>25</td><td>24</td><td>26</td></tr><tr><td>4</td><td>26</td><td>25</td><td>24</td></tr><tr><td>5</td><td>27</td><td>24</td><td>25</td></tr><tr><td>6</td><td>25</td><td>23</td><td>24</td></tr><tr><td>7</td><td>26</td><td>24</td><td>25</td></tr><tr><td>8</td><td>24</td><td>25</td><td>24</td></tr><tr><td>9</td><td>25</td><td>22</td><td>25</td></tr><tr><td>10</td><td>23</td><td>24</td><td>23</td></tr></table>
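The per-profile averages implied by Table 1 can be computed as in the following sketch. The values are copied from the table and are taken to be in milliseconds, as stated in the text; the names `TABLE_1_MS`, `AVERAGES_MS`, and `frames_per_second` are hypothetical.

```python
from statistics import mean

# Response times from Table 1, in milliseconds, for frames 1 to 10.
TABLE_1_MS = {
    "left": [34, 33, 25, 26, 27, 25, 26, 24, 25, 23],
    "front": [26, 25, 24, 25, 24, 23, 24, 25, 22, 24],
    "right": [34, 33, 26, 24, 25, 24, 25, 24, 25, 23],
}

# Average response time per face profile.
AVERAGES_MS = {profile: mean(times) for profile, times in TABLE_1_MS.items()}


def frames_per_second(average_ms):
    """Convert an average per-frame response time into a frame rate."""
    return 1000.0 / average_ms
```

With the Table 1 values this gives about 26.8 ms for the left profile, 24.2 ms for the frontal face, and 26.3 ms for the right profile, which is consistent with the observation that profile faces take longer.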

Fig. 5: Response Time for Various Facial Positions

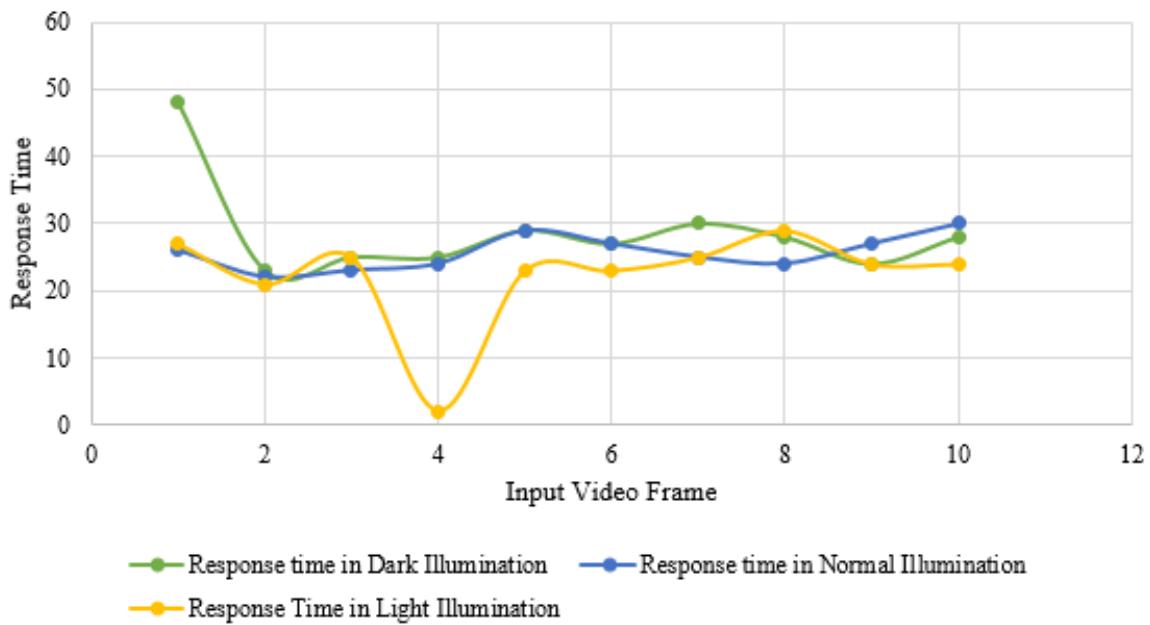

Table 2: Average Response Time for Output Video Frame in Various Illumination Conditions

<table><tr><td>Video Frame</td><td>Response Time in Dark Illumination</td><td>Response Time in Normal Illumination</td><td>Response Time in Light Illumination</td></tr><tr><td>1</td><td>48</td><td>26</td><td>27</td></tr><tr><td>2</td><td>23</td><td>22</td><td>21</td></tr><tr><td>3</td><td>25</td><td>23</td><td>25</td></tr><tr><td>4</td><td>25</td><td>24</td><td>24</td></tr><tr><td>5</td><td>29</td><td>29</td><td>23</td></tr><tr><td>6</td><td>27</td><td>27</td><td>23</td></tr><tr><td>7</td><td>30</td><td>25</td><td>25</td></tr><tr><td>8</td><td>28</td><td>24</td><td>29</td></tr><tr><td>9</td><td>24</td><td>27</td><td>24</td></tr><tr><td>10</td><td>28</td><td>30</td><td>24</td></tr></table>
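Because the first dark-illumination frame in Table 2 (48) is much larger than the other readings, a median is a useful companion to the mean when summarizing these columns. The sketch below computes both, plus the mean without the first frame. The names `TABLE_2_MS` and `summarize` are hypothetical, the unit is assumed to be milliseconds as in Table 1, and the table itself gives no explanation for the first-frame value.

```python
from statistics import mean, median

# Response times from Table 2 for frames 1 to 10 (assumed milliseconds).
TABLE_2_MS = {
    "dark": [48, 23, 25, 25, 29, 27, 30, 28, 24, 28],
    "normal": [26, 22, 23, 24, 29, 27, 25, 24, 27, 30],
    "light": [27, 21, 25, 24, 23, 23, 25, 29, 24, 24],
}


def summarize(times):
    """Return the mean, the median, and the mean without the first frame."""
    return {
        "mean": mean(times),
        "median": median(times),
        "mean_without_first": mean(times[1:]),
    }


SUMMARY = {name: summarize(times) for name, times in TABLE_2_MS.items()}
```

For the dark column the mean is 28.7 while the median is 27.5, and dropping the first frame lowers the mean to about 26.6; the normal and light columns are far less sensitive to the first frame.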

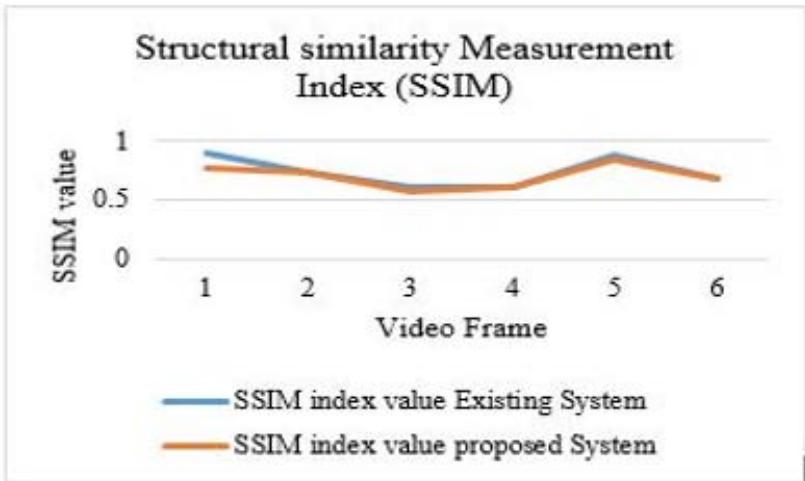

Fig. 6: Time Graph of Various Illumination Conditions

In our work (as shown in Figure 4), the system allows users to reshape individual face parameters in real time with little delay and without any external device. The existing system requires a Microsoft Kinect sensor to be configured and connected to the device; it is therefore less portable than the proposed system. The quality of the output images relative to the input images is compared using the Structural Similarity Measurement Index (SSIM).
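For reference, the SSIM comparison can be illustrated with a single-window computation over two grayscale images given as flat lists of pixel values. This is a simplified sketch: the standard SSIM averages the same expression over local windows, which is not done here, and the function name `global_ssim` is hypothetical. The constants $K_1 = 0.01$, $K_2 = 0.03$ and the dynamic range of 255 are the usual defaults for 8-bit images.

```python
def global_ssim(x, y, dynamic_range=255.0, k1=0.01, k2=0.03):
    """SSIM of two equal-length pixel lists, computed over a single window."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n

    # Small constants that stabilize the division.
    c1 = (k1 * dynamic_range) ** 2
    c2 = (k2 * dynamic_range) ** 2

    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator
```

Identical images give a value of 1, and the value decreases as the deformed frame departs from the original in mean intensity, contrast, or structure.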

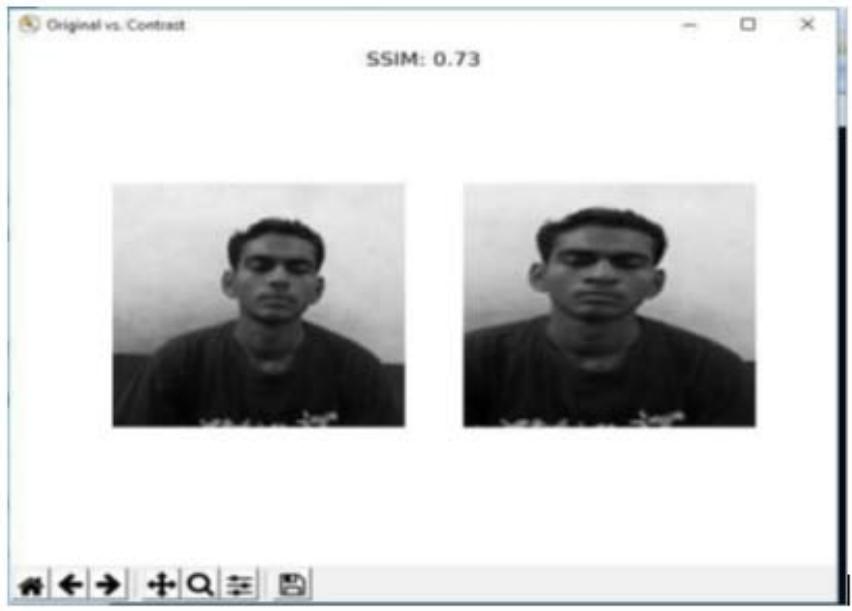

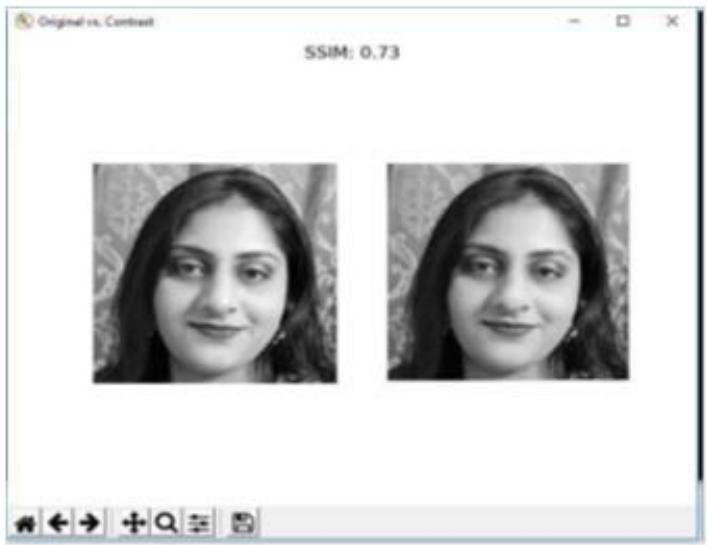

The SSIM values of the existing and proposed systems are shown in Table 3. The existing system provides good-quality results because it uses a Microsoft Kinect sensor for the input video, which provides a 3D view and the depth of the captured image. In our system, we use the device's front camera, which is capable of producing video of sufficient quality. The graphical representation of the SSIM values is shown in Figure 7. The comparison of the original and deformed faces, with their SSIM values, is shown in Figures 8 and 9.

Table 3: SSIM Value of Existing and Proposed System

<table><tr><td>SSIM value</td><td>Existing System</td><td>Proposed System</td></tr><tr><td>Result 1</td><td>0.73</td><td>0.73</td></tr><tr><td>Result 2</td><td>0.89</td><td>0.77</td></tr><tr><td>Result 3</td><td>0.80</td><td>0.77</td></tr><tr><td>Result 4</td><td>0.74</td><td>0.74</td></tr></table>

Fig. 7: Structural Similarity Measurement Index Analysis

Fig. 8: Existing System (a) Original Image (b) Morphed Image with SSIM=0.73

Fig. 9: Proposed System (a) Original Image (b) Morphed Image with SSIM=0.73

## VI. CONCLUSION

We introduced real-time face feature reshaping for video sequences, which allows specific features of the face such as the eyebrow, eye, nose, lips, jaw, and chin to be reshaped, something that existing morphing techniques cannot achieve. We proposed, for the first time, a real-time video morphing system that does not use any external device. Our system is portable and can be used in entertainment applications, games, film production, medicine, and beautification. The system allows face features to be reshaped in real time with full flexibility and good accuracy. The comparison with the existing method using the SSIM index assesses the quality of the generated output. The analysis of the time required to produce the output for various face profiles and illumination conditions also supports the proposed system. In the future, we will build an expert system for medical applications that visualizes modified faces before plastic surgery.

## REFERENCES

Xuanyi Dong, Yi Yang (2019). Teacher Supervises Students How to Learn From Partially Labeled Images for Facial Landmark Detection.

J Du, D Song, Y Tang, R Tong, M Tang (2018). Edutainment 2017 a visual and semantic representation of 3D face model for reshaping face in images.

Y Lin, S Wang, Q Lin, F Tang (2012). Face swapping under large pose variations: A 3D model based approach.

F Yang, E Shechtman, J Wang, L Bourdev, D Metaxas (2012). Face morphing using 3D-aware appearance optimization.

J Chen, Z Long, B Liu, X Li, Z Ye (2017). Image morphing using deformation and patch-based synthesis.

Jennisa Areeyapinan, Pizzanu Kanongchaiyos (2012). Face morphing using critical point filters.

A Feng, D Casas, A Shapiro (2015). Avatar reshaping and automatic rigging using a deformable model.

Stefano Melacci, Lorenzo Sarti, Marco Maggini, Marco Gori (2009). A template-based approach to automatic face enhancement.

Paul Koppen, Zhen-Hua Feng, Josef Kittler, Muhammad Awais, William Christmas, Xiao-Jun Wu, He-Feng Yin (2018). Gaussian mixture 3D morphable face model.

Koki Nagano, Jaewoo Seo, Jun Xing, Lingyu Wei, Zimo Li, Shunsuke Saito, Aviral Agarwal, Jens Fursund, Hao Li (2018). paGAN.

Luojun Lin, Lingyu Liang, Lianwen Jin (2018). R<sup>2</sup>-ResNeXt: A ResNeXt-Based Regression Model with Relative Ranking for Facial Beauty Prediction.

D Cristinacce, T Cootes (2008). Automatic feature localisation with constrained local models.

S Karungaru, M Fukumi, N Akamatsu (2007). Morphing face images using automatically specified features.

T Cootes, G Edwards, C Taylor (2001). Active appearance models.

Kwok-Wai Wan, Kin-Man Lam, Kit-Chong Ng (2005). An accurate active shape model for facial feature extraction.

Feng Min, Nong Sang, Zhefu Wang (2010). Automatic Face Replacement in Video Based on 2D Morphable Model.

J Boda, D Pandya (2018). A Survey on Image Matting Techniques.

Jagruti Boda, Dr. Kasat (2018). Real-Time Face Feature Reshaping without Cosmetic Surgery.

Steven Seitz, Charles Dyer (1996). View morphing.

Jean Kossaifi, Georgios Tzimiropoulos, Maja Pantic (2017). Fast and Exact Newton and Bidirectional Fitting of Active Appearance Models.

M Jones, P Viola (2003). Face Detection System Based on Viola - Jones Algorithm.

B Wu, S Lao (2004). Fast Rotation Invariant Multi-View Face Detection Based on Real Adaboost.

Rr Sejati, Rodhiyah Mardhiyyah (2019). Deteksi Wajah Berbasis Facial Landmark Menggunakan OpenCV Dan Dlib.

Vahid Kazemi, Josephine Sullivan (2014). One millisecond face alignment with an ensemble of regression trees.

N Dalal, B Triggs (2005). Histograms of Oriented Gradients for Human Detection.

Scott Schaefer, Travis Mcphail, Joe Warren (2006). Image deformation using moving least squares.
