## I. INTRODUCTION

In recent years, a growing number of scholars in China and abroad have devoted themselves to multimodal discourse analysis (MDA), studying multimodal discourse across many language categories and topics. Kress & Van Leeuwen and O'Toole extended the concept of metafunction to images and proposed the conceptual and theoretical framework of visual grammar. On this basis, Royce, O'Halloran and Martinec analyzed and explained specific visual and auditory multimodal texts. Since the 1990s, more and more Chinese linguists have turned to multimodal discourse in different genres, including advertisements, poems, films and new media. However, few multimodal studies have examined product launches. Apple's product launches, in particular, attract intense attention from the mass media. To achieve better results, product launches are often elaborately designed and make simultaneous use of various modes and media such as language, voice, gesture and video. These modes interact with each other to vividly express the design meaning intended by the company and its designers.

Based on Systemic Functional Linguistics, this paper studies the synergy of dynamic multimodal discourse modes in Apple's product launches. Theoretically, first, examining product launches from a multimodal perspective provides a new method for research on corporate launch conferences. Second, it applies Systemic Functional Grammar to the multimodal discourse analysis of product promotion and release conferences. Practically, through the analysis of multimodal discourse in product launch events, this study offers sellers some insight into how multimodal discourse is accepted and understood by the audience, so that they can maximize the positive impact of product launch events and cater to the different tastes of the audience.

The remainder of the paper is structured as follows. Section 2 reviews research on multimodal discourse analysis and product launch conferences at home and abroad. The methodology and findings of the study are presented in Sections 3 and 4 respectively. Finally, Section 5 concludes the study.

## II. LITERATURE REVIEW

### a) Previous Studies of Multimodal Discourse Analysis

#### i. Previous Studies of Multimodal Discourse Analysis Abroad

After more than 20 years of work, a large body of research has accumulated. The mainstream perspectives on multimodal discourse analysis abroad include social semiotic multimodal discourse analysis, systemic functional multimodal discourse analysis (SF-MDA), multimodal interaction analysis, and multimodal corpus analysis. As for the social semiotic approach, Saussure held that symbols have social characteristics, and Halliday (1978) regarded language as a kind of social symbol. Social semioticians believe that the choice of symbol resources is determined by the intention of the symbol interpreter, which means that the choice of symbols determines the expression of meaning, and that each mode carries diverse meaning potential.

Systemic functional multimodal discourse analysis also derives from Halliday's Systemic Functional Grammar, which is the core framework of this analytical method. Unlike the social semiotic approach, which works top-down from context and a specific ideological orientation, SF-MDA works bottom-up. Related research covers a range of multimodal texts, for instance hypermedia (Djonov, 2007), television discourse (Bednarek and Martin, 2010), online newspapers (Knox, 2013), and language and gesture in teaching (Fei Victor Lim, 2019).

Multimodal interaction analysis developed on the basis of mediated discourse analysis and absorbs some ideas from social semiotics. It focuses on how participants use multiple symbolic modes to carry out social activities and emphasizes the prominence of identity in interaction (Norris, 2004; Norris & Jones, 2005; Scollon, 2001; Scollon & Scollon, 2003).

Multimodal corpus analysis combines corpus analysis, systemic functional linguistics and semiotics to test hypotheses about meaning generation in multimodal discourse research. Bateman (2014), a representative of this school, argues that attention to how individual cases are analyzed is very important in the early development of multimodal research theory. Currently, the most frequently used multimodal annotation tools include Anvil and ELAN. However, annotating multimodal discourse is time-consuming and laborious, so multimodal research based on large corpora remains inadequate.

#### ii. Previous Studies of Multimodal Discourse Analysis at Home

In China, multimodal discourse analysis started with Social Semiotics Analysis of Multimodal Discourse (Li, 2003), which elaborated on visual grammar in detail. Subsequent research has focused mainly on three aspects. First, in research on multimodal discourse analysis based on SFL and social semiotics, a large number of scholars have applied other relevant theories to case studies. For example, Zhang and Mu (2012) discussed the construction of a theoretical framework for multimodal functional stylistics by analyzing images and text on the basis of theoretical research in functional stylistics. Feng, Zhang and Wang (2016) applied rhetorical structure theory to the study of advertising.

Second, there are few studies on multimodal interaction analysis in China. Zhang and Wang (2016) comprehensively introduced the basic theory and method of this analysis and discussed its characteristics and deficiencies. They proposed a comprehensive framework of multimodal interaction analysis and used it to analyze teaching activities in the English classroom.

Third, multimodal corpus analysis is still uncommon in China. Wang and Zhang (2016) found that the existing research methods of SFL cannot be directly applied to the description of multimodal genre features and need further improvement. Hu and Liu (2015) analyzed the construction of multimodal corpora at home and abroad and found that current research focuses more on corpora for modal interpretation, especially with regard to effective collection, quality requirements, multi-level annotation models, non-linguistic factors (including expressions and gestures), time-axis alignment, and the reliability evaluation of annotation.

### b) Previous Studies of Product Launch Conference

#### i. Previous Studies of Product Launch Conferences Abroad

Most research on product launches focuses on how to make effective and engaging presentations. Lucas, whose The Art of Public Speaking is known as the "bible of public speaking", explained how a good speech should be prepared in terms of topic selection, understanding of the audience and collection of materials. The Art of Lecturing, written by Parham Aarabi, offers practical recommendations for effective university lectures and business presentations. In iKeynote-Representation, Rhetoric and Visual Communication by Steve Jobs in His Keynote at Macworld 2007, Kast (2008) elaborated on how Steve Jobs drew on various resources and modes through rhetorical devices to deliver persuasive messages. Product launches are playing an increasingly important role in today's business environment.

#### ii. Previous Studies of Product Launch Conferences at Home

At the same time, there has also been some domestic research on product presentation. Cai and Zhou (2007) expounded the importance of speeches in international business, classified them according to the purpose of the product launch, and gave relevant suggestions for effective presentation. Liu (2013) further investigated the successful speech strategies of Steve Jobs at Apple: after briefly introducing the classification and structure of business speeches, the author discussed the features of Steve Jobs's language and the skills he used before and during a demonstration. Gao and Zhang (2015) pointed out the significance of mobile phone product launches and put forward three suggestions for holding a product launch conference. The authors found that product launches can help companies become competitive, capture the taste of consumers, and probe the potential market among rivals.

## III. METHODOLOGY

### a) Theoretical Framework

Systemic Functional Linguistics (SFL) is one of the most influential linguistic schools in the world. It was first proposed by the British linguist J.R. Firth and then further developed by M.A.K. Halliday (1985). In this study, the content level, which comprises the meaning level and the form level, will be applied. At the level of discourse meaning, the paper primarily explores the interpersonal meaning in the product launch conference; at the form level, it tries to identify all the forms present in the conference as well as their relationships.

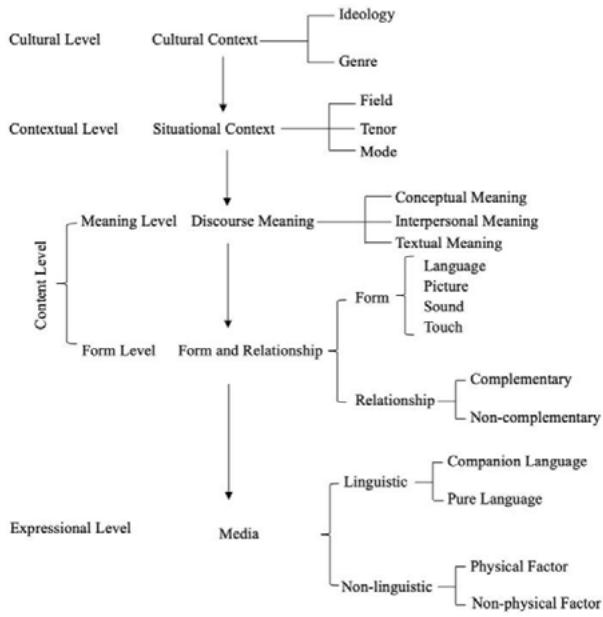

Figure 3.1: Synthetic Theoretical Framework of Multimodal Discourse Analysis (Zhang, 2009a: 28) In 2009, Zhang Delu proposed the intermodal relationship in his article An Exploration of the Synthetic Theoretical Framework of Multimodal Discourse Analysis which expounds the concepts and detailed examples of intermodal relationship. The multimodal discourse mode

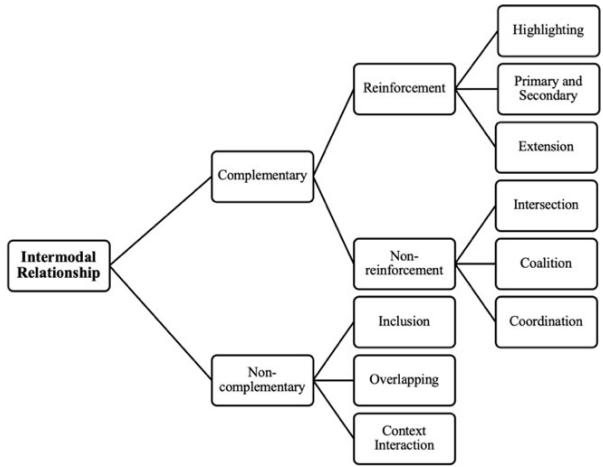

is that when one modal discourse cannot fully express its meaning, its meaning needs to be added by another one. The relationship between such modes is called "complementary relationship", while the others are called "non-complementary relationship".

Figure 3.2: Zhang Delu's Inter-Modal Modes and Relationship (Zhang Delu, 2009)

### b) Research Question

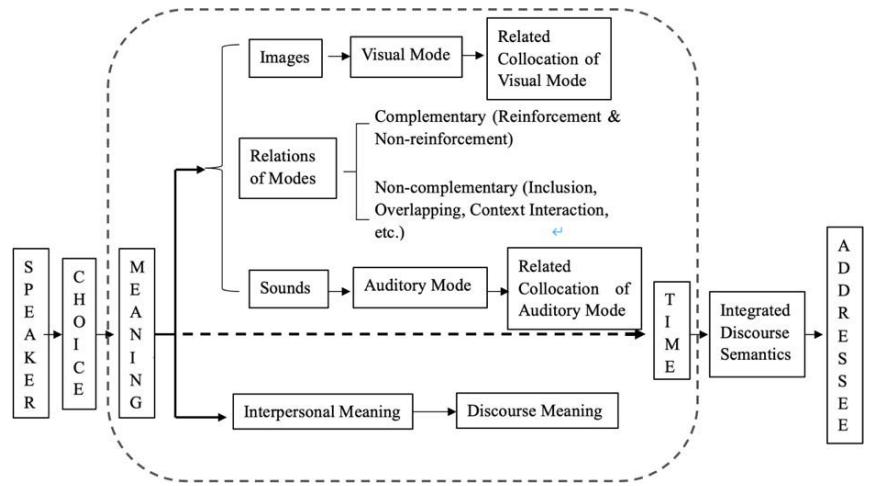

Drawing on the systemic functional framework and Zhang Delu's intermodal relationships, combined with Halliday's concept of interpersonal meaning, this paper aims to design a dynamic multimodal discourse analysis framework for the Apple product launch conference in order to answer the following specific questions:

### c) Research Procedure

The study starts by identifying the different modes in Apple Event 2018. Then, following Zhang Delu's definition of intermodal relationships, it identifies the relationships between the different modes in the iPhone launch segment of the conference. Finally, based on these two stages, it discusses how the different modes interact and cooperate with each other to generate interpersonal meaning during Apple's product launch conference.

Figure 3.3: Synthetic Theoretical Framework for Multimodal Discourse Analysis

### d) Data Collection and Analysis

The corpus was selected from Apple's 2018 product launch event, which is held once a year in autumn, and the video of the event was obtained from Apple's official YouTube channel. The computer's screenshot function was used to capture video frames and pictures containing multiple modes, and Xunfei listening software was used to convert the audio track into text, which together constituted the corpus of this study.

The corpus selected in this paper is a dynamic multimodal corpus about one hour and five minutes long (37'02" to 1h 41'55") from Apple's press conference on September 13, 2018, and includes audio, video and spoken demonstration.

## IV. RESULTS AND DISCUSSION

### a) Distribution of Different Modes in Apple Product Launch Conference 2018

The following table presents the data collected from the corpus.

Table 4.1: Frequency of Different Modes

<table><tr><td>Mode</td><td>Frequency</td></tr><tr><td>Oral Presentation</td><td>540</td></tr><tr><td>Music</td><td>5</td></tr><tr><td>Pictures</td><td>221</td></tr><tr><td>Animations</td><td>16</td></tr><tr><td>Texts</td><td>67</td></tr></table>

In the product launch conference, the interaction and cooperation of the visual and auditory modes are indispensable. Throughout the event, oral presentation is dominant: it is the primary mode in terms of both quantity and quality. In addition, a few pieces of music support the auditory effect. The visual modes make their impact mainly through texts, pictures and videos. They have two effects on the auditory modes: first, they point out the essential parts of the conference to make the key information more explicit; second, they complement the auditory modes by supplying information that is absent or ambiguous for the audience.

### b) Different Modes' Relations in Apple Product Launch Conference

#### i. Combination of Different Modes in Apple Product Launch Conference

In this corpus, text, pictures and video (animation) belong to the visual mode, while music and oral presentation belong to the auditory mode. Pairing at least one visual medium with at least one auditory medium therefore yields 21 possible combinations of these five media.

Table 4.2: Frequency of Different Combinations

<table><tr><td>Combination</td><td>Frequency</td></tr><tr><td>Text + Oral Presentation</td><td>81</td></tr><tr><td>Picture + Oral Presentation</td><td>179</td></tr><tr><td>Animation + Oral Presentation</td><td>48</td></tr><tr><td>Text + Music</td><td>0</td></tr><tr><td>Picture + Music</td><td>0</td></tr><tr><td>Animation + Music</td><td>2</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>191</td></tr><tr><td>Text + Oral Presentation + Animation</td><td>5</td></tr><tr><td>Oral Presentation + Picture + Animation</td><td>0</td></tr><tr><td>Text + Music + Picture</td><td>0</td></tr><tr><td>Music + Text + Animation</td><td>0</td></tr><tr><td>Music + Picture + Animation</td><td>0</td></tr><tr><td>Oral Presentation + Music + Text</td><td>0</td></tr><tr><td>Oral Presentation + Music + Animation</td><td>27</td></tr><tr><td>Oral Presentation + Music+ Picture</td><td>0</td></tr><tr><td>Oral Presentation + Music + Text + Picture</td><td>0</td></tr><tr><td>Oral Presentation + Music + Text + Animation</td><td>1</td></tr><tr><td>Oral Presentation + Music + Picture + Animation</td><td>0</td></tr><tr><td>Oral Presentation + Picture + Text + Animation</td><td>0</td></tr><tr><td>Music + Picture + Text + Animation</td><td>0</td></tr><tr><td>Music + Picture + Text + Animation + Oral Presentation</td><td>0</td></tr></table>
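The count of 21 combinations can be checked by enumeration. The following is a minimal Python sketch, not part of the original study. It assumes, based on the rows of Table 4.2, that a valid combination must contain at least one visual and at least one auditory medium; the function name `cross_modal_combinations` is purely illustrative.

```python
from itertools import combinations

# The five media named in the text, grouped by mode.
VISUAL = ("Text", "Picture", "Animation")
AUDITORY = ("Oral Presentation", "Music")


def cross_modal_combinations(visual=VISUAL, auditory=AUDITORY):
    """Return every combination that mixes at least one visual
    and at least one auditory medium (assumed selection rule)."""
    media = visual + auditory
    result = []
    for size in range(2, len(media) + 1):
        for combo in combinations(media, size):
            has_visual = any(m in visual for m in combo)
            has_auditory = any(m in auditory for m in combo)
            if has_visual and has_auditory:
                result.append(combo)
    return result


combos = cross_modal_combinations()

# Count how many combinations exist for each number of media.
by_size = {}
for combo in combos:
    by_size[len(combo)] = by_size.get(len(combo), 0) + 1

print(len(combos), by_size)  # 21 {2: 6, 3: 9, 4: 5, 5: 1}
```

Under this assumption the enumeration gives 6 two-media, 9 three-media, 5 four-media and 1 five-media combination, which matches the 21 rows of Table 4.2.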

In this study, only eight collocations occur at the Apple event, as listed in Table 4.2. Oral presentation is the main auditory medium at Apple's press conference, so many of the collocations, five groups in all, involve it. In addition, to convey a distinctive product texture, animations always appear together with oral presentation or music.

In general, to keep the message clear and simple, it is rare to use many media at the same time. As the results show, the combination of two media is the most common collocation, occurring 211 times in total. Three media were combined 25 times and four media once, while all five media never appeared together during the whole conference.

#### ii. Different Combinations in Complementary Relation

To identify the similarities and differences in the frequency of the multimodal relations and the synergies between the auditory and visual modes in the Apple product launch conference, the figures are summarized as follows.

##### 1. Reinforced Relation

In the reinforced relationship, one mode acts as the major channel of communication, while the other modes play merely a complementary role. The two most commonly used relations are illustrated below with examples.

Table 4.3: Frequency of Different Combinations in Complementary Relationship

<table><tr><td>Relationship</td><td>Combination</td><td>Frequency</td></tr><tr><td rowspan="4">Highlighting</td><td>Text + Oral Presentation</td><td>60</td></tr><tr><td>Picture + Oral Presentation</td><td>33</td></tr><tr><td>Animation + Oral Presentation</td><td>14</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>48</td></tr><tr><td rowspan="4">Primary and Secondary</td><td>Picture + Oral Presentation</td><td>68</td></tr><tr><td>Animation + Oral Presentation</td><td>10</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>119</td></tr><tr><td>Text + Oral Presentation + Animation</td><td>5</td></tr><tr><td rowspan="3">Extension</td><td>Text + Oral Presentation</td><td>7</td></tr><tr><td>Picture + Oral Presentation</td><td>4</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>2</td></tr><tr><td>Intersection</td><td>None</td><td>None</td></tr><tr><td rowspan="2">Coalition</td><td>Oral Presentation + Music + Animation</td><td>19</td></tr><tr><td>Oral Presentation + Music + Text + Animation</td><td>1</td></tr><tr><td rowspan="6">Coordination</td><td>Text + Oral Presentation</td><td>1</td></tr><tr><td>Picture + Oral Presentation</td><td>64</td></tr><tr><td>Animation + Oral Presentation</td><td>24</td></tr><tr><td>Animation + Music</td><td>2</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>12</td></tr><tr><td>Oral Presentation + Music + Animation</td><td>1</td></tr></table>
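The per-relationship totals can be recomputed directly from Table 4.3. The following is a minimal Python sketch, not part of the original study; the dictionary layout and the names `TABLE_4_3` and `relationship_totals` are illustrative assumptions, and the frequencies are transcribed from the table above.

```python
# Frequencies transcribed from Table 4.3 (relationship -> combination -> count).
TABLE_4_3 = {
    "Highlighting": {
        "Text + Oral Presentation": 60,
        "Picture + Oral Presentation": 33,
        "Animation + Oral Presentation": 14,
        "Text + Oral Presentation + Picture": 48,
    },
    "Primary and Secondary": {
        "Picture + Oral Presentation": 68,
        "Animation + Oral Presentation": 10,
        "Text + Oral Presentation + Picture": 119,
        "Text + Oral Presentation + Animation": 5,
    },
    "Extension": {
        "Text + Oral Presentation": 7,
        "Picture + Oral Presentation": 4,
        "Text + Oral Presentation + Picture": 2,
    },
    "Intersection": {},  # no instances recorded in the table
    "Coalition": {
        "Oral Presentation + Music + Animation": 19,
        "Oral Presentation + Music + Text + Animation": 1,
    },
    "Coordination": {
        "Text + Oral Presentation": 1,
        "Picture + Oral Presentation": 64,
        "Animation + Oral Presentation": 24,
        "Animation + Music": 2,
        "Text + Oral Presentation + Picture": 12,
        "Oral Presentation + Music + Animation": 1,
    },
}


def relationship_totals(table):
    """Sum the frequencies of all combinations under each relationship."""
    return {relation: sum(rows.values()) for relation, rows in table.items()}


totals = relationship_totals(TABLE_4_3)
for relation, total in totals.items():
    print(f"{relation}: {total}")
```

Running the sketch lists one total per relationship, which can then be compared with the figures quoted in the running text.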

###### (1) Highlighting Relation

The highlighting relationship appears 155 times in total. Its main purpose is to provide a background that emphasizes the speaker's information. As Table 4.3 shows, 60 of these instances combine oral presentation and text. In this situation, the text mainly serves as background information that emphasizes the theme or the section of the conference, telling the audience what will be introduced in that part. For instance,

(1) Now let's talk about iPhone.

This is the first sentence of the introduction of the new iPhone. The text "iPhone" appears on the background screen to inform the audience that the iPhone segment is about to begin and to emphasize the speaker's intention, attracting the audience's attention.

###### (2) Primary and Secondary Relation

The primary and secondary relationship is the main means of supplementing meaning during the conference, occurring 202 times in total. From Table 4.3, we can conclude that the combination of oral presentation, picture and text is the most frequent in the primary and secondary relationship, almost twice as frequent as the second most frequent combination, oral presentation and picture. For instance,

From this example, it can be seen that someone who only looks at the picture, without the sound, can barely understand what the picture intends to communicate, unless they hear the speaker explain what the new iPhone XS is made of or read the text on the screen. Likewise, the auditory mode fails to function without the support of the picture: the referent of "It" in this sentence can hardly be identified without the picture showing its subject. Therefore, it can be concluded that the auditory mode serves as the primary means and the visual mode as the secondary one, whose function is to complement and reinforce the auditory mode.

##### 2. Non-reinforced Relation

The non-reinforced relationship is one in which neither of the two modes can be omitted and the modes interact closely with each other. The intersection relationship does not appear during the conference, the coalition relationship appears 20 times, and the coordination relationship is used 105 times in Apple Event 2018.

The coordination relationship combines diverse modes to convey the complete meaning of the discourse. For instance,

(485) It's a 12 megapixel, wide angle camera, the exact same wide-angle camera in the XS and XS Max, so it's our new generation sensor that's larger with bigger pixels, optical image stabilization, twice as many focus pixels, faster f1.8 aperture, Apple design lens and the new improved true tone flash as well.

In this example, two modes are present, the visual and the auditory, and the main mode is the auditory one, as the meaning is primarily expressed by the speaker. However, the audience is exposed to a great deal of technical jargon, which is obscure for laypeople even in written form, let alone in spoken form. Therefore, the oral presentation in this section cannot convey the meaning alone, and the technical terms are illustrated by the picture and text shown on the screen. Neither of the two modes can convey the complete meaning on its own.

#### iii. Different Combinations in Non-Complementary Relation

Table 4.4: Frequency of Different Combinations in Non-complementary Relationship

<table><tr><td>Relationship</td><td>Combination</td><td>Frequency</td></tr><tr><td>Inclusion</td><td>Picture + Oral Presentation</td><td>6</td></tr><tr><td rowspan="3">Overlapping</td><td>Text + Oral Presentation</td><td>13</td></tr><tr><td>Picture + Oral Presentation</td><td>4</td></tr><tr><td>Text + Oral Presentation + Picture</td><td>10</td></tr><tr><td>Context Interaction</td><td>Oral Presentation + Music + Animation</td><td>7</td></tr></table>
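Since every instance of a combination is assigned to one relationship, the per-combination frequencies in Table 4.2 should equal the sums of the corresponding rows in Tables 4.3 and 4.4. The following is a minimal Python sketch of that cross-check, not part of the original study; the names `ROWS`, `TABLE_4_2` and `combination_key` are illustrative, and all numbers are transcribed from the three tables.

```python
from collections import Counter


def combination_key(combination):
    """Order-independent key, e.g. 'Text + Music' -> frozenset({'Text', 'Music'})."""
    return frozenset(part.strip() for part in combination.split("+"))


# (relationship, combination, frequency) rows from Tables 4.3 and 4.4.
ROWS = [
    ("Highlighting", "Text + Oral Presentation", 60),
    ("Highlighting", "Picture + Oral Presentation", 33),
    ("Highlighting", "Animation + Oral Presentation", 14),
    ("Highlighting", "Text + Oral Presentation + Picture", 48),
    ("Primary and Secondary", "Picture + Oral Presentation", 68),
    ("Primary and Secondary", "Animation + Oral Presentation", 10),
    ("Primary and Secondary", "Text + Oral Presentation + Picture", 119),
    ("Primary and Secondary", "Text + Oral Presentation + Animation", 5),
    ("Extension", "Text + Oral Presentation", 7),
    ("Extension", "Picture + Oral Presentation", 4),
    ("Extension", "Text + Oral Presentation + Picture", 2),
    ("Coalition", "Oral Presentation + Music + Animation", 19),
    ("Coalition", "Oral Presentation + Music + Text + Animation", 1),
    ("Coordination", "Text + Oral Presentation", 1),
    ("Coordination", "Picture + Oral Presentation", 64),
    ("Coordination", "Animation + Oral Presentation", 24),
    ("Coordination", "Animation + Music", 2),
    ("Coordination", "Text + Oral Presentation + Picture", 12),
    ("Coordination", "Oral Presentation + Music + Animation", 1),
    ("Inclusion", "Picture + Oral Presentation", 6),
    ("Overlapping", "Text + Oral Presentation", 13),
    ("Overlapping", "Picture + Oral Presentation", 4),
    ("Overlapping", "Text + Oral Presentation + Picture", 10),
    ("Context Interaction", "Oral Presentation + Music + Animation", 7),
]

# Non-zero rows of Table 4.2.
TABLE_4_2 = {
    "Text + Oral Presentation": 81,
    "Picture + Oral Presentation": 179,
    "Animation + Oral Presentation": 48,
    "Animation + Music": 2,
    "Text + Oral Presentation + Picture": 191,
    "Text + Oral Presentation + Animation": 5,
    "Oral Presentation + Music + Animation": 27,
    "Oral Presentation + Music + Text + Animation": 1,
}

# Add up the relationship-level counts for each combination.
per_combination = Counter()
for _relation, combination, frequency in ROWS:
    per_combination[combination_key(combination)] += frequency

# Collect any combination whose Table 4.2 value differs from the summed rows.
mismatches = {
    combination: (frequency, per_combination[combination_key(combination)])
    for combination, frequency in TABLE_4_2.items()
    if per_combination[combination_key(combination)] != frequency
}

print(mismatches or "Table 4.2 matches Tables 4.3 and 4.4")
```

With the values as transcribed, the check reports no mismatches, so the three tables are mutually consistent at the level of individual combinations.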

Table 4.4 shows the frequency of different combinations in the non-complementary relationship in Apple Event 2018. The non-complementary relation is one in which the second mode does not contribute to the overall meaning. It can be further divided into three kinds: inclusion, overlapping and context interaction.

The inclusion relationship is not frequently used during Apple Event 2018, appearing only 6 times in total. The context interaction relation appears 7 times in total, all of which combine oral presentation, animation and music.

From Table 4.4, it can be seen that the overlapping relationship appears 27 times in the conference, 13 of which combine oral presentation and text. The speakers tended to say something unrelated to the topic during transition phases, such as greetings, where the meaning is mainly carried by the text. The speakers also liked to emphasize content by putting words on the screen while saying exactly the same words to the audience. It is therefore unsurprising that the combination of oral presentation and text is the most frequent in the overlapping relationship.

### c) Analysis of the Interpersonal Metafunction

According to Halliday's Systemic Functional Grammar, modality plays an important role in explaining the relationship between speakers. Whether verbal or nonverbal, it influences the interpersonal relationship. Owing to limited time and experience, this study selected only the modal operator "can" as the object through which to explore the interpersonal meaning in Apple Event 2018.

As for the interpersonal metafunction in the complementary relationship, the modal operator "can" appears 57 times, including 10 times in the highlighting relationship, 25 times in the primary and secondary relationship and 22 times in the coordination relationship. The modal operator thus occurs most often in the primary and secondary relationship, which functions as the main means of explanation during the product launch conference. This suggests that the speakers tend to use the reinforced relationship to convey a positive meaning to the audience.

As for the interpersonal metafunction in the non-complementary relationship, the modal operator "can't" appears four times, all in the overlapping relationship. The non-complementary relationship means that a single mode has already expressed the whole meaning and there is no need for other modes to supplement it. The use of the modal operator "can" helps to express positive information to the audience, while low-value modal operators with high politeness in the overlapping relationship convey less meaning during the conference.
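Tallies of this kind could in principle be reproduced from a transcript in which each utterance is labeled with its intermodal relationship. The following is a minimal Python sketch under that assumption, not the procedure used in the study. The utterances, the relationship labels attached to them and the name `count_can_by_relation` are all hypothetical and invented for illustration only.

```python
import re
from collections import Counter

# Matches "can" as a whole word, but not the "can" inside "can't";
# "cannot" is also excluded because no word boundary follows "can" there.
CAN_PATTERN = re.compile(r"\bcan\b(?!['’]t)", re.IGNORECASE)


def count_can_by_relation(segments):
    """Count occurrences of the modal operator 'can' per relationship label."""
    counts = Counter()
    for relation, utterance in segments:
        counts[relation] += len(CAN_PATTERN.findall(utterance))
    return counts


# Hypothetical annotated utterances (not taken from the corpus).
segments = [
    ("primary and secondary", "You can unlock it with a glance, and you can pay with it too."),
    ("highlighting", "Now you can see the new display."),
    ("overlapping", "We can't wait for you to try it."),
    ("coordination", "It cannot be scratched easily."),
]

counts = count_can_by_relation(segments)
for relation, n in counts.items():
    print(f"{relation}: {n}")
```

Keeping "can" separate from "can't" and "cannot" matters here because the analysis treats the positive and negative operators as different choices; a plain substring search would conflate them.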

## V. CONCLUSION

It is clear that the Apple Event draws on rich semiotic resources, represented by multimodal features that mainly include visual and auditory modes. The detailed analysis shows that oral presentation by the speakers is the main medium during the conference, which means that the auditory mode serves as the primary mode for constructing meaning for the audience, while the visual modes are complementary to the auditory modes. On most occasions the relation between the visual and auditory modes is complementary, especially the non-reinforcement relation. In terms of the interpersonal function, the low-value modal operator "can" shows a strong tendency to occur in the complementary relationship, where it plays an important role in constructing the speakers' positive attitude and evaluation. With few questions and no commands, the use of the modal operator indicates that the discourse is highly polite.

In short, every mode has its place in expressing and conveying meaning, and their varied collocation and interaction make the whole event a success. The use of the low-value modal operator establishes a closer relationship between the speaker and the audience and seeks to persuade and encourage the audience to develop an intention to purchase.

One limitation of this study is that it used only the iPhone launch segment as its corpus. This is not enough to establish the exact distribution rules of the synergies between the auditory and visual modes, so the findings may be less persuasive to some extent; in addition, a full SFL analysis has not been conducted due to limited knowledge and experience. Further research should conduct larger-scale studies to ensure objectivity. Second, only the auditory and visual modes are analyzed in this study; more comprehensive studies could analyze other modes, whose collocation and interaction should be examined through technical means rather than manually. Third, there are few criteria for segmenting dynamic discourse, so a framework for transcribing dynamic multimodal discourse should be studied further.


References


Roland Barthes (1977). Reading Pop Culture as Intellectual Obligation.

Anthony Baldry, Paul Thibault (2006). Applications of multimodal concordances.

J Bateman (2014). Using multimodal corpora for multimodal research.

M Bednarek, J Martin (2010). New Discourse on Language: Functional Perspectives on Multimodality, Identity, and Affiliation.

Emilia Djonov (2017). Website hierarchy and the interaction between content organization, webpage and navigation design: A systemic functional hypermedia discourse analysis perspective.

Fei Victor Lim (2017). Investigating intersemiosis: A systemic functional multimodal discourse analysis of the relationship between language and gesture in classroom discourse.

M Halliday (1978). Language as Social Semiotic: The Social Interpretation of Language and Meaning.

M Halliday (1994). An Introduction to Functional Grammar.

Rick Iedema (2003). Multimodality, resemiotization: extending the analysis of discourse as multi-semiotic practice.

J Kador (2006). 50 High-impact Speeches and Remarks.

B Kast (2007). iKeynote-Representation, Rhetoric and Visual Communication by Steve Jobs in His Keynote at Macworld.

G Kress, T van Leeuwen (2006). Reading Images: The Grammar of Visual Design.

J Knox (2013). Online newspapers: structure and layout.

V Lim Fei (2004). Developing an integrative multi-semiotic model.

D Machin (2007). Introduction to multimodal analysis.

J Martin (1992). English Text: System and Structure.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

Liu Sijiao. 2026. “A Study of Different Modes’ Synergy from the Perspective of Multimodal Discourse: Taking Apple Product Launch Conferences as an Example”. Global Journal of Human-Social Science - G: Linguistics & Education GJHSS-G Volume 23 (GJHSS Volume 23 Issue G3): .
