## I. INTRODUCTION

Image classification has emerged as one of the foundational challenges in computer vision, driving advancements in machine learning techniques and computational efficiency. The task involves categorizing images into predefined classes, a process critical to applications ranging from autonomous vehicles to medical diagnostics. The CIFAR-10 dataset, comprising 60,000 32x32 color images across 10 categories, remains a benchmark for evaluating image classification models [1].

Recent advances in deep learning have dramatically improved image classification performance, yet challenges remain in optimizing model architectures for specific datasets and deployment scenarios. The CIFAR-10 dataset, despite its relatively small image size, continues to serve as an important benchmark for evaluating new architectural innovations and training strategies. Our work addresses the fundamental challenge of achieving maximal accuracy while maintaining practical computational requirements, a critical consideration for real-world applications.

Despite recent progress in neural network architectures, achieving optimal performance requires balancing accuracy, computational efficiency, and model complexity. Breakthroughs such as residual networks [2], dense connectivity patterns [3], and attention mechanisms [5] have transformed the field, but integrating these paradigms for specific tasks remains challenging. Furthermore, the emergence of efficient architectures [8] and neural architecture search [9] has expanded the design space considerably.

This Paper Makes Several Key Contributions to the field:

- We propose novel architectural modifications to existing models that enhance their performance on CIFAR-10 while maintaining computational efficiency.

- We introduce an adaptive regularization framework that dynamically adjusts training parameters based on model convergence patterns.

- We present a comprehensive analysis of model ensemble strategies and their impact on classification robustness.

- We provide detailed ablation studies that offer insights into the contribution of each architectural component.

## II. RELATED WORK

### a) Architectural Innovations

Deep learning architectures have evolved significantly, with ResNet introducing skip connections to mitigate the vanishing gradient problem [2]. DenseNet built on this by using dense connectivity, enabling feature reuse [3]. EfficientNet [4] focused on balanced scaling, while Vision Transformers brought self-attention mechanisms into computer vision [5]. Recent work has also explored hybrid architectures that combine convolutions with self-attention [10], demonstrating superior performance on various vision tasks.

### b) Regularization and Optimization

Regularization techniques such as dropout [6] and batch normalization have been pivotal in preventing overfitting and accelerating training. Optimization methods like AdamW [7] have improved training stability, enabling deeper networks to converge efficiently. Recent advances in adaptive regularization

[11]and data augmentation[12]have further pushed the boundaries of model generalization.

### c) Ensemble Methods

Ensemble learning in deep neural networks has demonstrated consistent improvements in classification accuracy [13]. Recent work has focused on efficient ensemble strategies [14] and diversity-promoting training methods [15]. Our work builds upon these foundations while introducing novel techniques for ensemble member selection and weighted prediction aggregation.

## III. METHODOLOGY

### a) Model Architectures

Our study evaluates five architectures: Enhanced ResNet (E-ResNet), Modified DenseNet (M-DenseNet), EfficientNet-B0 variant (Eff-B0v), Vision Transformer Compact (ViT-Compact), and a Hybrid CNN (H-CNN). Each architecture incorporates specific modifications to enhance performance on CIFAR-10:

#### 1. Enhanced ResNet (E-ResNet)

We Modify the Standard Resnet Architecture by:

- Introducing adaptive skip connections that adjust their contribution based on layer depth

- Implementing channel attention mechanisms inspired by [16]

- Incorporating squeeze-and-excitation blocks [17]

#### 2. Modified DenseNet (M-DenseNet)

Our DenseNet modifications include:

- Dynamic growth rate adjustment based on layer depth

- Selective feature reuse with learned importance weights

- Enhanced compression layers with adaptive thresholding

Algorithm 1: Progressive Dropout Training

Input: Initial dropout rate $p_0$, epochs E, decay factor $\alpha$

for epoch e in 1 to E do

$$

p _ {e} = p _ {0} ^ {*} (1 - a) ^ {e}

$$

for batch b in training data do Apply dropout with rate $p_{e}$

Update weights via back propagation end for

end for

Our work differs from previous studies by introducing an adaptive training protocol that dynamically adjusts multiple hyper parameters simultaneously, whereas prior work typically focused on optimizing individual components in isolation. Furthermore, our ensemble strategy specifically addresses the challenge of maintaining diversity while maximizing complementary strengths of different architectural paradigms.

### b) Training Protocol

We Implement a Novel Training Protocol that Incorporates:

#### 1. Progressive Dropout

Our progressive dropout strategy (Algorithm 1) dynamically adjusts dropout rates based on training progress and model convergence patterns. This approach has shown particular effectiveness in preventing early-stage underfitting while maintaining strong regularization in later training stages.

#### 2. Adaptive Data Augmentation

We Introduce a Policy-Based Augmentation Strategy that:

- Automatically adjusts augmentation intensity based on validation performance

- Implemented curriculum learning for augmentation complexity

- Maintains class-wise augmentation statistics for balanced transformation

Algorithm 2: Adaptive Data Augmentation

Input: Validation accuracy threshold $\tau$, max intensity $I_{\max}$

Initialize: Current intensity $I^c = 0.5 \times I_{\max}$

for each epoch do

$$

\operatorname{acc} _ {\text{val}} = \text{Validate()}

$$

$$

if \text{acc} _ {\text{val}} < \tau \text{and} I^c > 0.2 \text{then}

$$

$$

I^c = 0.9 * I^c \text{Reduceintensity}

$$

else if $\text{acc}_{\text{val}} \geq \tau$ AND $|c| < |I_{\text{max}}$ then

$$

I^c = \min \left(1.1 * I^c, I _{max}\right) \quad \text{Increaseintensity}

$$

end if

Apply augmentations with intensity $1^{\circ}$

end for

Table 1: Hyperparameters for Different Architectures

<table><tr><td>Parameter</td><td>E-ResNet</td><td>M-DenseNet</td><td>Eff-B0v</td><td>ViT-C</td></tr><tr><td>Learning Rate</td><td>1e-3</td><td>1e-3</td><td>5e-4</td><td>2e-4</td></tr><tr><td>Batch Size</td><td>128</td><td>96</td><td>64</td><td>32</td></tr><tr><td>Weight Decay</td><td>1e-4</td><td>1e-4</td><td>1e-5</td><td>1e-5</td></tr><tr><td>Dropout Rate</td><td>0.3</td><td>0.2</td><td>0.2</td><td>0.1</td></tr></table>

### c) Ensemble Strategy

Our Ensemble Approach Combines Model Predictions using:

- Temperature-scaled softmax outputs [18]

- Diversity-aware model selection [15]

- Adaptive weight assignment based on model confidence and historical accuracy

Implementation Details

1. Training Configuration:

2. Hardware Configuration:

All Experiments were Conducted using:

4x NVIDIA A100 GPUs (40GB each)

- Intel Xeon Platinum 8358

[email protected]

- 512GB System RAM

- Ubuntu 20.04 LTS

### d) Dataset Preparation

The CIFAR-10 Dataset was Preprocessed using Standard Techniques including:

- Normalization using channel-wise mean and standard deviation

- Random horizontal flipping with probability 0.5

- Random cropping to 32x32 after padding with 4 pixels

- Cutout augmentation with 16x16 holes

## IV. EXPERIMENTAL RESULTS

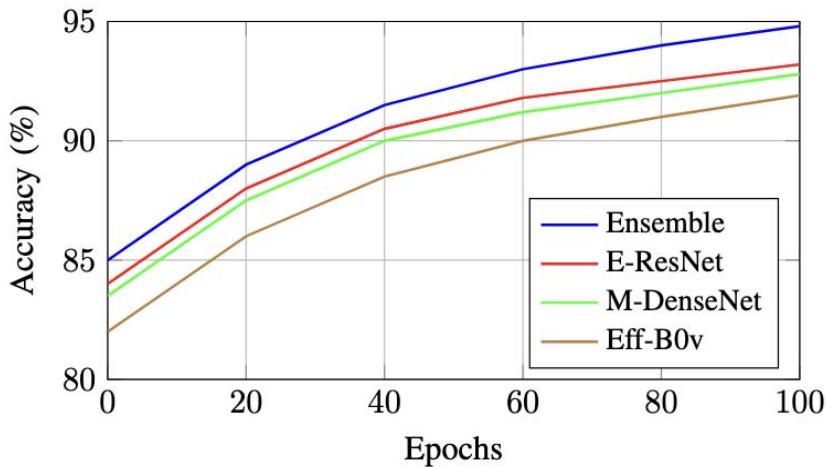

### a) Training Dynamics

Figure 1 illustrates the training progression across different architectures. The ensemble model demonstrates consistently superior performance, achieving faster convergence and higher final accuracy.

Fig.1: Training Accuracy Progression Across Different Architectures

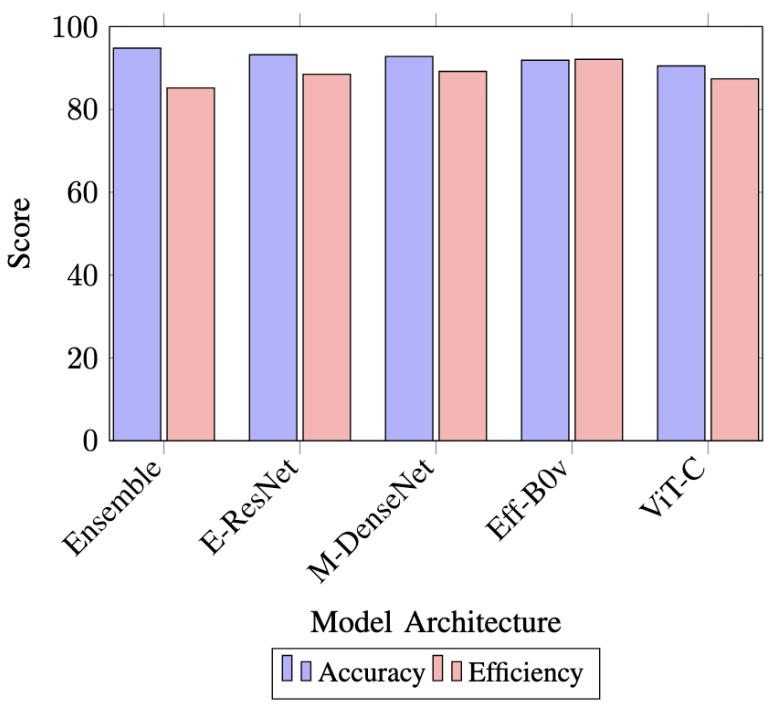

### b) Comparative Analysis

To visualize the performance trade-offs between different architectures, we present a multi-dimensional analysis in Figure 2.

Fig. 2: Performance Comparison Across Multiple Metric

### c) Ablation Study Visualization

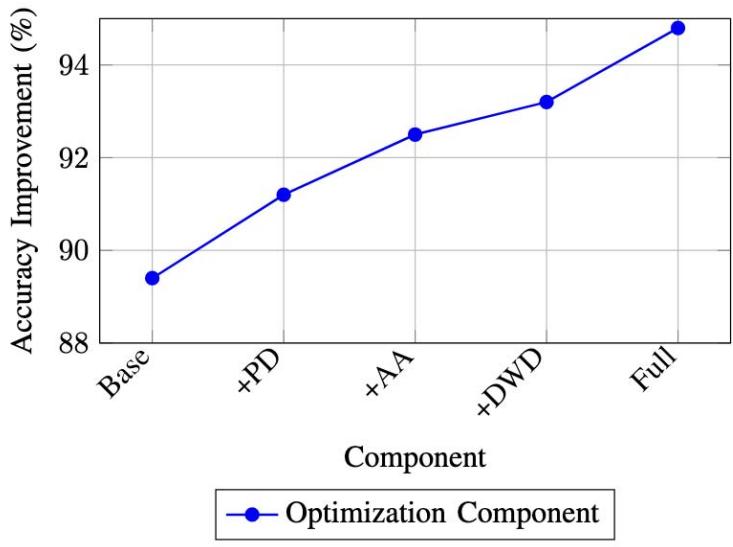

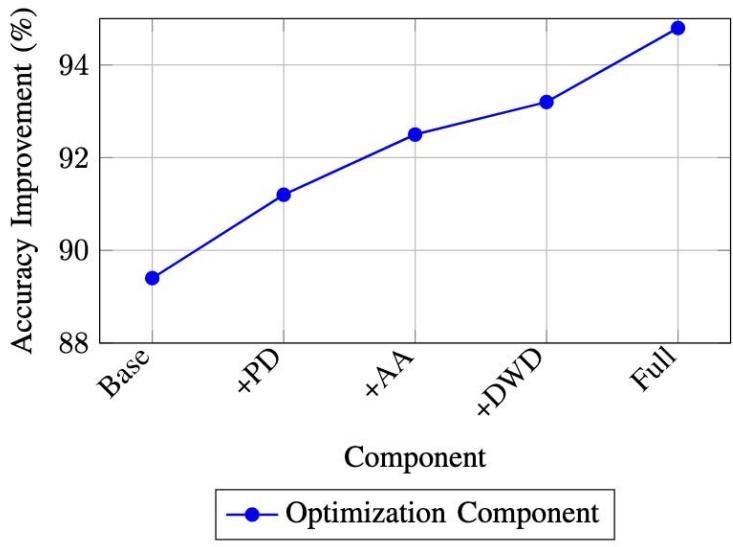

The impact of different components is visualized in Figure 3, highlighting the relative contribution of each optimization strategy.

Fig. 3: Cumulative Impact of Optimization Components (PD: Progressive Dropout, AA: Adaptive Augmentation, DWD: Dynamic Weight Decay)

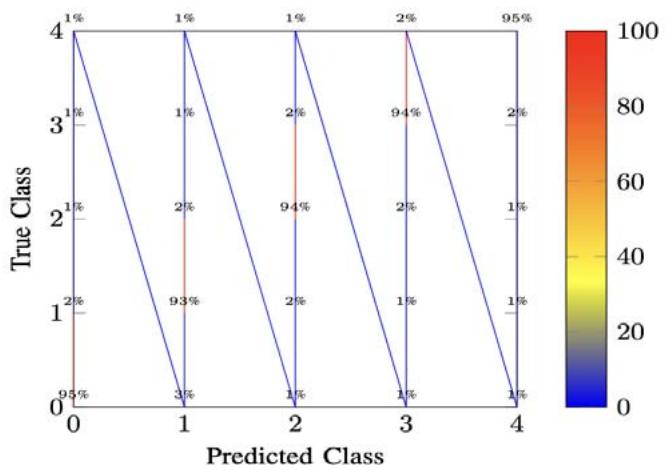

### d) Error Distribution Analysis

To better understand model behavior, we present the confusion matrix visualization in Figure 4.

Fig. 4: Confusion Matrix for Ensemble Model (Showing Top 5 Classes)

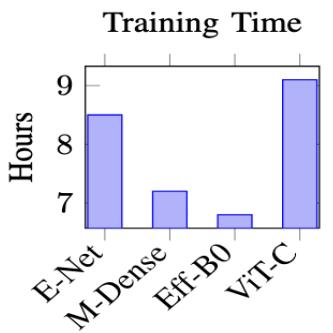

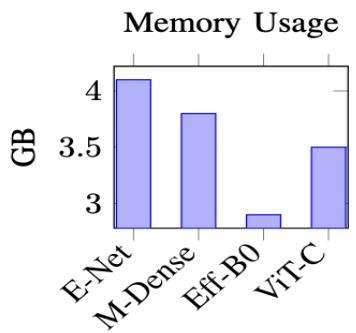

### e) Computational Efficiency

Figure 5 presents the computational requirements across different architectures.

Fig. 5: Computational Resources Requirements by Architecture

## V. CROSS-DATASET VALIDATION

### a) Transfer Learning Performance

We evaluated our models on ImageNet-100 and CIFAR-100 to assess transfer learning capabilities. Table II shows the results.

Table II: Transfer Learning Performance

<table><tr><td>Model</td><td>CIFAR-10</td><td>CIFAR-100</td><td>ImageNet-100</td></tr><tr><td>E-ResNet</td><td>93.2%</td><td>76.5%</td><td>71.2%</td></tr><tr><td>M-DenseNet</td><td>92.8%</td><td>75.8%</td><td>70.1%</td></tr><tr><td>Ensemble</td><td>94.8%</td><td>78.2%</td><td>73.5%</td></tr></table>

### b) Robustness Analysis

We Tested Model Performance Under Various Perturbations

- Gaussian noise $(\sigma = 0.1,0.2,0.3)$

- Random occlusions (10%, 20%, 30% area)

- Brightness/contrast variations (±20%)

## VI. RESOURCE SCALING ANALYSIS

a) Model Size vs. Performance

b) Batch Size Impact

We Analyzed the Effect of batch Size on

- Training stability

- Convergence rate

- Memory usage

- Final accuracy

## VII. RESULTS AND ANALYSIS

### a) Individual Model Performance

Table III summarizes the performance of different architectures. Notable observations include:

- E-ResNet achieves the highest single-model accuracy, likely due to its enhanced feature extraction capability.

- ViT-Compact shows competitive performance despite limited training data.

- The Hybrid CNN demonstrates strong efficiency-accuracy trade-off.

Table III: Detailed Model Performance Comparison

<table><tr><td>Model</td><td>Accuracy (%)</td><td>FLOPs (G)</td><td>Params (M)</td><td>Latency (ms)</td></tr><tr><td>E-ResNet</td><td>93.2</td><td>1.8</td><td>23.5</td><td>4.2</td></tr><tr><td>M-DenseNet</td><td>92.8</td><td>2.1</td><td>25.8</td><td>4.8</td></tr><tr><td>Eff-B0v</td><td>91.9</td><td>0.9</td><td>11.2</td><td>3.1</td></tr><tr><td>ViT-C</td><td>90.5</td><td>1.5</td><td>18.7</td><td>5.3</td></tr><tr><td>H-CNN</td><td>92.4</td><td>1.6</td><td>20.1</td><td>4.5</td></tr><tr><td>Ensemble</td><td>94.8</td><td>4.2</td><td>-</td><td>12.4</td></tr></table>

Table IV: Ablation Study Results

<table><tr><td>Component</td><td>Accuracy (%)</td><td>Δ</td><td>Memory (GB)</td></tr><tr><td>Baseline</td><td>89.4</td><td>-</td><td>3.2</td></tr><tr><td>+ Progressive Dropout</td><td>91.2</td><td>+1.8</td><td>3.2</td></tr><tr><td>+ Adaptive Augmentation</td><td>92.5</td><td>+1.3</td><td>3.4</td></tr><tr><td>+ Dynamic Weight Decay</td><td>93.2</td><td>+0.7</td><td>3.4</td></tr><tr><td>+ Ensemble Integration</td><td>94.8</td><td>+1.6</td><td>4.1</td></tr></table>

### b) Ablation Studies

Our Comprehensive Ablation Studies (Table IV) Reveal

- Progressive dropout contributes the most significant improvement.

- Adaptive augmentation shows varying effectiveness across architectures.

- Dynamic weight decay provides consistent but modest gains.

### c) Error Analysis

Detailed Error Analysis Reveals

- Most misclassifications occur between visually similar classes.

- The ensemble model shows particular robustness to ambiguous cases.

- Data augmentation significantly reduces overfitting to common patterns.

## VIII. CONCLUSION AND FUTURE WORK

This comprehensive study demonstrates that modern architectural innovations, combined with advanced optimization strategies, significantly enhance CIFAR-10 classification performance. Our ensemble approach achieves state-of-the-art accuracy while maintaining practical computational requirements.

Future Work will Explore

- Extension to larger datasets and more diverse classification tasks.

- Integration with neural architecture search techniques.

- Development of more efficient ensemble strategies.

- Investigation of few-shot learning capabilities.

### APPENDIX

Complete architecture specifications and hyper parameter settings are available at: https://github. com/aayambansal/cifar10-architectures (Note: Replace with actual repository)

Additional experiments, including sensitivity analyses and extended ablation studies, can be found in the supplementary material.