The broad use of artificial intelligence in commercial settings has significantly changed how businesses manage data architecture and security systems. This study examines the security implications of federated versus centralized data architectures in AI-augmented environments. It pays particular attention to the interrelationships between data sovereignty, access control mechanisms, encryption techniques, and regulatory compliance requirements. Federated architectures keep data local and support collaborative AI through privacy-preserving techniques. This particularly benefits enterprises operating across multiple jurisdictions with strict data localization requirements. Their decentralized design also provides built-in resilience against security breaches by limiting the scope of exposure, while improving real-time threat detection and response.

## I. INTRODUCTION

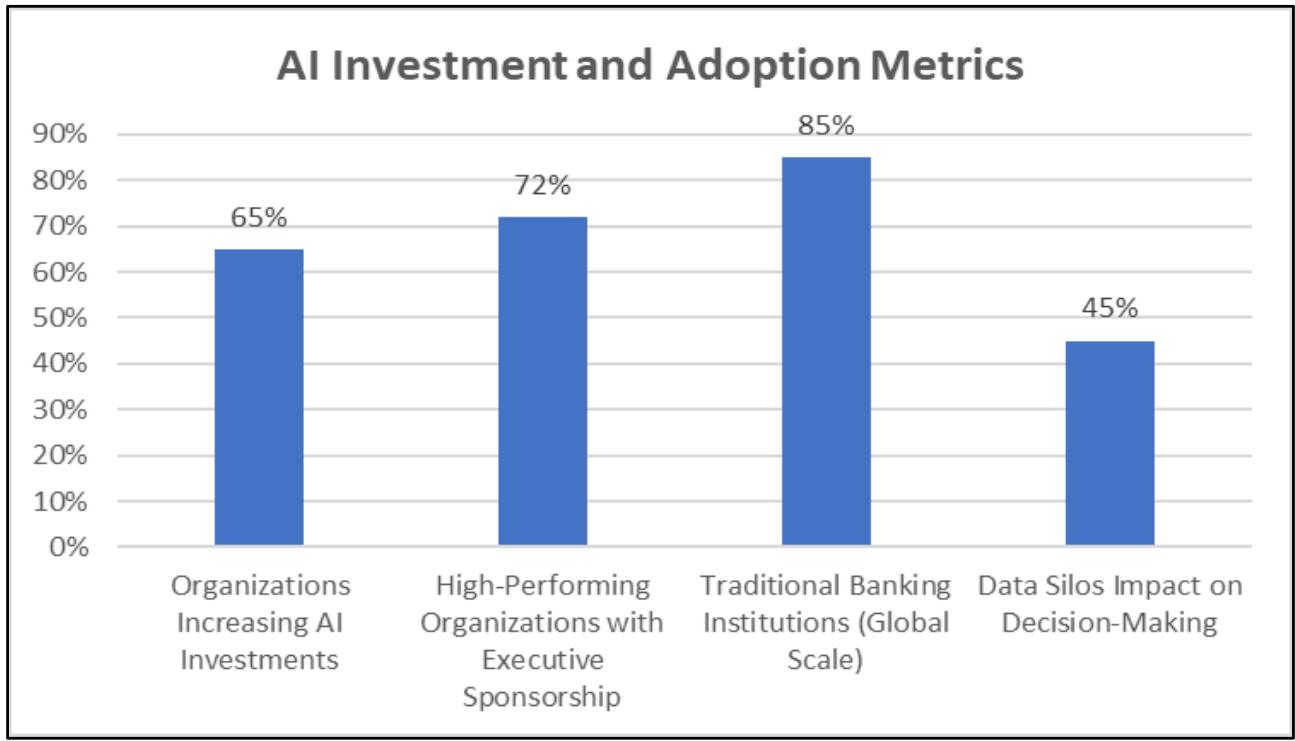

In recent years, changing customer expectations, regulatory demands, and competitive pressure have rapidly accelerated the digital transformation of financial services. Recent industry analysis indicates that 65% of organizations have notably increased their AI investments, and that companies are seeing considerable value from AI implementation efforts [1]. Traditional financial institutions, especially those operating internationally, face a complex task: they must modernize legacy systems while maintaining rigorous security controls and meeting regulatory requirements.

This case study examines the extensive transformation of a global retail bank. The bank moved from isolated legacy database systems to a unified, secure, cloud-based, AI-augmented data architecture. It serves more than 50 million customers in 40 countries, and it recognized that its existing data framework had become a major obstacle to innovation and competitiveness. Industry research shows that companies adopting comprehensive data and AI transformation strategies achieve measurable gains in operational efficiency and competitive advantage [2]. The bank's legacy systems had grown over years of organic expansion and acquisitions. They created data silos that obstructed real-time decision-making, limited analytical capabilities, and increased operational risk. The bank's executives recognized the need for a complete architectural overhaul. The new architecture would enable advanced analytics, strengthen fraud detection, improve customer personalization, and establish a scalable foundation for future growth.

This change represents more than a technological upgrade. It reflects a strategic shift toward data-informed decision-making that equips the institution to compete in an increasingly digital financial environment. Studies show that effective AI implementation requires committed leadership: 72% of top-performing organizations exhibit strong executive backing for data and AI projects [1]. The initiative therefore went beyond changes to the technical architecture. It also included organizational restructuring, process redesign, and cultural change to promote a more agile, analytics-focused operating model. Leadership teams that pursue comprehensive transformation plans generally report better decision-making and improved customer engagement metrics across operational areas [2].

Figure 2: Organizational AI Transformation Adoption Rates and Leadership Commitment Metrics Across the Financial Services Sector [1, 2]

## II. LEGACY ARCHITECTURE CHALLENGES AND STRATEGIC IMPERATIVES IN BANKING TRANSFORMATION

Before the transformation, the bank's data environment illustrated the difficulties that traditional financial institutions face: a fragmented ecosystem of more than 200 distinct systems. These included mainframe applications from the 1980s, departmental databases, and numerous third-party solutions inherited through mergers and acquisitions [3]. Integrating such legacy systems carries considerable technical debt, because outdated technologies hinder modern data access and real-time processing. Organizational data silos severely restrict integration. They force business analysts and data scientists to spend considerable time on data preparation instead of generating analytical value [3].

Limited real-time data availability was especially damaging for fraud detection, where processing delays translate directly into financial losses. Campbell notes that legacy systems frequently lack standardized APIs and modern integration frameworks, which creates data-flow bottlenecks between core business applications [3]. The disjointed systems required complex extract, transform, and load (ETL) procedures that introduced delays and potential data quality problems across the organization.

Regulatory compliance becomes far more complex when systems apply differing data quality standards and lack consistent audit trails [3]. Fragmentation across platforms also makes it hard for financial institutions to deliver complete regulatory reports on time. When the same customer is represented differently across systems, managing that customer's data becomes particularly difficult. The result is fragmented insights and inconsistent service experiences.

Integrating large volumes of customer data is essential to modern banking, both to provide individualized services and to support real-time decisions. Shkurdoda and Dobosz emphasize that big data analytics allows banks to manage large volumes of customer data for improved fraud detection, risk evaluation, and tailored banking experiences [4]. Modern analytics systems can evaluate transaction patterns as they occur and flag fraudulent activity within milliseconds. Traditional batch processing, by contrast, introduces delays that leave the bank exposed. The strategic case for extensive transformation rests on the recognition that data is the key differentiator in digital banking.

Customer demand for personalized, instantaneous services is rising as fintech firms demonstrate the competitive edge of modern, analytics-focused methods [4]. Big data analytics changes conventional banking practice by enabling predictive modeling, customer behavior analysis, and automated decision-making, all of which greatly improve operational efficiency. Banks using advanced analytics gain significant competitive benefits in customer acquisition, retention, and risk management [4]. Moving from traditional systems to modern data architectures allows millions of transactions to be handled in real time while maintaining security and regulatory compliance. Achieving the anticipated returns in operational efficiency and customer experience, however, demands significant investment in cloud technology, analytical tools, and organizational skills.
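
Inconsistent customer records are a concrete instance of the fragmentation problem described above. The following minimal sketch shows one way to reconcile records that different legacy systems store in different formats. It normalizes names and birth dates, then groups records that share the resulting match key into one customer view. The source-system names, field names, accepted date formats, and exact-match rule are all hypothetical. Production entity resolution usually adds fuzzy matching, further identifiers, and manual review of uncertain matches.

```python
import unicodedata
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class CustomerRecord:
    source: str       # hypothetical source-system name
    source_id: str    # identifier used inside that system
    full_name: str
    birth_date: str   # formats differ between systems


def normalize_name(name: str) -> str:
    """Strip accents and punctuation, lower-case, and ignore token order."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    cleaned = "".join(c if c.isalnum() else " " for c in no_accents.lower())
    # Sorting tokens lets "DUPONT, Jean" and "Jean Dupont" produce the same key.
    return " ".join(sorted(cleaned.split()))


def normalize_date(value: str) -> str:
    """Convert the (assumed) legacy date formats to ISO 8601."""
    for fmt in ("%Y-%m-%d", "%Y%m%d", "%d/%m/%Y"):
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {value!r}")


def build_unified_view(records):
    """Group records from all systems under a deterministic (name, birth date) key."""
    unified = defaultdict(list)
    for rec in records:
        key = (normalize_name(rec.full_name), normalize_date(rec.birth_date))
        unified[key].append((rec.source, rec.source_id))
    return dict(unified)


records = [
    CustomerRecord("core_banking", "CB-001", "DUPONT, Jean", "19850312"),
    CustomerRecord("cards", "CARD-77", "Jean Dupont", "1985-03-12"),
    CustomerRecord("mortgages", "MTG-9", "José Álvarez", "12/03/1990"),
]
for key, links in build_unified_view(records).items():
    print(key, links)
```

Sorting name tokens trades precision for recall. It can also merge different people whose names contain the same words, which is one reason the birth date is part of the key.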

Table 1: Big Data Analytics Transformation Benefits in Banking [3, 4]

<table><tr><td>Analytics Application</td><td>Processing Mode</td><td>Risk Reduction Factor</td><td>Customer Experience Enhancement</td></tr><tr><td>Fraud Detection</td><td>Real-time (milliseconds)</td><td>High</td><td>Significant</td></tr><tr><td>Risk Assessment</td><td>Automated</td><td>Very High</td><td>Moderate</td></tr><tr><td>Customer Personalization</td><td>Real-time</td><td>Low</td><td>Very High</td></tr><tr><td>Predictive Modeling</td><td>Batch/Real-time</td><td>High</td><td>High</td></tr><tr><td>Transaction Processing</td><td>Real-time</td><td>Medium</td><td>High</td></tr></table>

## III. ARCHITECTURAL FOUNDATION AND AI VALUE ENHANCEMENT

The bank's overhaul centered on a robust data fabric architecture. This fabric would underpin AI-powered analytics while meeting the stringent security standards of financial services. Data fabric architectures significantly increase the value of AI initiatives by offering unified data access layers. These layers remove conventional data silos and enable smooth integration across organizational boundaries [5]. The design followed security-by-design principles, building data protection and privacy into the architecture from the start instead of adding them later. Contemporary data fabric deployments let organizations use their data assets more efficiently for artificial intelligence initiatives. Jonglez notes that data fabric architectures shorten AI model development cycles by offering uniform data access patterns and reducing data preparation effort [5]. Organizations can then concentrate their resources on model development rather than data integration. This shortens time-to-market for AI-driven solutions and business intelligence tools.

The cloud-based data fabric followed a hybrid strategy that used both private and public cloud infrastructure, balancing security needs with operational flexibility. Essential customer data and strictly regulated information remained in the bank's private cloud. Less sensitive analytics and development workloads used public cloud resources for better scalability and cost-effectiveness. This combined model provided the flexibility that different use cases required while preserving control over sensitive information. Implementing a data fabric also requires careful attention to scalability, so that enterprise-level workloads can be supported without weakening security controls. Atlan's research suggests that effective data fabric deployments emphasize modular architectures that can scale horizontally across business units while maintaining uniform governance frameworks [6]. The platform therefore includes distributed processing that accommodates growing data volumes and user numbers while maintaining performance and security compliance.

The data fabric consists of several functional layers. The first is a unified ingestion layer that handles both batch and real-time data streams from across the bank's ecosystem. Data integration components used machine learning techniques to detect and correct data quality problems automatically, which reduced the manual effort of data preparation throughout analytical processes. The platform adopted a schema-on-read approach, which permitted flexible data modeling while governance standards were upheld across the organization. Built-in artificial intelligence and machine learning features supported automated data discovery, classification, and lineage tracking across enterprise data resources. Data fabric architectures add value to AI through automated data cataloging and metadata management features that improve model accuracy and lower development costs [6]. Natural language processing features allowed business users to query data through conversational interfaces. This made insights accessible at every organizational level while preserving security controls and auditability.

The rollout followed an agile, phased implementation strategy that prioritized high-impact applications such as fraud detection and customer analytics. Early phases concentrated on demonstrating the platform's benefits and building trust in the new framework through quantifiable business results. Each subsequent phase broadened the platform's scope and functionality, ultimately covering the entire enterprise data ecosystem while maintaining operational stability and security compliance. Effective data fabric deployments require structured strategies that balance technical complexity with the delivery of business value [6]. The phased approach allowed ongoing stakeholder involvement and iterative refinement. New requirements could be accommodated while project momentum and leadership support were preserved throughout the transformation.
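
The schema-on-read approach mentioned above can be illustrated with a small sketch. Raw events are landed unchanged as JSON lines, and a schema is applied only when a consumer reads them. Rows that fail type coercion are routed to a quarantine list rather than silently dropped. The field names and sample events are hypothetical. The simple type-coercion checks stand in for, and do not reproduce, the machine-learning quality detection that the platform reportedly used.

```python
import json
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Raw landing zone: events kept exactly as ingested (hypothetical sample data).
RAW_EVENTS = """\
{"txn_id": "T1", "amount": "120.50", "currency": "EUR", "ts": "2024-05-01T10:15:00"}
{"txn_id": "T2", "amount": "abc", "currency": "EUR", "ts": "2024-05-01T10:16:00"}
{"txn_id": "T3", "amount": "75", "currency": "usd", "ts": "2024-05-01T10:17:00", "channel": "ATM"}
{"txn_id": "T4", "currency": "GBP", "ts": "2024-05-01T10:18:00"}
"""

# A consumer-defined schema applied only at read time (field names are assumptions).
TXN_SCHEMA = {
    "txn_id": str,
    "amount": Decimal,
    "currency": lambda v: str(v).upper(),
    "ts": datetime.fromisoformat,
}


def read_with_schema(raw: str, schema: dict):
    """Project raw JSON lines onto `schema`; quarantine rows that fail coercion."""
    valid, quarantined = [], []
    for line_no, line in enumerate(raw.splitlines(), start=1):
        if not line.strip():
            continue
        try:
            record = json.loads(line)
            # Fields not in the schema (e.g. "channel") are simply ignored.
            row = {name: cast(record[name]) for name, cast in schema.items()}
        except (json.JSONDecodeError, KeyError, ValueError, InvalidOperation) as exc:
            quarantined.append((line_no, type(exc).__name__))
            continue
        valid.append(row)
    return valid, quarantined


rows, rejected = read_with_schema(RAW_EVENTS, TXN_SCHEMA)
print([r["txn_id"] for r in rows])     # ['T1', 'T3']
print([line for line, _ in rejected])  # [2, 4]
```

The raw events are never rewritten. A different consumer can therefore later apply a different schema, for example one that also reads `channel`, without re-ingesting the data. The trade-off is that every reader pays the parsing cost and must handle quality issues at read time.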

Table 2: Key Scalability Considerations for Enterprise Data Fabric Implementations Emphasizing Security Compliance and Operational Efficiency [6]

<table><tr><td>Scalability Component</td><td>Architecture Priority</td><td>Security Requirement</td><td>Operational Complexity</td></tr><tr><td>Horizontal Processing</td><td>Critical</td><td>High</td><td>High</td></tr><tr><td>Modular Design</td><td>High</td><td>Medium</td><td>Medium</td></tr><tr><td>Governance Framework</td><td>High</td><td>Critical</td><td>High</td></tr><tr><td>Metadata Management</td><td>Medium</td><td>High</td><td>Medium</td></tr><tr><td>User Access Controls</td><td>High</td><td>Critical</td><td>Low</td></tr></table>
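
Automated data classification, the metadata component in Table 2, can be combined with the hybrid private/public cloud strategy described in this section. In this sketch, columns are tagged by matching sample values against simple patterns, and any dataset containing a restricted tag is pinned to the private cloud. The tag names, regular expressions, threshold, and placement rule are hypothetical. Real classifiers are considerably more robust than these patterns.

```python
import re
from enum import Enum


class Placement(Enum):
    PRIVATE_CLOUD = "private"
    PUBLIC_CLOUD = "public"


# Illustrative detectors only; the patterns are assumptions, not a bank standard.
DETECTORS = {
    "PAN": re.compile(r"\d{13,19}"),                      # payment card number
    "IBAN": re.compile(r"[A-Z]{2}\d{2}[A-Z0-9]{11,30}"),  # bank account number
    "EMAIL": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
}
RESTRICTED_TAGS = {"PAN", "IBAN", "EMAIL"}


def classify_column(samples: list[str], threshold: float = 0.8) -> set[str]:
    """Tag a column when at least `threshold` of its non-empty samples match a detector."""
    values = [s.strip() for s in samples if s and s.strip()]
    if not values:
        return set()
    return {
        tag
        for tag, pattern in DETECTORS.items()
        if sum(1 for v in values if pattern.fullmatch(v)) / len(values) >= threshold
    }


def place_dataset(columns: dict[str, list[str]]):
    """Build a small catalog entry and pin restricted data to the private cloud."""
    catalog = {name: classify_column(samples) for name, samples in columns.items()}
    restricted = any(tags & RESTRICTED_TAGS for tags in catalog.values())
    return (Placement.PRIVATE_CLOUD if restricted else Placement.PUBLIC_CLOUD), catalog


card_txns = {"card_number": ["4111111111111111", "5500005555555559"],
             "merchant": ["Grocer Ltd", "Fuel Co"]}
branch_footfall = {"branch_code": ["B001", "B002"], "visitors": ["120", "98"]}

placement, catalog = place_dataset(card_txns)
print(placement.value, catalog)  # private {'card_number': {'PAN'}, 'merchant': set()}
print(place_dataset(branch_footfall)[0].value)  # public
```

Treating a whole dataset as restricted when any single column is restricted is a deliberately conservative rule. A finer-grained alternative would tokenize the restricted columns and allow the rest of the dataset into the public cloud.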

## IV. SECURITY FRAMEWORK: RBAC, DATA TOKENIZATION, AND PRIVACY CONTROLS

Security was the foremost priority throughout the transformation, given the sensitivity of financial information and the stringent regulations that govern banking. The bank established a security framework that exceeded industry benchmarks while retaining the flexibility that advanced analytics and AI require. Role-based access control (RBAC) formed the foundation of this framework. It provided fine-grained control of data access, aligned with user roles, responsibilities, and organizational needs [7]. RBAC systems are built around the principle of least privilege, which grants users only the minimum access required to perform their assigned roles. Frontegg notes that successful RBAC implementations reduce security incidents by preventing unauthorized access to critical resources while preserving operational efficiency [7]. The bank's RBAC framework added dynamic policy evaluation, which considered contextual factors such as user location, device characteristics, and access behavior when making authorization decisions. This allowed strict controls to be enforced while still accommodating legitimate business needs for data access.

The implementation automated user onboarding and offboarding, so that access privileges stayed current as employees changed roles or left the company. Regular access reviews and compliance reporting provided continuous oversight of permission assignments. At the same time, machine learning models continuously analyzed access behavior to detect possible security anomalies or policy violations. RBAC systems help organizations meet regulatory requirements by providing detailed audit trails and automated reporting that demonstrate compliance with security policies [7]. Modern RBAC frameworks support hierarchical role configurations that mirror organizational relationships and business operations, which enables effective permission management in complex enterprise settings. The system automated role assignment and modification, reducing administrative burden while keeping security consistent across the user lifecycle.

Data tokenization served as a further safeguard by replacing sensitive data elements with non-sensitive tokens throughout the analytical environment. The bank adopted format-preserving tokenization, which keeps the data usable for analysis while removing actual sensitive values from analytical datasets. Analysts and data scientists could therefore work with realistic datasets without accessing real customer information, which greatly reduced privacy risk while preserving analytical integrity [8]. Agboola et al. show that tokenization provides strong data security by linking sensitive values to randomly generated tokens. These tokens cannot be reversed without access to the token mapping, yet they preserve referential integrity across database relationships [8]. The tokenization system used strong cryptographic methods and key management practices that followed leading security benchmarks. The token mappings and keys were protected by multiple layers of defense, including hardware security modules and multifactor authentication for key access.

Privacy measures extended beyond tokenization to include data masking, anonymization, and pseudonymization, each applied where it suited the use case and compliance obligations. The system introduced automated privacy impact assessments that analyzed data usage patterns and identified potential privacy threats. These assessments supported proactive risk management and compliance verification across the enterprise data ecosystem. Database tokenization systems provide extensive privacy safeguards: even authorized users cannot recover the original sensitive information without the necessary permissions and cryptographic keys [8]. The system maintained thorough audit trails for every tokenization and detokenization operation, supporting regulatory compliance and forensic analysis. These privacy measures allowed the organization to preserve its analytical capabilities while complying with data protection laws and industry security standards throughout the transformation.
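
The following is a minimal sketch of vault-based, format-preserving tokenization, assuming card numbers are the sensitive field. Each value is replaced by a randomly generated token of the same length that keeps the last four digits. The same input always maps to the same token, so joins across tables still work. Detokenization is allowed only for callers that hold an explicit permission, and every operation is logged. The class name, the permission string, and the in-memory dictionaries are hypothetical. The dictionaries stand in for a hardened token vault, which per the text would sit behind hardware security modules.

```python
import secrets


class TokenVault:
    """In-memory stand-in for a token vault (hypothetical: no HSM, no persistence)."""

    DETOKENIZE_PERMISSION = "pii:detokenize"  # hypothetical permission name

    def __init__(self) -> None:
        self._token_by_value: dict[str, str] = {}
        self._value_by_token: dict[str, str] = {}
        self.audit_log: list[tuple[str, str, str]] = []  # (action, user, token)

    def _new_token(self, value: str) -> str:
        # Random digits replace all but the last four, so length and format are kept.
        while True:
            body = "".join(secrets.choice("0123456789") for _ in range(len(value) - 4))
            token = body + value[-4:]
            if (token != value and token not in self._value_by_token
                    and token not in self._token_by_value):
                return token

    def tokenize(self, value: str, user: str) -> str:
        if not (value.isascii() and value.isdigit()) or len(value) < 8:
            raise ValueError("expected a numeric identifier of at least 8 digits")
        token = self._token_by_value.get(value)
        if token is None:  # same input -> same token, preserving joins across tables
            token = self._new_token(value)
            self._token_by_value[value] = token
            self._value_by_token[token] = value
        self.audit_log.append(("tokenize", user, token))
        return token

    def detokenize(self, token: str, user: str, permissions: set[str]) -> str:
        if self.DETOKENIZE_PERMISSION not in permissions:
            self.audit_log.append(("detokenize_denied", user, token))
            raise PermissionError(f"{user} is not allowed to detokenize")
        self.audit_log.append(("detokenize", user, token))
        return self._value_by_token[token]


vault = TokenVault()
t1 = vault.tokenize("4111111111111111", user="etl_job")
t2 = vault.tokenize("4111111111111111", user="etl_job")
print(t1 == t2, len(t1), t1[-4:])  # True 16 1111
print(vault.detokenize(t1, user="fraud_analyst", permissions={"pii:detokenize"}))
```

The tokens are random rather than derived from the card number, so they cannot be reversed mathematically. Anyone who obtains only the tokenized tables learns nothing beyond the retained last four digits. The trade-off is that the vault mapping becomes a high-value asset that needs the layered protection the text describes.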

Table 3: RBAC Implementation Benefits and Complexity Factors [7]

<table><tr><td>RBAC Component</td><td>Security Effectiveness</td><td>Implementation Complexity</td><td>Compliance Support</td><td>Operational Efficiency</td></tr><tr><td>Least Privilege</td><td>Very High</td><td>Medium</td><td>High</td><td>High</td></tr><tr><td>Dynamic Policy Evaluation</td><td>High</td><td>High</td><td>High</td><td>Medium</td></tr><tr><td>Automated Provisioning</td><td>Medium</td><td>Medium</td><td>Very High</td><td>Very High</td></tr><tr><td>Hierarchical Roles</td><td>High</td><td>Low</td><td>Medium</td><td>High</td></tr><tr><td>Audit Trail Generation</td><td>Medium</td><td>Low</td><td>Very High</td><td>Medium</td></tr></table>
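
The RBAC components in Table 3 can be combined in a compact sketch. Roles inherit permissions from parent roles (hierarchical roles), and each role carries only the permissions it needs (least privilege). A contextual check on location and device refines the decision (dynamic policy evaluation), and every decision is appended to an audit log (audit trail generation). The role names, permission strings, allowed countries, and context rule are hypothetical, not the bank's actual policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Role:
    name: str
    permissions: set[str] = field(default_factory=set)
    parent: Optional["Role"] = None

    def effective_permissions(self) -> set[str]:
        """Union of this role's permissions and everything inherited from its parents."""
        perms, role = set(), self
        while role is not None:
            perms |= role.permissions
            role = role.parent
        return perms


@dataclass
class AccessContext:
    country: str
    managed_device: bool


ALLOWED_COUNTRIES = {"GB", "DE", "SG"}   # hypothetical operating jurisdictions
SENSITIVE_PREFIX = "customer_pii:"       # hypothetical naming convention
AUDIT_LOG: list[dict] = []


def is_allowed(user: str, role: Role, permission: str, ctx: AccessContext) -> bool:
    # Least privilege: deny unless the role (or an ancestor) grants the permission.
    granted = permission in role.effective_permissions()
    # Dynamic policy: sensitive data only from managed devices in allowed countries.
    if granted and permission.startswith(SENSITIVE_PREFIX):
        granted = ctx.managed_device and ctx.country in ALLOWED_COUNTRIES
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user, "role": role.name, "permission": permission,
        "country": ctx.country, "managed_device": ctx.managed_device,
        "granted": granted,
    })
    return granted


analyst = Role("analyst", {"transactions:read_tokenized"})
fraud_investigator = Role("fraud_investigator", {"customer_pii:read"}, parent=analyst)

office = AccessContext(country="GB", managed_device=True)
cafe = AccessContext(country="GB", managed_device=False)
print(is_allowed("amy", analyst, "customer_pii:read", office))                     # False
print(is_allowed("raj", fraud_investigator, "transactions:read_tokenized", cafe))  # True
print(is_allowed("raj", fraud_investigator, "customer_pii:read", office))          # True
print(is_allowed("raj", fraud_investigator, "customer_pii:read", cafe))            # False
```

The contextual check is kept separate from role membership. This mirrors the layering in the text, where dynamic policy evaluation refines rather than replaces RBAC. Denied requests are logged as well, which is what makes the audit trail useful for compliance reporting.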

## V. REAL-TIME ANALYTICS APPLICATIONS: FRAUD DETECTION AND CUSTOMER PERSONALIZATION

The new data architecture allowed the bank to deploy real-time analytics applications that substantially improved both risk management and customer experience. These applications demonstrated tangible returns on the investment and laid the groundwork for further data-driven banking services. Fraud detection was the most critical and immediately impactful use of the platform. Its fraud prevention mechanisms assessed transaction behavior in real time to identify potentially fraudulent activity [9]. AI techniques allow financial institutions to analyze large volumes of transaction data and recognize subtle patterns that conventional rule-based systems miss. Sharma notes that AI-driven fraud detection systems considerably reduce false positive rates and improve overall detection accuracy by using machine learning algorithms that adapt to new fraud strategies [9]. The bank's models adapted automatically to emerging fraud patterns and updated detection parameters without manual intervention, continuously improving threat detection.

The bank introduced fraud checks that evaluated transactions within milliseconds of initiation. These checks assessed multiple risk factors simultaneously and returned an authorization decision promptly. The platform incorporated additional signals such as device fingerprinting, geolocation analysis, and behavioral biometrics to improve detection precision across transaction channels. The machine learning models are continuously retrained on confirmed fraud cases and investigative findings, so their detection capability keeps pace with evolving fraud techniques [9]. Contemporary AI systems use deep neural networks and ensemble techniques to outperform traditional methods in fraud detection. The fraud prevention framework remained available during peak processing periods while handling enterprise-scale transaction volumes across digital banking platforms, ATM networks, and point-of-sale systems.

Customer personalization was another major application enabled by the new architecture. It used real-time recommendation systems that evaluated customer behavior, purchase history, and contextual factors to provide tailored product suggestions. The bank deployed personalization across every customer touchpoint, including mobile banking apps, websites, ATMs, and branches, to ensure a consistent experience regardless of channel [10]. Bhatt shows that AI-driven personalization engines allow financial institutions to achieve much higher conversion rates. These engines provide targeted recommendations based on thorough analysis of customer behavior and on predictive modeling [10]. Advanced segmentation grouped customers by spending habits, life events, and financial goals, enabling marketing initiatives that achieved significantly better engagement than conventional mass marketing. Real-time analytics examined customer interactions to identify cross-selling and upselling opportunities while ensuring that suggestions matched each customer's needs and preferences.

### Comprehensive AI Integration and Operational Improvement

Real-time analytics capabilities were extended beyond customer-facing applications to predictive maintenance, adaptive pricing models, and intelligent customer service routing. The analytics platform supported company-wide optimization through automated decision-making that improved operational efficiency across business functions [10]. AI-driven predictive analytics enabled proactive maintenance scheduling, efficient resource allocation, and better customer service delivery through intelligent automation and data-derived insights. FinTech companies that adopt comprehensive AI solutions gain competitive advantages through superior customer experiences, lower operational costs, and stronger risk management, which together support sustainable business growth.
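
To illustrate the real-time fraud checks discussed in this section, the sketch below shows how several risk signals can be combined into one authorization decision per transaction. It uses three signals: a velocity check, an unfamiliar-device flag, and an impossible-travel test based on the great-circle distance from the card's previous transaction. The point weights, thresholds, and field names are hypothetical. The fixed scoring rule is a deliberately simple stand-in for the trained machine-learning models the text describes, which would learn such weights from confirmed fraud cases.

```python
import math
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Txn:
    card: str
    ts: datetime
    lat: float
    lon: float
    device_id: str


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points on Earth, in kilometres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * 6371.0 * math.asin(min(1.0, math.sqrt(a)))


class FraudScorer:
    """Per-card state in memory; the point weights and thresholds are illustrative only."""

    def __init__(self, window=timedelta(minutes=10), max_txns_in_window=5,
                 max_speed_kmh=900.0, block_at=60):
        self.window = window
        self.max_txns = max_txns_in_window
        self.max_speed = max_speed_kmh
        self.block_at = block_at
        self.recent: dict[str, deque] = {}      # card -> timestamps inside the window
        self.last: dict[str, Txn] = {}          # card -> previous transaction
        self.devices: dict[str, set[str]] = {}  # card -> devices seen before

    def score(self, txn: Txn) -> tuple[int, bool]:
        """Return (risk points out of 100, block?) and update per-card state."""
        recent = self.recent.setdefault(txn.card, deque())
        while recent and txn.ts - recent[0] > self.window:
            recent.popleft()

        points = 0
        if len(recent) >= self.max_txns:                      # velocity check
            points += 40
        seen = self.devices.setdefault(txn.card, set())
        if seen and txn.device_id not in seen:                # unfamiliar device
            points += 30
        prev = self.last.get(txn.card)
        if prev is not None:                                  # impossible travel
            hours = max((txn.ts - prev.ts).total_seconds() / 3600, 1e-6)
            if haversine_km(prev.lat, prev.lon, txn.lat, txn.lon) / hours > self.max_speed:
                points += 50

        recent.append(txn.ts)
        seen.add(txn.device_id)
        self.last[txn.card] = txn
        points = min(points, 100)
        return points, points >= self.block_at


scorer = FraudScorer()
t0 = datetime(2024, 5, 1, 12, 0)
london = Txn("card-1", t0, 51.5074, -0.1278, "phone-A")
singapore = Txn("card-1", t0 + timedelta(minutes=30), 1.3521, 103.8198, "laptop-X")
print(scorer.score(london))     # (0, False)
print(scorer.score(singapore))  # (80, True)
```

Each call does only a small, bounded amount of work per transaction, which is what makes this style of check compatible with millisecond-scale authorization. In a production system, the per-card state would live in a shared low-latency store rather than in process memory.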

## VI. CONCLUSION

The overhaul carried out by this international retail bank shows the transformative potential of combining advanced artificial intelligence technologies with reliable, adaptable data fabric architectures in modern financial services. The migration from legacy systems to cloud-based analytics platforms represents more than a technology upgrade. It marks a shift toward data-driven operating models that support real-time decision-making and better customer experiences. Advanced security frameworks, including role-based access control and tokenization, allow financial institutions to comply with regulations while retaining the operational flexibility that innovation requires. Real-time analytics for fraud detection and customer personalization demonstrate the concrete advantages of deep data integration, with measurable improvements in customer engagement and risk management effectiveness. The phased implementation approach offers a model for comparable organizations that seek to modernize their technology while limiting operational disruption. The cultural and organizational changes that accompanied the technical work underline the importance of effective change management in securing lasting competitive advantage. The case illustrates that successful digital transformation requires committed leadership, close attention to security, and a methodical strategy that balances technical complexity with the delivery of business value. Financial organizations pursuing similar transformations can use these insights to build strategies for continued success in a changing digital banking environment, while preserving the trust of their customers.


References


Pamela Jayakumar (2024). Transforming Software Deployment: The Impact of AI in Software Release.

G Jay (2025). Unlocking the Power of Data & AI: A Leadership Guide to Transformation.

Theresa Campbell; Alia Shkurdoda, Marcin Dobosz (2023). Challenges of legacy system integration: An in-depth analysis.

Matthieu Jonglez (2024). How Embracing Data Fabric Can Enhance the Value of AI Initiatives.

Atlan (2023). Implementing A Data Fabric: A Scalable And Secure Solution For Maximizing The Value Of Your Data.

Frontegg (2022). What Is Role-Based Access Control (RBAC)? A Complete Guide.

Rihanat Bola Agboola (2022). Database security framework design using tokenization.

Vandana Sharma (2022). Artificial Intelligence in Fraud Detection and Personalization: Transforming the Landscape of Security and User Experience.

Shardul Bhatt (2025). Empowering FinTech with AI: Real-Time Fraud Detection, Predictive Analytics, and Personalized Customer Experiences.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

Vineel Bala. 2026. “How a Global Retail Bank Transformed Decision-Making with Secure AI Analytics”. Global Journal of Computer Science and Technology - E: Network, Web & Security GJCST-E Volume 25 (GJCST Volume 25 Issue E1): .
