In the main article of 2021, we showed that there is no “relativistic time acceleration” in GPS satellite orbit, and that the frequency of signals increases due to the acceleration of photons in the Earth’s gravitational field. This article shows that the so-called “relativistic correction” does not work in principle, even if we imagine that the frequency of atomic clocks increases in orbit, as relativists claim.

Funding

No external funding was declared for this work.

Conflict of Interest

The authors declare no conflict of interest.

Ethical Approval

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

Sokolov Vitali. 2026. “Is the Atomic Clock Accelerating in Satellite Orbit?”. Global Journal of Science Frontier Research - A: Physics & Space Science GJSFR-A Volume 25 (GJSFR Volume 25 Issue A3): .

## I. INTRODUCTION

Clocks measure time using a variety of phenomena, from the oscillations of a pendulum or a quartz crystal to the radiation of atoms. In the 17th century, Römer even used the eclipse periods of one of Jupiter's satellites as a very accurate clock in his experiments to measure the speed of light. What all these clocks have in common is that they rely on some periodic process; they differ in the length of the period (from 42 and a half hours in Römer's "clock" to nanoseconds in atomic clocks) and in its stability (the instability of the atomic clocks of GPS satellites is one second per million years).

The change in frequency detected during the first launches of GPS satellites was declared by relativists to be confirmation of "time dilation" in moving systems and in the gravitational field:

Clocks in orbit run faster (since "time flows faster there"), and therefore, if they are not slowed down before launch, they will run ahead by 38 microseconds per day, that is, GPS will not be able to work. And so a "relativistic correction" was introduced into the satellite clocks: before launching into orbit, their frequency was reduced by $4.57\mathrm{Hz}$, and the clocks began to emit a frequency of 10,229,999,995.43 Hz instead of 10,230,000,000 Hz.

After the launch into orbit, a signal with a frequency of 10,230,000,000 Hz began to arrive on Earth. Why? Because in orbit the clock "accelerated" and began to "tick" faster: $10{,}229{,}999{,}995.43 + 4.57 = 10{,}230{,}000{,}000$ Hz.

The relativists calmed down and continue to claim that the relativistic correction solved the problem and without this correction the GPS system would not be able to work.

## II. WHAT'S REALLY GOING ON

Before launching into orbit, the clock's frequency was reduced by 4.57 Hz, and it began to emit a frequency of 10,229,999,995.43 Hz instead of 10,230,000,000 Hz. Was its synchronization disrupted?

Let's first answer the question: which clocks run faster, those that operate at a frequency of, for example, $1,000 \, \text{Hz}$, or those that operate at a frequency of $1,100 \, \text{Hz}$?

How will relativists answer this question?

The correct answer, of course, is this:

a clock with a frequency of $1,000\mathrm{Hz}$ and a clock with a frequency of $1,100\mathrm{Hz}$ run at the same speed and show the same time.

Let's look at a simple example.

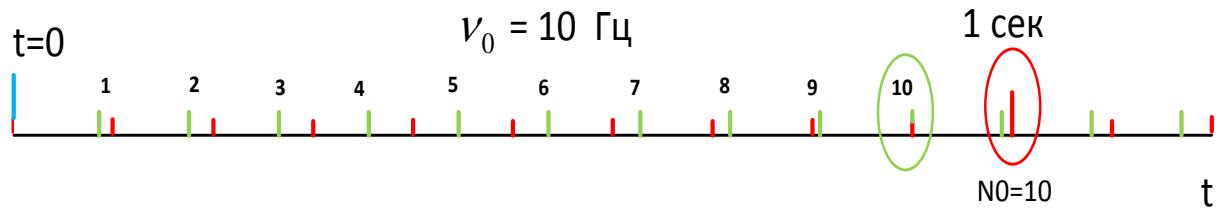

In Fig. 1, the clock operates at a frequency of $10\mathrm{Hz}$. This means that the generator produces a pulse every 0.1 sec (period $t_0 = 0.1$ sec), and the counter provided in the circuit, set to the number $N = 10$, shows 1 sec, 2 sec, etc. after every 10 pulses.

Obviously, the clock reading depends both on the frequency of the pulse generator and on the number of pulses the counter is set to.

Fig. 1

If you change (for example, decrease) only the period (pulses are marked with numbers 1, 2, 3...) but leave the counter set to $N = 10$, the pulses will come more often and every tenth pulse will advance the time reading earlier, that is, the clock will run faster (Fig. 1).

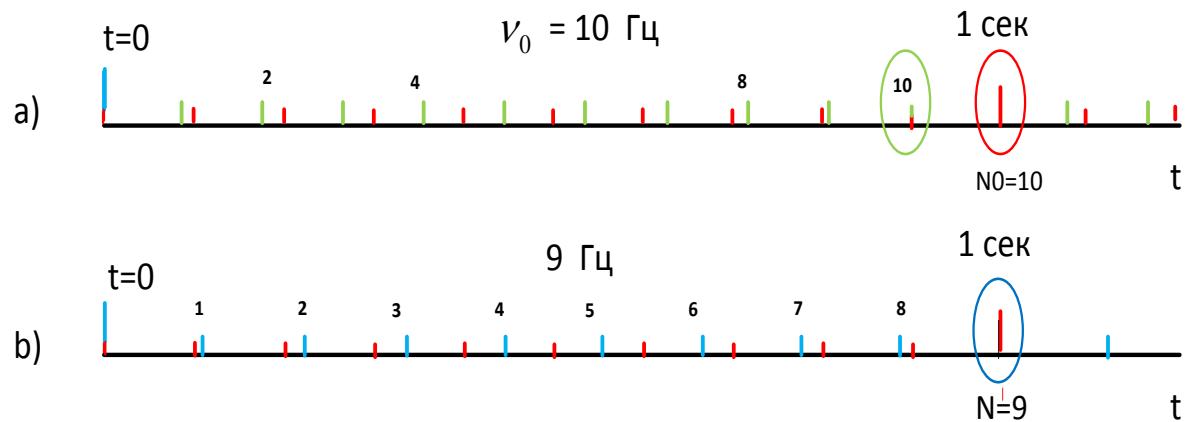

But if you change not only the pulse period but also the counter setting accordingly (from $N = 10$ to $N = 11$), the second pulses will come out at the same moments as before the changes, and the clock speed will not change.

Fig. 2

Changing the frequency of an atomic clock - provided that the pulse counter is adjusted accordingly - does not affect the clock speed and the clock remains synchronous with other clocks.
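The pulse-counter picture of Fig. 1 and Fig. 2 can be tried out with a few lines of code. This is only a minimal sketch: the function name `displayed_seconds` and the 11 Hz value (a period shortened from 1/10 s to 1/11 s) are illustrative assumptions, not taken from the figures themselves.

```python
def displayed_seconds(elapsed_s: int, freq_hz: int, pulses_per_tick: int) -> int:
    """Seconds shown by a pulse-counting clock after elapsed_s true seconds.

    freq_hz         -- pulses produced by the generator each second
    pulses_per_tick -- counter setting N: pulses needed to advance the display by 1 s
    """
    pulses = elapsed_s * freq_hz          # pulses generated so far
    return pulses // pulses_per_tick      # completed one-second ticks


# Original clock: 10 Hz generator, counter set to N = 10
print(displayed_seconds(100, 10, 10))   # 100

# Only the period is shortened (11 Hz), counter left at N = 10: the clock runs fast
print(displayed_seconds(100, 11, 10))   # 110

# Period shortened and counter changed to N = 11: same reading as the original clock
print(displayed_seconds(100, 11, 11))   # 100
```

The third case is the one the argument relies on: the reading depends on the ratio of generator frequency to counter setting, not on the frequency alone.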

The GPS satellite clocks were "relativistically corrected" before they were launched into orbit: their frequency was changed from 10,230,000,000 Hz to 10,229,999,995.43 Hz. But this was done under an obviously necessary condition: after the correction was introduced, the clocks had to remain synchronous with other clocks on Earth operating at a frequency of 10,230 MHz. Therefore, at the same time as the frequency was changed, the clock settings were also changed.

And after the clock was launched into orbit, the signal began to arrive on the ground not with the frequency of 10,229,999,995.43 Hz, to which the clock was set, but with a frequency increased to 10,230,000,000 Hz.

But since, according to relativists, the speed and frequency of photons cannot change on the way from the satellite to the Earth, they concluded that the observed change in signal frequency can only be explained as follows: in orbit, since "time flows faster" there, the clock runs at an increased frequency, and because of this increase the atomic clock runs ahead by 45 μsec per day. It is a beautiful but erroneous explanation.

Does the speed of a clock depend only on the fact that clocks in orbit "tick" faster, as relativists assume? Of course not. And here's why.

Before launching into orbit, the clock is retuned from a frequency of 10,230,000,000 Hz to a frequency of 10,229,999,995.43 Hz, and at the same time, in order not to disrupt the synchronicity of the clock with the control center clock, the counter setting is changed: by analogy with Fig. 2, b, instead of $N = 10$ the clock is set to $N = 9$. The atomic clock circuit has a binary counter, at the output of which the frequency is reduced to 1 hertz, that is, to a one-second tick.

And after these changes, relativists decided that their "correction" works: a clock with a reduced frequency is launched into orbit, but due to the "acceleration of time" in orbit it "ticks" faster; instead of 10,229,999,995.43 Hz, its frequency turns out to be equal to 10,230,000,000 Hz, and the signal comes to Earth with this frequency.

But, as shown above, in order for a clock with a changed frequency to run at the same speed after being put into orbit as before the launch, i.e. to remain synchronous with the control center clock, the counter setting must be changed in addition to the frequency. The relativistic "acceleration of time" in orbit, however, cannot change the setting in any way; it remains the same as the engineers set it before the launch (as in Fig. 2, b, $N = 9$).

Even if, as relativists mistakenly assume, the clock does "tick" faster in orbit, the counter setting on the satellite does not change and remains the same as before the launch, so at a frequency of 10,230,000,000 Hz the clock speed cannot be equal to the speed to which it was set before the launch.
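The size of the mismatch described here can be estimated numerically. The sketch below works purely under this article's premises: the counter is taken to be calibrated for the pre-launch frequency, and the oscillator is then imagined to run at 10,230,000,000 Hz in orbit. The helper name `daily_gain_us` is hypothetical.

```python
SECONDS_PER_DAY = 86_400
F_NOMINAL = 10_230_000_000.0    # Hz, nominal frequency as quoted in this article
F_PRESET = 10_229_999_995.43    # Hz, pre-launch setting as quoted in this article


def daily_gain_us(actual_hz: float, counter_hz: float) -> float:
    """Microseconds gained per day by a clock whose counter expects counter_hz
    pulses per second while its generator actually delivers actual_hz."""
    return (actual_hz / counter_hz - 1.0) * SECONDS_PER_DAY * 1e6


# Counter left at the pre-launch setting, oscillator imagined at the nominal rate
print(round(daily_gain_us(F_NOMINAL, F_PRESET), 1))   # about 38.6

# Counter and oscillator matched: no drift
print(daily_gain_us(F_PRESET, F_PRESET))              # 0.0
```

Under these assumptions the reading drifts by roughly 38.6 microseconds per day, which is the same order as the daily figure quoted at the start of the article.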

## III. CONCLUSION

The relativists' assertion that atomic clocks in orbit change their speed and signal frequency is erroneous. The introduction of the so-called "relativistic correction" only led to a change in the GPS satellite signal frequency to a more convenient value of 10,230,000,000 Hz, but has nothing to do with ensuring the operability of the GPS navigation system.

Even if we imagine the unrealistic situation in which, after launching into orbit, the frequency of the atomic clock changes, as relativists claim, and the synchronicity of the clock is therefore disrupted, the "relativistic correction" cannot in principle restore the synchronicity of the clock. The only correct explanation is the one we gave in the work "Is the atomic clock accelerating in satellite orbit?":

- After launch into orbit, the speed of the atomic clock does not change in any way,

- The clock emits a signal at a frequency of 10 230 MHz, to which it was tuned before launch,

- Photons, while moving from the satellite to the Earth in the gravitational field, increase their speed, and due to this increase their frequency rises proportionally, in accordance with the Doppler effect.

Before launch, the atomic clock is tuned to a frequency of 10,229,999,995.43 Hz so that a signal of the more convenient frequency of 10,230 MHz reaches receivers on Earth. That is, the correction has nothing to do with the theory of relativity and cannot be considered as confirmation of this erroneous theory.

### Article 2021

Is the atomic clock accelerating in satellite orbit?

It is generally accepted that relativistic "time dilation" is confirmed with high accuracy in the GPS system. Professor Neil Ashby was one of the first to state this: "The GPS system, in fact, is the embodiment of Einstein's views on space and time and cannot function properly without taking into account fundamental relativistic principles.... The basic principle by which GPS navigation works is a simple application of the second postulate of the special theory of relativity, namely, the constancy of the speed of light" [1].

Numerous authors, referring to his works, repeat:

- Because GPS satellites move at a speed of $3.874 \, \text{km/s}$, the clock of a GPS satellite runs slower than Earth's clock by a fraction of $8.349 \times 10^{-11}$ and therefore falls 7214 nanoseconds a day behind,

- Due to a decrease in the gravitational potential, GPS satellite clocks run faster than Earth's by a fraction of $5.307 \times 10^{-10}$ and are therefore ahead of them by 45,850 nanoseconds per day,

- In total, these two effects give 45850 - 7214 = 38636 ns/day, i.e. the GPS satellite clock runs ahead by 38.636 microseconds every 24 hours, and in positioning this should result in an error of $11.4 \mathrm{~km}$.
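The per-day figures in this list follow from multiplying each fractional rate by the number of nanoseconds in a day. The short check below assumes the gravitational rate is $5.307 \times 10^{-10}$, the value that reproduces the quoted 45,850 ns; the helper name `ns_per_day` is illustrative.

```python
SECONDS_PER_DAY = 86_400


def ns_per_day(fractional_rate: float) -> float:
    """Nanoseconds accumulated over one day by a clock whose rate differs
    from the reference by the given fraction."""
    return fractional_rate * SECONDS_PER_DAY * 1e9


velocity_lag = ns_per_day(8.349e-11)    # lag attributed to orbital speed
gravity_lead = ns_per_day(5.307e-10)    # lead attributed to gravitational potential
net = gravity_lead - velocity_lag       # net lead per day

print(round(velocity_lag), round(gravity_lead), round(net))
```

The rounded results land within a few nanoseconds of the 7214, 45,850 and 38,636 ns quoted above; the small differences come from rounding in the quoted rates.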

And they argue that without taking into account the postulate of invariance, the clocks of the satellites cannot be synchronized, that the GPS receiver determines its coordinates only as a result of comparing the time on its clock and the time indicated in the satellite signal, and the Sagnac effect is generally one of the most confusing relativistic effects that can lead to errors of hundreds of nanoseconds. And as evidence of "slowing down or speeding up time" in satellite clocks, changes in signal frequencies after the launch of GPS satellites are considered [3].

The Ives-Stilwell experiment (1938) and the Pound-Rebka experiment (1960) are considered the main confirmations of the relativistic "time dilation". The conclusion about time dilation in these experiments was made only because the wave theory of light, in principle, did not allow any changes in frequencies if the source was stationary relative to the receiver, especially since the speed of light - in accordance with the postulate of invariance - was assumed to be constant in magnitude.

Some disagreed with the strange conclusion about time dilation, but no other explanation for the frequency change was found. So, L. Brillouin, who recognized the postulate of the invariance of the speed of light, theoretically proved that the local time of the atomic clock in the Pound-Rebka experiment practically does not depend on such a small change in the gravitational potential, but he could not explain why the frequency changes: "we do not know how to explain it" [2].

In our works [4-7] it is shown that the wave theory of light, adopted as early as the 17th century, could not explain these experiments in principle, but that they are simply explained if light is considered not as waves in ether, but as a stream of photons, each of which has its own frequency and, contrary to the postulate of invariance, can move at different speeds relative to the receiver.

In the Ives-Stilwell experiment, in the direction perpendicular to the direction of motion of the source, only those photons go that are emitted by the source a little back and, after the vector addition of the velocities, change direction and move towards the receiver with an initial velocity less than C. Due to a decrease in velocity, the frequency of photons decreases and this decrease in frequency is explained not by the mystical "time dilation" in a moving source, but only by a decrease in the speed of the photons.

Note: Ives was a staunch antirelativist (as was Louis Essen, the inventor of the cesium clock) and refused to admit that he had rendered decisive support to the SRT [9].

In the Pound-Rebka experiment, it was found that a Mössbauer receiver receives a signal of frequency $\nu_{0}$ when the source is located next to it, but some kind of mismatch occurs and reception becomes impossible when the receiver is located at an altitude of $22\mathrm{m}$.

Relativists explain this mismatch as follows.

Since, in accordance with the wave theory, the frequency of radiation on the path from a stationary source to a receiver cannot change, only the receiver can change with a change in altitude: in accordance with the theory of relativity, at altitude "time flows faster", the receiver becomes more high-frequency and therefore can receive not $\nu_{0}$, but a higher frequency. And the experiment showed that the receiver really turns out to be matched and receives a signal if it is moving toward the source at a certain speed, since in this case, in accordance with the Doppler effect, the receiver sees a frequency greater than $\nu_{0}$.

That is, relativists assert: when passing to a weaker gravitational field, nothing changes in the radiation, but the receiver changes. And they explain the change in the receiver by the fact that at a higher altitude "time flows faster" and that is why it becomes more high-frequency.

We explain the Pound-Rebka experiment as follows:

- The source emits photons of frequency $\nu_0$,

- In emptiness, photons move upward with an initial speed $C$,

- Under the influence of the gravitational field, the speed of their movement decreases,

- Photons arrive at the receiver at a speed less than C,

- Therefore, the receiver sees a reduced frequency and cannot receive these photons,

- Signal reception becomes possible if the receiver moves towards the photons at a certain speed, since in this case the photons of frequency $\nu_0$ meet the receiver at a speed $C$ and the receiver sees the frequency $\nu_0$.

The situation does not fundamentally change because photons actually move not in emptiness, but in air. Photons are re-emitted by air atoms; between re-emissions they move in emptiness with a constant frequency and, under the influence of gravity, lose speed. With each re-emission, the frequency of the photons decreases, and their speed relative to the re-emitting atom at the moment of re-emission becomes equal to $C$. The resulting decrease in the frequency of the photons is the same as if they had travelled from the source to the receiver in emptiness.

Thus, signal reception in the Pound-Rebka experiment turns out to be impossible not because at altitude "time flows faster" and the receiver becomes more high-frequency, but because the frequency of the electromagnetic radiation decreases: the speed of photons decreases as they move away from the gravitating mass, and the receiver, instead of radiation of frequency $\nu_0$, sees radiation of a lower frequency [5].
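To give a sense of scale, the fractional frequency change implied by this explanation can be estimated for the 22 m height quoted above. The sketch assumes a constant deceleration of $9.8 \mathrm{~m/s^2}$ acting for the flight time $h/C$; these simplifications and the function names are assumptions made for illustration, not a calculation taken from [5].

```python
C = 299_792_458.0    # m/s, speed of light
G_SURFACE = 9.8      # m/s^2, surface value used elsewhere in this article
HEIGHT = 22.0        # m, height quoted for the Pound-Rebka setup


def speed_loss(height_m: float, g: float = G_SURFACE, c: float = C) -> float:
    """Speed lost over height_m, assuming constant deceleration g
    acting for a flight time of approximately height_m / c."""
    return g * height_m / c


def fractional_shift(height_m: float, g: float = G_SURFACE, c: float = C) -> float:
    """Relative frequency decrease, taken as proportional to the relative speed loss."""
    return speed_loss(height_m, g, c) / c


print(speed_loss(HEIGHT))         # on the order of 7e-7 m/s
print(fractional_shift(HEIGHT))   # on the order of 2.4e-15
```

The relative change is about $2.4 \times 10^{-15}$, which shows how small an effect the experiment had to resolve.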

#### In the GPS system

The GPS system, where satellites move fast enough and the gravitational field is much less than on the surface of the Earth, is considered by relativists as a new confirmation of the effects of "time dilation". Because the frequency of signals received from satellites depends on the speed of the satellite and on the altitude of the orbit, relativists conclude:

- A lower-frequency signal comes to the receiver on the ground because the atomic clock of the GPS satellite slows down due to its orbital speed,

- A higher-frequency signal comes to the receiver on the ground because the atomic clock of the GPS satellite speeds up due to a decrease in the gravitational potential,

- As a result, every 24 hours the satellite clock runs ahead by 38.636 microseconds.

The frequency of signals received on the ground actually depends on the speed of movement and the altitude of the satellite's orbit, but, as we showed in [5], these changes are explained on the basis of purely classical concepts and are not related to the myths of the theory of relativity about "time dilation" or "gravitational acceleration of time" in the GPS satellite clock.

The speed of the satellite and the height of its orbit have different effects on the frequency of the electromagnetic signal, and therefore we consider these phenomena separately.

#### Because of the speed of the satellite

If no relativistic correction is entered, the atomic clock of the GPS satellite runs at the same speed as the clock on Earth. There is no velocity-related "time dilation" in the GPS satellite clock, and it emits a signal at a frequency of 10.23 GHz, to which it was tuned before launching into orbit [5]. But a lower-frequency signal arrives at the control center on the ground. The frequency decreases not because clocks run slower in orbit, but because the transverse Doppler effect occurs.

The satellite is moving at a speed of $3.874 \, \text{km/s}$. Due to the transverse Doppler effect, the frequency decreases in relative terms by 8.349257e-11, and a signal with a frequency reduced by 0.854 Hz arrives at the control center: 10,230,000,000 - 0.854 = 10,229,999,999.146 Hz.

This change in frequency occurs because at the moment when the photons leave the transmitter, their speed $C$ is vectorially summed with the satellite speed of $3.874 \, \text{km/s}$ and turns out to be $0.025 \, \text{m/s}$ less than $C$. The signal frequency therefore decreases proportionally, by $0.854 \, \text{Hz}$.

That is, just as in the Ives-Stilwell experiment, the decrease in frequency is explained not by the mystical "time dilation", but by the decrease in the speed of the photons at the moment they leave the moving source.
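The figures in this subsection can be reproduced with a right-triangle vector sum. The sketch below assumes that the emitted speed $C$ is the hypotenuse and the satellite speed is one leg, so that the speed towards the receiver is $\sqrt{C^2 - v^2}$; this geometry is our reading of the text, and the function name is illustrative.

```python
import math

C = 299_792_458.0    # m/s, speed of light
V_SAT = 3_874.0      # m/s, satellite speed quoted in this article
F0 = 10.23e9         # Hz, nominal frequency as quoted in this article


def speed_deficit(v: float, c: float = C) -> float:
    """c - sqrt(c^2 - v^2), written in a numerically stable form."""
    return v * v / (c + math.sqrt(c * c - v * v))


deficit = speed_deficit(V_SAT)      # m/s below C
relative = deficit / C              # fractional decrease
delta_f = F0 * relative             # Hz

print(round(deficit, 4))            # about 0.025 m/s
print(relative)                     # about 8.349e-11
print(round(delta_f, 3))            # about 0.854 Hz
```

With these inputs the deficit comes out near 0.025 m/s and the frequency change near 0.854 Hz, in line with the values quoted above.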

#### Because of the change in gravity

If no relativistic correction is entered, the atomic clock of the GPS satellite runs at the same speed as the clock on Earth. There is no gravitational "time acceleration" in the GPS satellite clock, and it emits a signal at a frequency of 10.23 GHz, to which it was tuned before launching into orbit [3,5]. But a higher-frequency signal of 10,230,000,005.5189 Hz arrives on the ground. The frequency increases not because clocks run faster in orbit, but because photons in the gravitational field move with acceleration and gain speed.

The satellite moves at an altitude of $20,184 \mathrm{~km}$, where the gravitational field is almost 20 times weaker than on the Earth's surface, and therefore the photons move with an acceleration that grows from an initial value of $0.565 \mathrm{~m} / \mathrm{s}^{2}$ to $9.8 \mathrm{~m} / \mathrm{s}^{2}$, as shown in Fig. 1 of our work [5]. If we imagine that the signal travels in absolute emptiness, its speed increases by $0.161734 \mathrm{~m} / \mathrm{s}$ and turns out to be $299792458.1617 \mathrm{~m} / \mathrm{s}$, which is more than $\mathrm{C} = 299792458 \mathrm{~m} / \mathrm{s}$.

An increase in the speed of the photons by $0.161734 \mathrm{~m/s}$ (in relative terms, 5.3948e-10) leads to a proportional increase in frequency by $5.5189 \mathrm{~Hz}$. Therefore, if no correction is entered in the atomic clock before launching into orbit, the satellite emits a frequency of 10.23 GHz, but a signal of an increased frequency of 10,230,000,005.5189 Hz arrives at the receiver on Earth.

Because the signal travels not in a void but in a rarefied atmosphere, the time it takes for the signal to arrive from the satellite increases, but the frequency still increases by the same $5.5189\mathrm{Hz}$.
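The proportionality used in this subsection is easy to check numerically. The sketch below takes the speed gain of 0.161734 m/s as given (it is not re-derived here) and, as a separate assumption, uses a mean Earth radius of 6371 km with inverse-square scaling to estimate the acceleration at orbital altitude.

```python
C = 299_792_458.0        # m/s, speed of light
F0 = 10.23e9             # Hz, nominal frequency as quoted in this article
DELTA_V = 0.161734       # m/s, speed gain quoted in this article (taken as given)

EARTH_RADIUS_KM = 6_371.0    # assumption: mean Earth radius
ALTITUDE_KM = 20_184.0       # orbit altitude quoted in this article
G_SURFACE = 9.8              # m/s^2

relative = DELTA_V / C           # fractional speed (and frequency) increase
delta_f = F0 * relative          # Hz

# Inverse-square scaling of the surface acceleration to orbital altitude
g_orbit = G_SURFACE * (EARTH_RADIUS_KM / (EARTH_RADIUS_KM + ALTITUDE_KM)) ** 2

print(relative)              # about 5.3949e-10
print(round(delta_f, 4))     # about 5.519 Hz
print(round(g_orbit, 3))     # about 0.564 m/s^2
```

The relative increase and the 5.5189 Hz shift agree with the quoted values to the digits shown, and the estimated acceleration at altitude is close to the quoted 0.565 m/s².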

## IV. RESULTING FREQUENCY CHANGE

Due to the speed of the satellite's orbital motion, the signal frequency decreases by 8.349e-11, and due to a change in the gravitational potential, it rises by 5.3948e-10. The resulting change in frequency in relative units is determined by the difference

$$
\begin{array}{l} 5.3948 \times 10^{-10} - 8.349 \times 10^{-11} = 4.5599 \times 10^{-10}, \text{ which is equal to} \\ 10{,}230{,}000{,}000 \times 4.5599 \times 10^{-10} = 4.6647777 \ \mathrm{Hz} \end{array}
$$

Thus, if no correction is introduced in the satellite clock, instead of the frequency of 10.23 GHz, a frequency of 10,230,000,004.66 Hz, higher by 4.66 Hz, arrives from the satellite at the control center; similarly, the satellite receives a frequency of 10,229,999,995.33 Hz, lower by 4.66 Hz.

Before launching into orbit, a correction is introduced into the satellite atomic clock: it is tuned to a lower frequency, 10,230,000,000 - 4.66477 = 10,229,999,995.33 Hz.
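The arithmetic of this section can be verified directly from the two relative changes quoted in it. The variable names below are illustrative; the input values are the ones given in the text.

```python
F0 = 10_230_000_000.0      # Hz, nominal frequency as quoted in this article
REL_GRAVITY = 5.3948e-10   # relative increase attributed to gravity
REL_VELOCITY = 8.349e-11   # relative decrease attributed to orbital speed

net_relative = REL_GRAVITY - REL_VELOCITY   # net relative change
delta_f = F0 * net_relative                 # net change in Hz
preset = F0 - delta_f                       # pre-launch setting in Hz

print(net_relative)          # about 4.5599e-10
print(round(delta_f, 5))     # about 4.66478 Hz
print(round(preset, 2))      # about 10229999995.34 Hz
```

The net relative change works out to 4.5599e-10 and the frequency offset to about 4.665 Hz.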


The GPS satellite clock operates at a frequency of 10,229,999,995.33 Hz, and the satellite emits its signal at this frequency. During the 0.067 seconds that the signal travels from the satellite to the receiver on Earth, the signal frequency increases by 4.66 Hz, and all receivers on the ground receive the frequency of 10.23 GHz.

Accordingly, the control center sends a signal to the satellite at a frequency of 10.23 GHz; while the signal travels to the satellite, its frequency decreases to 10,229,999,995.33 Hz, and the satellite receives this signal.

This decrease in the satellite clock frequency by $4.66\mathrm{Hz}$ is called the relativistic correction, but, as we understand, this correction has nothing to do with the "time dilation" fantasies and is introduced into the GPS satellite clock only for the convenience of communication.

Relativists argue that without this correction the GPS satellite clock runs ahead by 38.636 microseconds every 24 hours, and they emphasize that in the positioning system this should lead to a huge error of $11.4\mathrm{km}$.

But what does "the clock goes forward" mean? In relation to the Earth clock?

First, if all satellite clocks are strictly synchronized and run ahead equally, the coordinates of the receiver are still accurately determined by the difference in the arrival times of signals from different satellites (the same time differences are obtained from the time stamps in the satellites' messages), that is, the positioning accuracy does not decrease. So where are these 11.4 km?
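The first point can be illustrated with a toy calculation. The sketch assumes that every satellite clock is ahead by exactly the same offset and looks only at differences between measured delays; the ranges are hypothetical, and the code does not model how a real receiver solves for position.

```python
C = 299_792_458.0  # m/s, speed of light


def measured_delays(distances_m, common_offset_s):
    """Arrival time minus the time stamp carried in each satellite message.
    Every satellite clock is assumed to be ahead by the same common_offset_s."""
    return [d / C - common_offset_s for d in distances_m]


def differences_to_first(values):
    """Delay of each satellite relative to the first one."""
    first = values[0]
    return [v - first for v in values[1:]]


# Hypothetical receiver-to-satellite ranges in metres
distances = [20_184e3, 21_500e3, 23_000e3, 24_750e3]

no_offset = differences_to_first(measured_delays(distances, 0.0))
with_offset = differences_to_first(measured_delays(distances, 38.636e-6))

for a, b in zip(no_offset, with_offset):
    print(f"{a:.9f}  {b:.9f}")   # the two columns agree
```

In this simplified setting a common offset cancels in every difference, which is the situation the paragraph describes.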

Secondly, if a clock lags or runs ahead, this is easy to check by comparing its readings with other clocks. The GPS system, with its most accurate atomic clocks, offers such an opportunity: every 12 hours the satellite passes over the same control point, and one can ask it what time its clock shows and compare that with the exact clock. If the correction is not introduced before the launch, there will be no difference, and this will prove the absence of "relativistic time acceleration".

The above frequency changes in the GPS system were obtained by classical methods without using Lorentz transformations or formulas of the general theory of relativity. These changes completely coincide with those that we were able to find in open publications, and this proves that relativistic calculations and "time dilation" have nothing to do with the GPS system and are redundant.
