## I. INTRODUCTION

Technological advancement has had a vital impact on current world trends. It has enabled communication, information processing and collaboration at every level, from personal use to collaboration between departments in a workplace organisation. It is therefore important to understand how Information Technology (IT) infrastructure evolves to adapt to ever-changing demands and organisational needs. IT infrastructure components such as hardware, software and operating systems need to be well designed to enhance productivity, security, and accessibility, and hence to adapt to technological demands and changes through innovation [1]. In the 21st century, information has moved away from physical form and has become one of the most important assets in the current world. Whether it concerns customers or users, organisational information is derived from raw input data which is collected, organised and processed. It is therefore important to be able to store this information in specific locations, such as a storage device in a computer like a hard drive or a solid-state drive. However, storing exabytes of data and information requires an abundance of storage; data at this scale is often known as big data. Data centers provide the infrastructure, including networking and power, that big data applications rely on.

### a) Importance of Cooling Data Centers

Data centers hold a variety of IT infrastructure equipment, and this hardware generates heat. Overheating is the biggest threat to data centers. When equipment overheats, the servers lose performance and become slower at carrying out tasks, and internal damage can occur that shortens the hardware's lifespan. Keeping data centers at ideal operating temperatures therefore reduces maintenance costs and maintains their reliability and uptime [2].

Another consideration is data integrity. Heat can cause the equipment to run at a much slower rate, reducing efficiency and processing speed, and it increases the risk of data becoming corrupted or even lost.

In addition, the demand for information keeps growing, so organisations have to install and maintain more services, which results in a greater need for IT infrastructure to house servers in a data center. This need can increase server density as more servers are packed in to deliver services such as networking, security, cloud storage and cloud computing.

Finally, cooling systems improve a data center's energy efficiency by keeping operations at optimal temperatures, which further improves hardware reliability. This extends the longevity of the servers and enhances performance by preventing thermal throttling of the processing speed of hardware such as the CPU or GPU. There are therefore many reasons to cool data centers, and as the demand for data grows, so does the importance of their cooling systems.

### b) Challenges in Cooling Systems

According to [3], there are three main components in a data center:

- IT infrastructure

- Cooling systems

- Power Distribution Unit (PDU)

Although cooling systems improve energy efficiency within the server hardware, they add to the already high energy consumption of the data center as a whole, which must also power the computers, network, and servers that store and process data. Global data center electricity consumption is roughly estimated at between 220 and 320 TWh, which represents almost $1.3\%$ of global demand [3]. Adding cooling systems therefore means higher energy costs for an organisation to bear.

Other challenges are as follows.

Infrastructure: High cooling system consumption results in high operational costs. Of the estimated consumption of up to 320 TWh, the cooling system takes up to $50\%$ of the power in order to keep temperatures at an optimal level. Higher data demand brings increased server density in the data center, which in turn raises the cooling demand needed to keep server temperatures in check. This can also force data centers to retrofit their facilities. Older data centers risk becoming obsolete because they cannot support modern cooling systems such as liquid cooling, and these facilities are expensive to revamp or relocate and involve complex data migration [3].

Environmental Factors: Location plays a huge role for data centers, in both start-up cost and energy cost. Data centers in warmer climates consume more energy for cooling because cooling demand in these regions is high. This can also cause thermal pollution. It further contributes to carbon emissions, since the power required relies on fossil-fuel-based extraction, which can destroy habitats and ecosystems [4].

Regulatory Compliance: As data demand has grown over the years, large organisations have built their own data centers scattered across a country or have outsourced them to other countries at low cost, which makes energy use and emissions easy to exploit. Data centers must comply with the legal requirements that apply to them. Frameworks are therefore needed to govern data handling and energy consumption so that data centers achieve the CIA triad (Confidentiality, Integrity, Availability).

ISO 50001 - The International Organization for Standardization established this energy management standard to commit organisations to efficient energy management, to setting targets and to implementing energy-saving innovations. In particular, ISO 50001:2018 can be applied to green data centers to provide energy-saving plans and targets [5].

ASHRAE Thermal Guidelines - These guidelines are set by the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) as thermal guidelines for data centers.

- The guidelines help balance hardware performance against the cooling needed.

- The recommended temperature for a data center is between 18 and $27^{\circ}\mathrm{C}$ and the recommended humidity is between 20 and $80\%$ [5].
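As a minimal sketch, the recommended ranges quoted above can be turned into a simple check on a sensor reading. The function and constant names are hypothetical, the sample readings are made up, and the bounds are simply the figures cited from [5], treated here as inclusive.

```python
# Recommended envelope as quoted in the text (temperature in degrees Celsius,
# relative humidity in percent). All names here are hypothetical.
RECOMMENDED_TEMP_C = (18.0, 27.0)
RECOMMENDED_RH_PERCENT = (20.0, 80.0)


def within_recommended_envelope(temp_c: float, rh_percent: float) -> bool:
    """Return True if a reading lies inside both recommended ranges (inclusive)."""
    t_low, t_high = RECOMMENDED_TEMP_C
    rh_low, rh_high = RECOMMENDED_RH_PERCENT
    return t_low <= temp_c <= t_high and rh_low <= rh_percent <= rh_high


if __name__ == "__main__":
    # Made-up sample readings: (temperature, relative humidity).
    for temp_c, rh in [(22.0, 45.0), (29.5, 45.0), (22.0, 85.0)]:
        status = "ok" if within_recommended_envelope(temp_c, rh) else "out of range"
        print(f"{temp_c:.1f} C, {rh:.0f}% RH: {status}")
```

A monitoring system would typically run a check like this per rack or per aisle and raise an alert when a reading falls outside the envelope.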

PUE - Power Usage Effectiveness measures the energy efficiency of a data center. It is calculated by dividing the total energy that the facility uses by the energy used by the IT hardware [6].

- $\mathrm{PUE} = \dfrac{\text{Total facility energy}}{\text{IT equipment energy}}$

- The ideal PUE is 1.0. The lower the PUE, the better the efficiency, since a low PUE means that little energy is spent on overheads such as cooling.
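The PUE definition above can be sketched as a small function. The function name, the choice of kWh as the unit and the meter readings in the example are illustrative assumptions and are not taken from the cited sources.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        # The facility total includes the IT load, so it cannot be smaller.
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


if __name__ == "__main__":
    # Hypothetical readings over the same period: 1500 kWh for the whole
    # facility, of which 1000 kWh was drawn by the IT equipment.
    print(f"PUE = {pue(1500.0, 1000.0):.2f}")
```

Both readings must cover the same time period; a PUE computed from mismatched intervals is not meaningful.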

### c) Overview of Traditional VS Modern Cooling solutions

This study on cooling systems aims to find the optimal cooling systems for data centers, to compare the effectiveness of traditional and modern techniques, and to identify future trends that could make it possible to manage data centers effectively.

Table 1

<table><tr><td></td><td>Traditional Cooling Methods</td><td>Modern Cooling Solutions</td></tr><tr><td>Cooling Methods</td><td>Air-based</td><td>Liquid-based</td></tr><tr><td>Efficiency</td><td>Moderately efficient but with high energy consumption. Prone to overheating, reliability, and performance issues.</td><td>Significantly more efficient, as it operates without the risk of overheating, which is especially needed in higher-density data centers.</td></tr><tr><td>Scalability</td><td>Easier to scale up by adding more AC units; however, higher densities increase the cost of scaling.</td><td>More complex to implement; however, it handles thermal load efficiently without a significant increase in energy use, and it is adaptable to growth and future-proofing.</td></tr><tr><td>Environmental Factors</td><td>Heavy energy consumption by AC units and fans results in a high amount of greenhouse gas emissions.</td><td>Eco-friendly, with comparatively lower energy consumption, which results in reduced carbon emissions.</td></tr><tr><td>Cost</td><td>Easy and cheaper to start up; however, long-term scale-up of cooling leads to high energy consumption.</td><td>High up-front cost to invest in and install specialised equipment; however, maintenance and energy costs drop significantly because it is energy efficient.</td></tr><tr><td>Examples</td><td>CRAC/CRAH, Raised floor, Chiller system</td><td>Liquid cooling, AI driven Cooling</td></tr></table>

## II. BACKGROUND STUDY

### a) Early Cooling Methods

According to [8], data centers have been in operation since the 1970s, and from the beginning they have been prone to generating heat. Over the past 50 years there has therefore been significant improvement in the efficiency of cooling systems based on AC and ventilation. As mentioned earlier, the Computer Room Air Conditioner (CRAC) and Computer Room Air Handler (CRAH) control the temperature of the data center with pressurised air delivered through raised floor systems, which made CRAC the most prominent way of maintaining data center temperatures. To this day, CRAC and CRAH units are still operating in data centers; however, CRAC with raised floor systems has become inefficient due to rising maintenance costs. Increased server density has significantly affected these cooling systems, making it difficult to keep the temperature at an optimal level between 18 and $27^{\circ}\mathrm{C}$.

### b) Hyperscale of Data Centers

To keep up with the demand for data, companies today have a significant demand for storage as well. Information is one of the most crucial intangible assets that anyone can hold, but it can also lose value over time. These companies therefore have to meet their demand on data centers while also making their storage facilities efficient. For high-performing companies such as Google, Microsoft and AWS in particular, hyperscale has led to data centers around the world that operate at more than 40 MW.

These companies can have over 5000 physical servers, arranged in high-density server racks to maximise server capacity. This helps maximise storage space, increase performance, and accommodate higher-powered AI chips to provide their services.

Running these data centers inevitably consumes a vast amount of power. Supplying them with renewable energy such as solar and wind power can therefore help move away from fossil-fuel-powered data centers and make them energy sustainable. With the help of recycling old chips and hardware, the transformation of data centers can be made more efficient and less wasteful [9].

### c) Evolution of Cooling Methods

Table 2

<table><tr><td>1950-1970</td><td>Air conditioning and ventilation systems (IBM was the first to introduce liquid cooling, for its System/360 Model 91 computers) [10]</td></tr><tr><td>1980s</td><td>Raised floors with CRAC and CRAH</td></tr><tr><td>1990-2000</td><td>Server density increased, which introduced hot/cold aisle containment and chiller cooling systems</td></tr><tr><td>2010s</td><td>Liquid cooling became the trend with immersion cooling</td></tr><tr><td>Present</td><td>Advanced liquid immersion cooling and evaporative cooling with integration of IoT and AI</td></tr></table>

## III. APPLICATION AND USAGE

### a) Traditional Cooling Methods

#### i. Hot/Cold Aisle Containment & Raised Floor Cooling

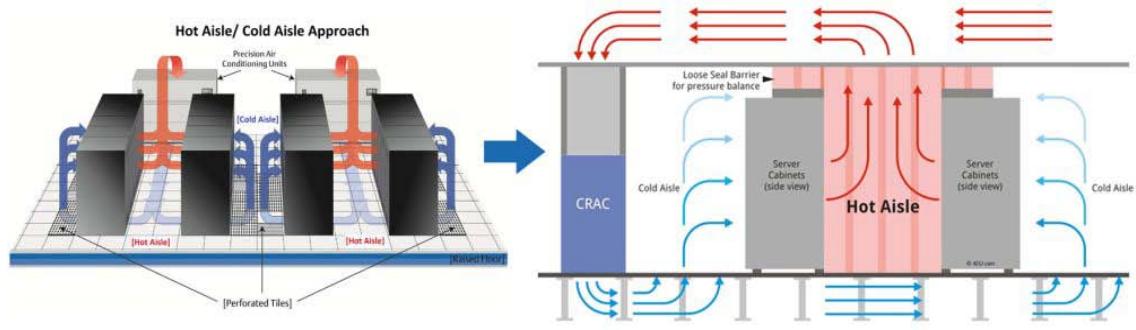

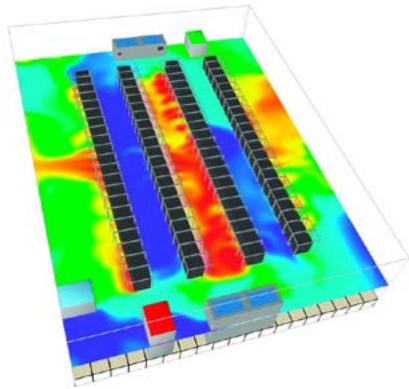

Figure 1

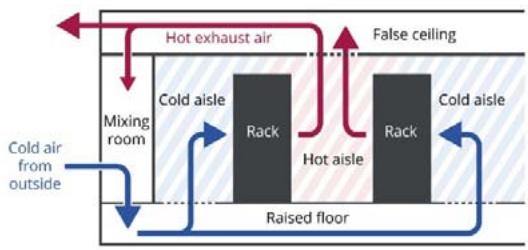

This is a strategic layout for organising the server racks in a data center so that cooling is efficient. It improves energy consumption and reduces cooling costs through effective airflow management. According to [12], hot and cold aisle containment involves alternating rows of server racks, with cold air intakes facing one aisle and hot air exhausts facing the opposite direction. It is also combined with a further innovation in server organisation, the use of raised floors [13].

Figure 2

#### ii. CRAC & CRAH

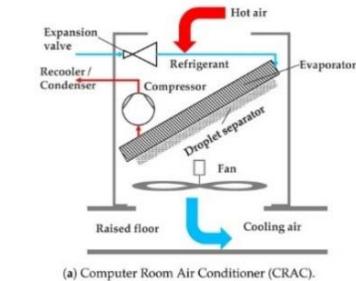

CRAC - A Computer Room Air Conditioner closely resembles traditional air conditioning. It is designed to maintain an optimal temperature for data center operations, providing air distribution and humidity control in the server rooms. A CRAC takes in warm air from the room and uses a direct expansion refrigeration cycle, in which the air is cooled as it passes over a cooling coil containing refrigerant. The coil is kept cold by a compressor, and the excess heat is rejected through a glycol and water mix or to ambient air.

CRAH - A Computer Room Air Handler is similar to a CRAC. Instead of refrigerant, its cooling coils are filled with chilled water, and a fan blows the warm air drawn in from the computer room over the coils to remove excess heat. Fan speed is regulated to keep temperatures stable at the optimal level, and humidity can be controlled as well [14].

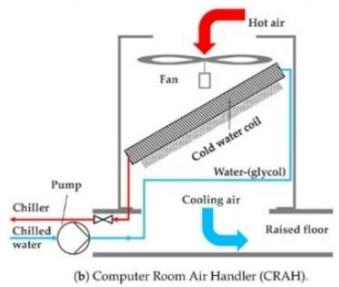

#### iii. Chiller Based

Figure 3

A chiller-based cooling system combines water-cooled chillers with cooling towers. The chillers supply chilled water at between 8 and $15^{\circ}\mathrm{C}$, which is pumped through pipes to the CRAH units. The chillers run on a vapour-compression or absorption refrigeration cycle. The cooling tower takes in the warm water from the chillers and rejects the waste heat to the atmosphere, and the cooled water is returned to the chiller to start the process again. In the tower, heat is transferred to the airstream, which leaves at close to $100\%$ humidity, and the circulated water is reused to minimise thermal pollution [15].

Based on [15], in the winter months a water-side economizer can similarly use the evaporative cooling of the tower to produce chilled water in place of the chiller, since it operates on ambient conditions. When the outside air is cold enough to cool the condenser water, a heat exchanger transfers heat between the cooling tower loop and the loop that serves the servers in the data center.

### b) Advanced Cooling Techniques

#### i. AI Driven Cooling Systems

Figure 4

The system in [16] ensures that airflow in the data center is not restricted around the CRAC units. It uses thermal imaging, as shown in the picture above, together with sensors that record the temperature in the server and computer rooms of the data center. AI adjusts airflow dynamically using the real-time data recorded by these sensors and the thermal imaging, keeping temperature and humidity at an optimal level with the aim of cutting cooling costs by $40\%$. The thermal optimization described in [17] makes manual adjustment for consistent temperatures obsolete, and it has improved data center performance through data collection and analysis integrated with CRAC cooling systems.
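The idea of adjusting airflow from real-time sensor data can be illustrated with a deliberately simple feedback rule. This is not the method of [16] or [17], which rely on machine learning; it is a plain proportional controller used as a stand-in, and the target temperature, gain and minimum fan speed are hypothetical values chosen for illustration.

```python
def next_fan_speed(
    current_speed_pct: float,
    measured_temp_c: float,
    target_temp_c: float = 24.0,     # hypothetical set point
    gain_pct_per_c: float = 5.0,     # hypothetical gain: % fan speed per degree of error
    min_speed_pct: float = 20.0,     # hypothetical floor so airflow never stops
) -> float:
    """Proportional update: raise fan speed when too warm, lower it when too cool."""
    error_c = measured_temp_c - target_temp_c
    proposed = current_speed_pct + gain_pct_per_c * error_c
    # Clamp the result to the physically meaningful range.
    return max(min_speed_pct, min(100.0, proposed))


if __name__ == "__main__":
    speed = 50.0
    # Made-up sequence of inlet temperature readings in degrees Celsius.
    for reading in [26.0, 25.0, 24.0, 23.0]:
        speed = next_fan_speed(speed, reading)
        print(f"reading {reading:.1f} C -> fan speed {speed:.0f}%")
```

A learning-based system replaces the fixed gain with a model that predicts how the room will respond, but the loop of measuring, deciding and actuating is the same.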

#### ii. Free Cooling

Figure 5

As simple as it sounds, free cooling works by drawing cold air from outside to supply airflow for the cooling system, which cools the data center naturally. However, a free cooling system still uses pumps and fans to distribute the cold air through the server racks. The warm air is then released back into the environment outside the data center, so the system largely recycles ambient air. As a result, using outside air improves efficiency and significantly reduces energy costs [27].
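Because free cooling depends on the outside air being cold enough, a rough first estimate of its suitability for a site is the share of hours in which the outdoor temperature is at or below a usable threshold. The sketch below assumes such a threshold exists and is supplied by the caller; the function name, the threshold of 20 °C and the sample temperatures are hypothetical, and humidity is ignored for simplicity.

```python
from typing import Iterable


def free_cooling_fraction(outdoor_temps_c: Iterable[float], max_usable_temp_c: float) -> float:
    """Fraction of readings at or below the threshold for usable outside air."""
    temps = list(outdoor_temps_c)
    if not temps:
        raise ValueError("at least one temperature reading is required")
    usable = sum(1 for t in temps if t <= max_usable_temp_c)
    return usable / len(temps)


if __name__ == "__main__":
    # Made-up hourly outdoor temperatures in degrees Celsius.
    sample = [5.0, 12.0, 19.0, 25.0]
    share = free_cooling_fraction(sample, max_usable_temp_c=20.0)
    print(f"free cooling usable in {share:.0%} of sampled hours")
```

The remaining hours are those in which a contingency such as mechanical cooling would be needed.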

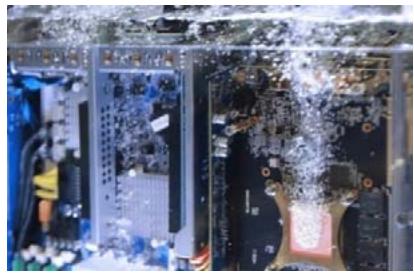

#### iii. Immersion Cooling & Direct-to-Chip

Figure 6

Immersion Cooling: This form of liquid cooling in data centers shows that the servers in the data centers are submerged into a non-conductive liquid where the heat can be transferred directly to the liquid itself without any need for cooling components such as fans or CRAC. Gigabyte said that in [20], the services are packed closely together making their hardware components more compact making it more effective two dissipating heat with immersion cooling and is also set to increase the power usage effectiveness and having minimal maintenance required. There are two types of immersion cooling:

### c) Comparison between Traditional & Modern Cooling Systems

- Single Phase- The server components submerged into a thermally conductive coolant which the heat is transferred to the liquid itself. This liquid does not boil or freeze as it maintains the liquid form.

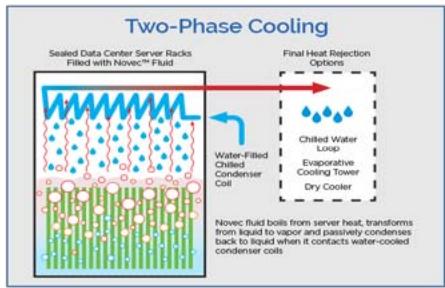

- Two Phase- The server components in the data center are submerged in the thermally conductive coolant. However, the liquid it is submerged in has a low temperature of evaporation where the vapour is then cooled with a heat exchange method using a condenser coil having a recyclable system to cool the server components again. [22].

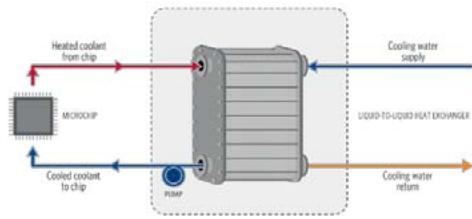

Figure 7

Direct-to-Chip-this component also uses a liquid coolant but rather than completely submerging the servers in the liquid, the liquid is transferred to the heatprone IT infrastructure such as the CPU, GPU, and memory which those components come with a cold plate attached to the chip. The coolant transfers those heat through the liquid itself through a closed looping system which eliminates the need of completely submerging the whole server in the liquid coolant and allows server density to increase effectively where the servers can be compacted in the data center making it effective for certain organisations to carry out their operations such as cloud computing and networking. [23]

Option 1: Rejecting heat to a cooled water loop Figure 8

Table 3

<table><tr><td>Type</td><td>Conventional Methods</td><td>Advanced Methods</td></tr><tr><td>Air Based Cooling</td><td>CRAC<br>CRAH</td><td>AI Driven Cooling</td></tr><tr><td>Air Flow Management</td><td>Hot/Cold Aisle<br>Raised Floor</td><td>Free Cooling</td></tr><tr><td>Liquid Based</td><td>Chiller based</td><td>Immersion Cooling<br>Liquid Cooling</td></tr></table>

### d) Conventional Methods

Table 4

<table><tr><td></td><td>Advantages</td><td>Disadvantages</td></tr><tr><td>CRAC</td><td>·Widely used as it has a cheaper initial cost.<br>·Precise temperature control.<br>[24]</td><td>·High energy consumption.<br>·Operating costs increase when scaling up.<br>[24]</td></tr><tr><td>CRAH</td><td>·Widely used in large/hyperscale data centers.<br>·Works effectively with chiller-based cooling.<br>[24]</td><td>·High initial cost.<br>·Depends on an external water system.<br>[24]</td></tr><tr><td>Hot/Cold Aisle Containment</td><td>·Effective airflow management.<br>·Reduced cooling energy use.<br>[25]</td><td>·Higher server density requires additional cooling.<br>·Requires careful architecture for the air to flow.<br>[25]</td></tr><tr><td>Raised Floor</td><td>·Better airflow and airflow management.<br>·Provides ample space for cable and power management if the data center is scaling up.<br>[26]</td><td>·Installation and initial costs are high (a strong structure is required).<br>·Risk of obstruction from cables, dust and debris, which is a fire hazard and can also block airflow.<br>[26]</td></tr><tr><td>Chiller Based</td><td>·Works effectively with CRAH.<br>·Cost efficient for large-scale data centers.<br>[15]</td><td>·High energy and maintenance costs due to regular maintenance and surveillance.<br>·A large amount of water is used in the chiller for the system to work.</td></tr></table>


### e) Advanced Methods

Table 5

<table><tr><td></td><td>Advantages</td><td>Disadvantages</td></tr><tr><td>AI Driven Cooling</td><td>·Dynamic temperature control reduces energy costs.<br>·Can predict and enhance efficiency, reducing failures.<br>[16][17]</td><td>·Requires AI, machine learning and real-time monitoring, which can be complex to implement.<br>·Initial costs are high.<br>[16][17]</td></tr><tr><td>Free Cooling</td><td>·Uses natural air from outside, reducing energy costs.<br>·Cost effective and sustainable due to its eco-friendliness.<br>[27]</td><td>·Only suitable in limited regions due to temperature and humidity.<br>·Requires a contingency during warmer periods.<br>[27]</td></tr><tr><td>Immersion Cooling</td><td>·Highly efficient as it does not require air.<br>·Effective for data centers with higher server density.<br>[20]</td><td>·Higher initial costs.<br>·Requires complex and significant data center infrastructure.<br>[20]</td></tr><tr><td>Direct-to-Chip</td><td>·Delivers the liquid coolant only to the components that generate the most heat.<br>·Effective cooling efficiency supports high-performance workloads.<br>[23]</td><td>·Prone to leaks in the server, which can cause structural integrity to fail.<br>·Requires modification of the IT components in the server for cold plate integration.<br>[23]</td></tr></table>

## IV. CURRENT TRENDS

### a) Meta Data center Cooling System, Singapore

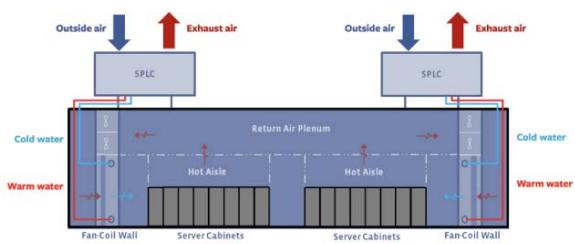

StatePoint Liquid Cooling (SPLC): SPLC is a patented cooling system from Nortek used in Meta's data centers, and it is an advanced evaporative cooling system. It eliminates mechanical cooling in the data center, minimising its contribution to pollution, while also allowing flexibility in data center design: the data center in Singapore does not take up too much space, so there is room for expansion. According to [28] [29], the PUE of the Meta data center is 1.19, making it energy efficient. A PUE of 1.19 means that for every 1 unit of energy used to power IT equipment, a further 0.19 units are used by other, non-computing equipment.
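This reading of the PUE value can be written out explicitly. As a sketch, let $E_{\mathrm{IT}}$ be the energy used by IT equipment and $E_{\mathrm{other}}$ the energy used by everything else; both symbols are introduced here for illustration only.

$$
\mathrm{PUE} = \frac{E_{\mathrm{IT}} + E_{\mathrm{other}}}{E_{\mathrm{IT}}} = 1 + \frac{E_{\mathrm{other}}}{E_{\mathrm{IT}}}
\quad\Longrightarrow\quad
\frac{E_{\mathrm{other}}}{E_{\mathrm{IT}}} = 1.19 - 1 = 0.19
$$

Seen as a share of the total instead, the IT equipment accounts for $1/1.19 \approx 84\%$ of the facility's energy and the remaining $0.19/1.19 \approx 16\%$ goes to non-IT loads.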

Figure 9

### b) Green Mountain Data Center, Norway

Free Cooling: This data center in Rennesøy, Norway, is considered one of the most sustainable and green data centers. The cooling system uses fjord water as a source of natural cooling. The combination of cold air and water from the outside environment keeps the server components in the data center at the optimal temperature to maintain cloud storage operations. It is a hydro-powered, renewable free cooling system that is described as not needing energy-intensive Power Distribution Units (PDU) to regulate energy to the components in the data center. It achieves a PUE of 1.06-1.10, making it one of the most efficient data centers in the world, with zero carbon emissions from its cooling operations [30].

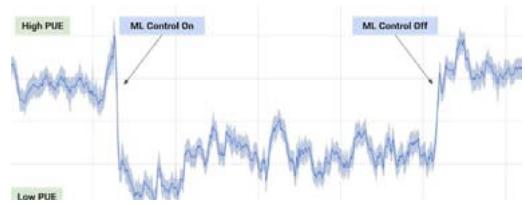

### c) Google Data Center, Mayes County, Oklahoma

AI Driven Cooling System: This is one of the first hyperscale data centers to use DeepMind AI, which applies machine learning to real-time data to optimise energy consumption. According to [31], integrating DeepMind AI in 2016 produced a $40\%$ reduction in cooling costs. However, it took two years to implement the machine learning and get the data centers operating efficiently with it. The dynamic cooling approach uses sensors to control hyperscale data centers such as Google's in Oklahoma, adapting to changes in outside temperature and supporting Google's aim of achieving net-zero emissions [31].

Figure 10

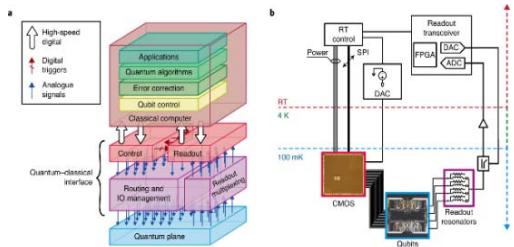

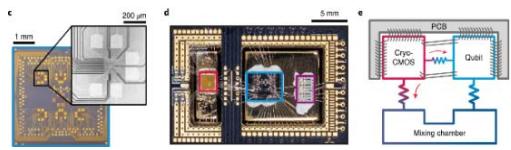

### d) Future Trends- Cryogenic Cooling System

Conventional data centers around the world rely on either air or liquid for cooling. Cryogenic cooling takes this a notch higher and opens possibilities for future data center operations, enabling higher-performance computing and quantum computing, which would rely on cryogenic cooling of complementary metal-oxide-semiconductor (CMOS) devices.

With it, data centers could serve supercomputers with higher clock speeds, better performance in data services and data allocation, and greatly increased energy efficiency, supporting research on quantum computing. Cryogenic temperatures are those below $-150^{\circ}\mathrm{C}$ [33]. This would open possibilities for sophisticated and complex data centers, such as those owned by AWS, Microsoft, and Google, to accommodate cryogenic systems. Such systems would use liquid nitrogen and would need to combine power distribution with the cryogenic cooling system [32].

Figure 11

## V. CONCLUSION

Data centers enable large-scale organisations to reach hyperscale levels of data storage and allocation. The demand, however, comes from the data itself, so organisations that specialise in cloud computing and networking and have sufficient funds to operate a data center will want to keep their information from losing its value. Determining which cooling system is optimal is not clear-cut, because each cooling system has specific advantages and disadvantages depending on its use. For example, a free cooling system works well in colder regions where the data center is small in scale; if server density increases, energy consumption and heat generation increase, making free cooling ineffective and resulting in a higher PUE. Evaporative or liquid cooling, by contrast, is better suited to warmer, humid regions. It may have a higher PUE, but it allows data centers to scale up server density and achieve economies of scale in the long run, so that the cooling system of the data center is future-proofed.


References


F Bray (2023). What Is IT Infrastructure & Why Is It Important?.

S Sundar (2024). Hazards of high temperature in data centers.

Ahmed Alkrush,Mohamed Salem,Osama Abdelrehim,A Hegazi (2024). Data centers cooling: A critical review of techniques, challenges, and energy saving solutions.

B Mckay (2024). Data center Cooling Solutions: A New Sustainable Era.

A Patrizio (2024). Three tech companies eyeing nuclear power for AI energy.

(2023). LAS VEGAS SANDS CORP., a Nevada corporation, Plaintiff, v. UKNOWN REGISTRANTS OF www.wn0000.com, www.wn1111.com, www.wn2222.com, www.wn3333.com, www.wn4444.com, www.wn5555.com, www.wn6666.com, www.wn7777.com, www.wn8888.com, www.wn9999.com, www.112211.com, www.4456888.com, www.4489888.com, www.001148.com, and www.2289888.com, Defendants..

(2024). Direct Liquid Cooling vs. Traditional Air Cooling in Servers.

K Richardson (2025). Four mega trends to watch in 2016.

Dcd (2019). Ten years of liquid cooling.

Egor Karitskii (2024). The Evolution of Data Center Cooling: From Air-Based Methods to Free Cooling.

C Flores (2021). Hot and Cold Aisle Containment Differences -AKCP Monitoring.

(2024). Aisle Containment Capabilities Video.

A Hassler (2021). What Is The Difference Between CRAC & CRAH Units? DataSpan.

L Jose (2019). Data center Cooling Infrastructure.

Shamsun Zaman,Nadia Sharme,Rehnuma Sumona,M Jekrul Islam,Ahmed Reza,Mohammad Arefin (2011). AI-Based Air Cooling System in Data Center.

Siemans (2023). How Artificial Intelligence is Cooling Data Center Operations Dynamically match cooling to IT load in real time.

Shamsun Zaman,Nadia Sharme,Rehnuma Sumona,M Jekrul Islam,Ahmed Reza,Mohammad Arefin (2023). AI-Based Air Cooling System in Data Center.

(2021). Air Cooling in Data Centers: How Does It Work? | 2CRSi.

I Kuncoro,N Pambudi,M Biddinika,I Widiastuti,M Hijriawan,K Wibowo (2019). Immersion cooling as the next technology for data center cooling: A review.

K Cooper Peter (2021). Single-Phase .vs. Two-phase Immersion Cooling | Immersion Cooling.

C Tozzi (2023). Cooling the Data Center.

J Mahan (2023). Hard to CRAC: The mechanisms of CRAC channel gating.

Dave Moody (2017). Hot Aisle Versus Cold Aisle Containment.

T Liquori (2020). Advantages of Data Center Raised Floors.

J Hanák (2015). How Data Center Free Cooling Works and Why it is Brilliant.

V Mulay,; Clay (2018). StatePoint Liquid Cooling system: A new, more efficient way to cool a data center.

Green Mountain (2021). Green Mountain Data Center.

R Evans,J Gao (2016). DeepMind AI reduces google data center cooling bill by 40%.

L Fadhel Ayachi,J Yang,A Sze,Romagnoli (2018). Cryogenic polygeneration for green data center.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

M. Bakir Faique. 2026. “Optimal Cooling Systems in Current Data Centers”. Global Journal of Computer Science and Technology - G: Interdisciplinary GJCST-G Volume 25 (GJCST Volume 25 Issue G1): .
