As more businesses adopt distributed architectures that require complex optimization techniques, enterprise cloud platforms encounter previously unanticipated challenges in maintaining optimal performance, security, and costeffectiveness. By employing real-time telemetry data, sophisticated machine learning methods, and responsive feedback loops to develop self-optimizing cloud operations, signal-driven decision systems represent a revolutionary approach. Signal classification taxonomies that distinguish performance measurements, resource usage indications, security issues, and user behavior patterns across time scales and data sources are included in the full framework. Algorithmic scoring models incorporate statistical analysis, ensemble methods, and security-aware normalization techniques to transform raw signal data into actionable optimization recommendations while maintaining multi-tenant isolation requirements. Control system architectures apply proportional-integral-derivative principles and adaptive feedback loops operating at multiple organizational levels, from immediate operational responses to strategic platform evolution decisions. The integration of security frameworks and operational maturity modeling enables ongoing monitoring, prompt threat identification, and automated incident management. Implementation strategies concentrate on techniques for a phased rollout that minimize operational disruptions and improve conformance with existing infrastructure. It is anticipated that contemporary technologies such as ensemble-based deep learning techniques, edge computing, and quantum processing would significantly improve signal processing capabilities and optimization accuracy. Businesses’ approaches to cloud governance and optimization are being drastically altered by unprecedented levels of automation and intelligence in cloud platform management, which are made possible by the integration of artificial intelligence, stream processing, and predictive analytics technologies.

## II. SIGNAL CLASSIFICATION AND TAXONOMY FRAMEWORK

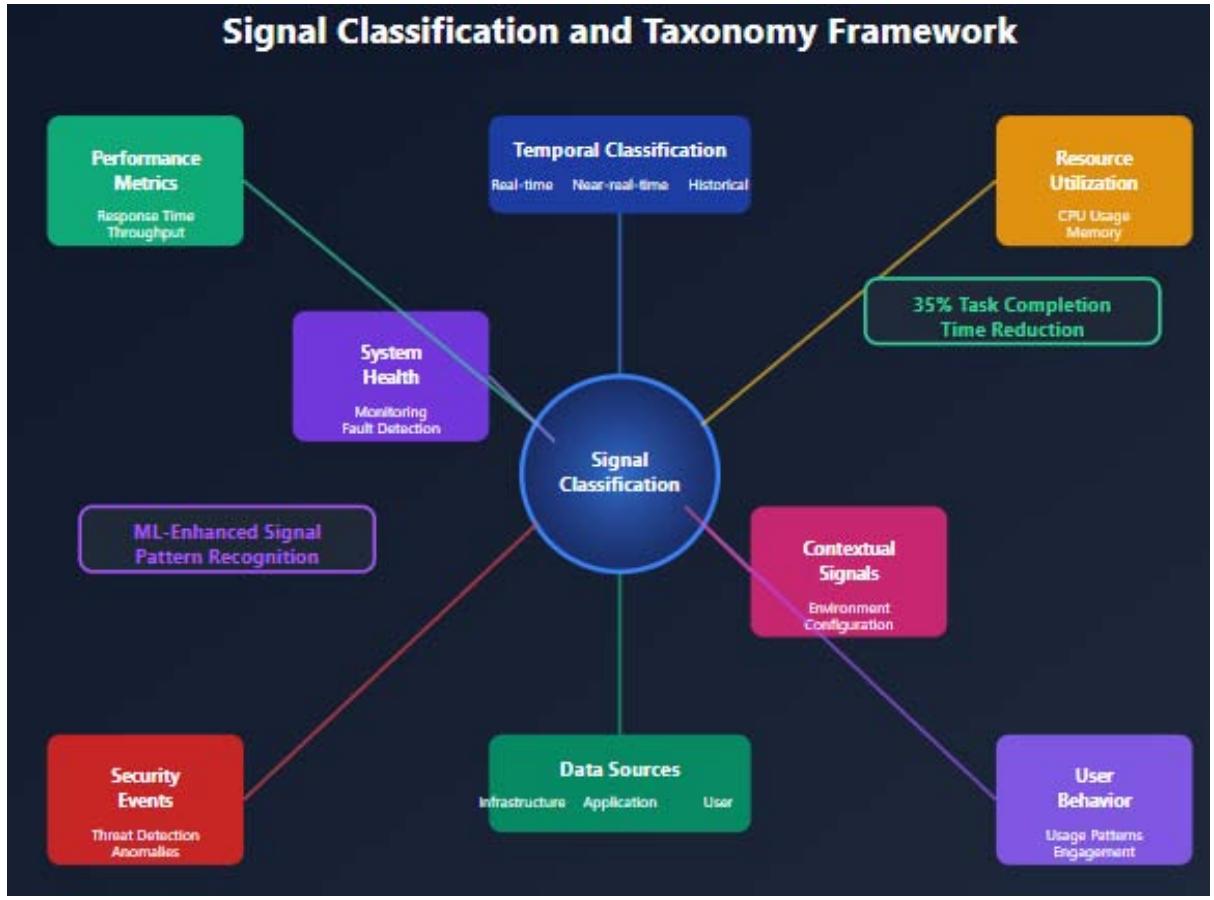

The foundation of effective signal-driven decision systems lies in establishing a comprehensive classification framework that categorizes various types of signals generated within cloud platforms. Normalization-based task scheduling algorithms for heterogeneous multi-cloud environments have demonstrated significant improvements in resource allocation efficiency, with studies showing up to a $35\%$ reduction in task completion times when proper signal classification methodologies are implemented [3]. Signal classification enables systematic analysis of telemetry data and facilitates the development of targeted optimization strategies. The proposed classification matrix organizes signals across multiple dimensions, including temporal characteristics, data sources, criticality levels, and actionability thresholds. Primary signal categories encompass performance metrics, resource utilization indicators, security events, user behavior patterns, and system health diagnostics. Performance metrics include response times, throughput measurements, error rates, and service level agreement compliance indicators. Resource utilization signals capture CPU usage, memory consumption, storage capacity, and network bandwidth patterns across different time scales. Security-related signals encompass threat detection events, access pattern anomalies, compliance violations, and vulnerability assessments. Machine learning approaches to cloud resource allocation optimization have proven particularly effective in processing these complex signal types, with comprehensive studies indicating substantial improvements in efficiency and performance when advanced algorithms are applied to signal analysis and resource management decisions [4]. User behavior signals provide insights into application usage patterns, feature adoption rates, and user engagement metrics that inform capacity planning and feature development decisions. System health diagnostics include infrastructure monitoring data, service availability metrics, and fault detection indicators that enable proactive maintenance and incident prevention. The classification framework also incorporates contextual signals that provide environmental information about deployment configurations, geographical distributions, and organizational policies. Advanced machine learning techniques have shown remarkable success in identifying patterns within these diverse signal types, enabling more precise resource allocation and performance optimization strategies. The temporal

dimension of signal classification distinguishes between real-time signals requiring immediate action, near-realtime signals enabling short-term optimization, and historical signals supporting long-term strategic planning. Real-time signals typically involve critical system failures, security breaches, or performance degradation events that demand immediate response. Near-real-time signals encompass capacity planning indicators, performance trend analysis, and resource optimization opportunities that can be addressed within hours or days. Historical signals provide valuable insights for establishing baseline performance expectations and identifying long-term trends that inform strategic decision-making processes. Data source classification identifies the origin of signals, whether generated by infrastructure components, application layers, user interactions, or external monitoring systems. Infrastructure signals originate from physical and virtual hardware components, including servers, storage systems, and network devices. Application-level signals derive from software components, middleware systems, and business logic implementations. User-generated signals capture interaction patterns, transaction volumes, and usage behaviors that influence platform optimization decisions.

Figure 2: Signal Classification and Taxonomy Framework [3, 4]

## III. ALGORITHMIC SCORING MODELS AND PERFORMANCE NORMALIZATION

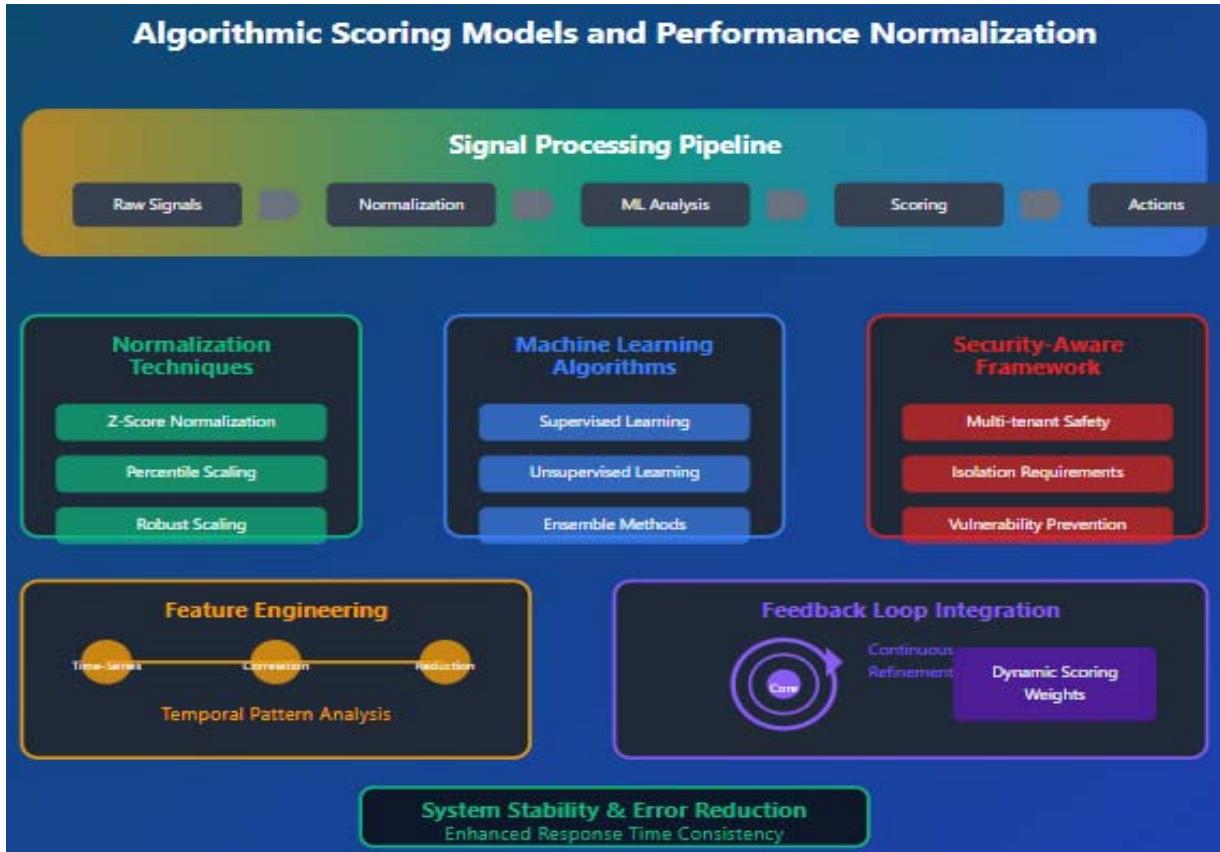

The development of algorithmic scoring models represents a critical component in transforming raw signal data into actionable optimization recommendations. Feedback loops in distributed systems have become essential mechanisms for maintaining system stability and performance, with modern implementations demonstrating significant improvements in response time consistency and error reduction across complex cloud architectures [5]. These models must address the challenge of normalizing performance metrics across heterogeneous cloud configurations while maintaining sensitivity to configuration-specific optimization opportunities. The scoring framework incorporates statistical analysis, machine learning algorithms, and domain-specific heuristics to generate consistent performance assessments. Performance normalization addresses the fundamental challenge of comparing metrics across different cloud configurations, instance types, and deployment architectures. The normalization process involves establishing baseline performance expectations for specific configuration patterns and adjusting observed metrics based on environmental factors. Statistical techniques such as z-score normalization, percentile-based scaling, and robust scaling methods

provide mechanisms for creating comparable performance indicators across diverse environments. Cloud security frameworks for safeguarding multi-tenant cloud architectures have identified performance normalization as a critical security consideration, particularly in environments where resource sharing and isolation requirements must be balanced with optimization objectives [6]. Machine learning algorithms enhance the scoring models by identifying complex patterns in signal data that traditional statistical methods might overlook. Supervised learning approaches leverage historical performance data and known optimization outcomes to train models that predict optimal configuration changes. Unsupervised learning techniques identify anomalous patterns and unexpected correlations that may indicate emerging optimization opportunities or potential issues requiring attention. The integration of security-aware normalization techniques ensures that performance optimization decisions do not compromise the integrity and isolation requirements of multi-tenant cloud environments. The algorithmic framework incorporates ensemble methods that combine multiple scoring approaches to improve prediction accuracy and reduce false positive rates.

Weighted scoring systems assign different importance levels to various signal types based on business impact, technical criticality, and operational priorities. Dynamic weighting mechanisms adjust scoring parameters based on changing system conditions and organizational objectives. Advanced security frameworks emphasize the importance of maintaining consistent scoring methodologies across different tenant environments while ensuring that optimization decisions do not inadvertently create security vulnerabilities or compromise data isolation requirements. Feature engineering plays a crucial role in developing effective scoring models by identifying the most relevant signal characteristics for optimization decision-making. Time-series analysis techniques capture temporal patterns in performance metrics, while correlation analysis identifies relationships between different signal types. Dimensionality reduction methods help manage the complexity of high-dimensional signal spaces while preserving the most informative features for optimization decisions. The implementation of sophisticated feedback mechanisms enables continuous refinement of scoring models based on observed outcomes and changing system dynamics.

Figure 3: Algorithmic Scoring Models and Performance Normalization [5, 6]

## IV. CONTROL SYSTEMS AND FEEDBACK LOOP ARCHITECTURE

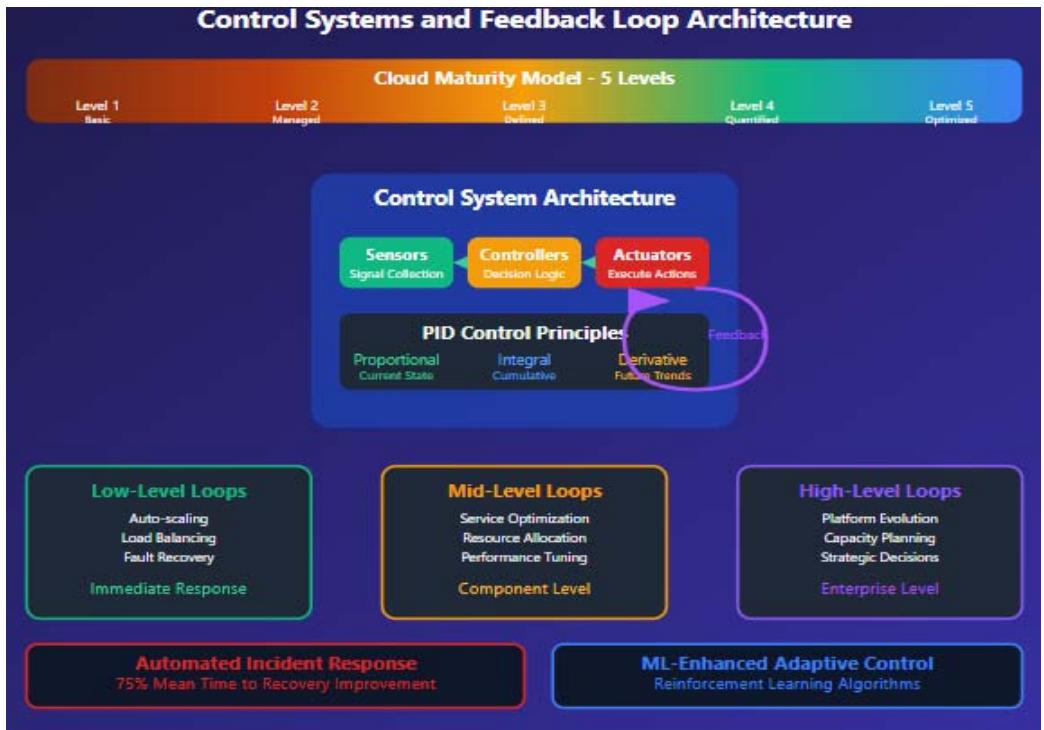

The implementation of signal-driven decision systems draws extensively from control systems theory, applying feedback loop principles to create self-regulating cloud platforms. Cloud maturity models have identified five distinct levels of organizational cloud adoption, progressing from basic infrastructure utilization to fully automated, self-optimizing platforms

that demonstrate advanced operational capabilities and strategic alignment [7]. Control system architectures provide proven frameworks for managing complex systems with multiple inputs, outputs, and feedback mechanisms. The application of these principles to enhance cloud platforms leads to possibilities for self-sufficient operations and ongoing advancements. Cloud platforms have feedback loops that operate at many levels, from service optimization to platform-wide governance and strategic planning. Auto-scaling, load balancing, and fault recovery are examples of urgent operational problems that are handled by low-level feedback loops. Enhancing services, allocating resources, and modifying performance among interconnected system components are the main goals of mid-tier feedback loops. High-level feedback loops encompass strategic decisions about platform evolution, capacity planning, and architectural improvements. Automated incident response systems have revolutionized cloud operations by enabling rapid detection, analysis, and resolution of operational issues, with modern implementations achieving mean time to recovery improvements of up to $75\%$ compared to manual response processes [8]. The control system architecture incorporates sensors, controllers, and actuators that work together to maintain desired system states. Sensors correspond to signal collection mechanisms that monitor various aspects of platform performance and behavior. Controllers implement decision-making logic that analyzes signal data and determines appropriate actions. Actuators perform the chosen actions, like scaling resources, modifying configurations, or initiating maintenance tasks. By integrating automated incident response capabilities into control system architectures, operational issues can be quickly identified and fixed, significantly reducing downtime and improving system reliability. Proportional-integral-derivative (PID) control principles provide mathematical foundations for developing responsive yet stable optimization systems. Proportional control responds to current performance deviations, integral control addresses cumulative performance issues, and derivative control anticipates future performance trends. The adaptation of PID principles to cloud optimization creates controllers that can respond appropriately to various types of performance signals. Advanced maturity models highlight the necessity of employing complex control systems that can adjust to evolving organizational needs and technical landscapes. Adaptive control systems enhance conventional control methods by modifying control parameters in response to varying system characteristics and environmental factors. Machine learning methods allow controllers to gain insights from past performance data and enhance decision-making progressively. Reinforcement learning algorithms enable controllers to investigate diverse optimization techniques and gain insights from the results of different actions. The progression to advanced maturity levels necessitates that organizations establish more complex control systems capable of functioning independently while remaining in sync with business goals and operational needs.

Figure 4: Control Systems and Feedback Loop Architecture [7, 8]

## V. CLOUD SECURITY AND OPERATIONAL MATURITY INTEGRATION

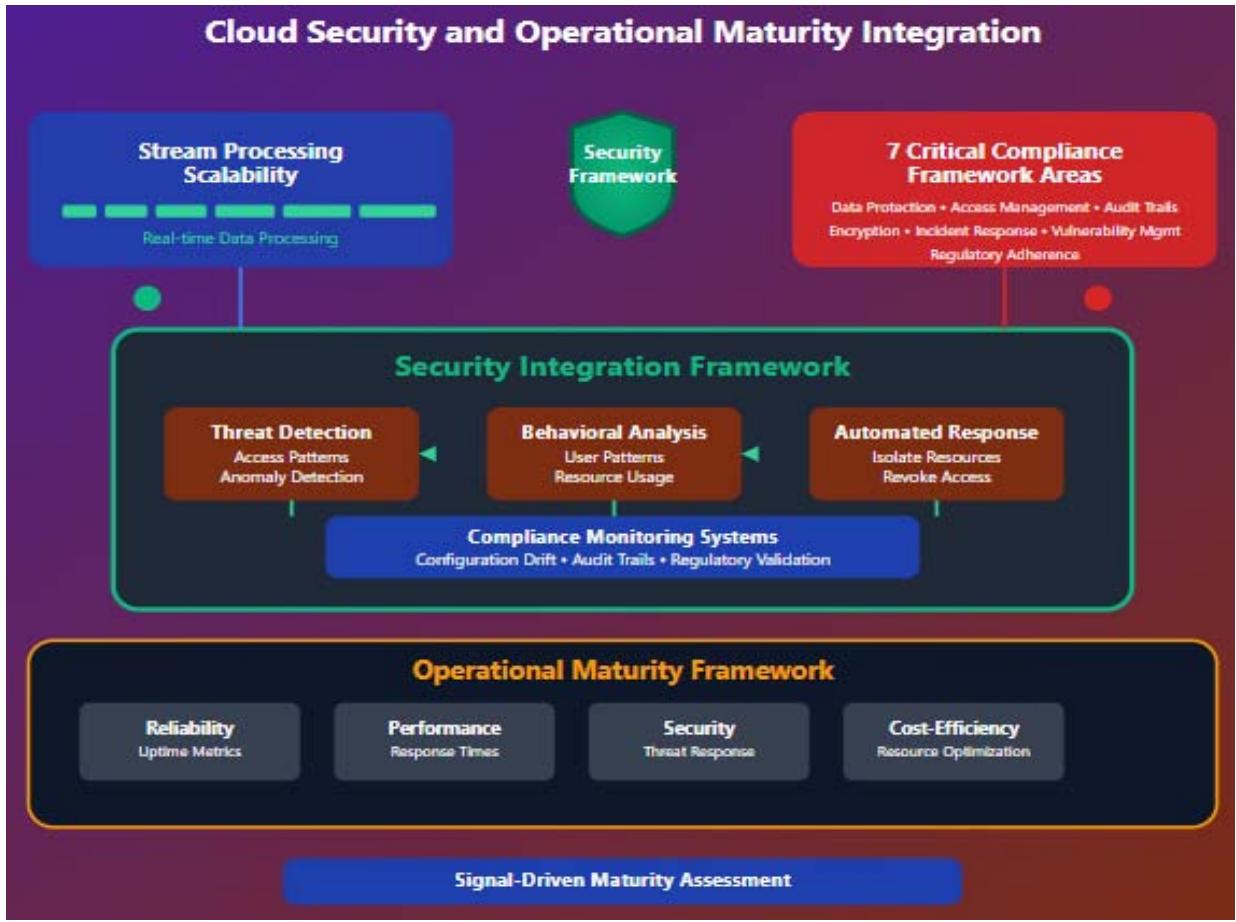

Signal-driven decision systems significantly impact cloud security posture and operational maturity by providing continuous monitoring and adaptive security measures. Stream processing scalability presents unique challenges and solutions for modern cloud architectures, particularly in environments where massive data volumes must be processed in real-time while maintaining security and compliance requirements [9]. The integration of security signals into optimization frameworks creates opportunities for proactive threat detection, automated incident response, and continuous compliance monitoring. Security-focused signals include access pattern analysis, vulnerability assessments, configuration drift detection, and threat intelligence correlation. Operational maturity modeling benefits from signal-driven approaches by establishing measurable criteria for assessing platform sophistication and identifying improvement opportunities. Maturity signals encompass automation levels, incident response times, change management effectiveness, and compliance adherence rates. The systematic collection and analysis of maturity-related signals enable organizations to track progress toward operational excellence and identify areas requiring additional investment. Compliance frameworks for cloud security have identified seven critical areas that cloud teams must address, including data protection, access management, audit trails, encryption standards, incident response procedures, vulnerability management, and regulatory adherence monitoring [10]. The security integration framework incorporates threat modeling techniques that identify potential attack vectors and establish monitoring requirements for detecting suspicious activities. Behavioral analysis algorithms analyze user access patterns, resource usage behaviors, and system interaction patterns to identify potential security anomalies. Automated response systems can detach compromised assets, annul questionable access credentials, and start incident response actions based on the analysis of security signals. The adoption of scalable stream processing frameworks allows for real-time assessment of security incidents while preserving the performance standards necessary for operational settings. Compliance monitoring systems utilize signal-based methods to guarantee adherence to regulatory standards and organizational policies. Configuration monitoring signals observe alterations in system configurations and verify adherence to defined baselines. Audit trail signals offer thorough logs of system operations to meet compliance reporting and forensic analysis requirements. Contemporary compliance frameworks encourage the use of advanced signal-processing capabilities that may promptly identify and address compliance issues, which hhIGHLIGHTs the necessity of automated compliance assessments and ongoing monitoring. The operational maturity framework establishes criteria for assessing platforms' efficacy in a number of domains, such as dependability, performance, security, and cost-effectiveness. Models of maturity progression outline the steps necessary to shift from reactive to proactive and predictive operational skills. Signal-based maturity evaluation offers unbiased metrics of operational efficiency and pinpoints precise areas needing enhancement. Sophisticated stream processing technologies allow organizations to meet the scalability and performance demands essential for deploying extensive security and compliance monitoring systems throughout expansive cloud environments.

Figure 5: Cloud Security and Operational Maturity Integration [9, 10]

## VI. IMPLEMENTATION STRATEGIES AND FUTURE DIRECTIONS

The effective execution of signal-driven decision systems necessitates thoughtful evaluation of architectural designs, choice of technology, and management of organizational change. Cloud resource management utilizing artificial intelligence and predictive analytics has shown significant enhancements in operational efficiency, with sophisticated implementations realizing cost decreases of up to $40\%$ while also boosting performance metrics and system dependability [11]. Technical challenges related to data gathering, processing, and decision-making must be addressed by implementation strategies, which must also guarantee compatibility with existing cloud infrastructure and operating procedures. Organizations can gradually integrate signal-driven functionality while minimizing disruptions to ongoing operations by using the incremental implementation technique. Selecting appropriate technology for signal collection, data processing, and decision-making is an example of technical implementation factors. Scalable solutions for handling massive signal streams are provided by stream processing frameworks such as Apache Kafka and Apache Flink. The development and application of sophisticated scoring models and decision-making algorithms are made easier by machine learning platforms. Incorporating existing cloud management tools guarantees smooth functionality within established operational processes. Feature selection using ensemble methods and optimization-focused deep learning strategies for attack detection in cloud computing environments has demonstrated significant effectiveness, with recent research showing enhancements in detection accuracy reaching as high as $95\%$ when advanced machine learning techniques are effectively applied [12]. Organizational factors include change management, skill enhancement, and cultural shifts necessary for the effective implementation of signal-driven strategies. Training initiatives should cover both technical abilities connected to signal analysis and practical skills involved in understanding and implementing optimization suggestions. Governance structures need to adapt to include signal-based decision-making while ensuring suitable supervision and control systems remain in place. Incorporating predictive analytics allows organizations to foresee resource needs and refine allocation methods prior to performance problems developing, leading to enhanced user experiences and lower operational expenses. Future research avenues involve enhancing the complexity of signal analysis algorithms, establishing standardized signal formats and protocols, and building interoperability frameworks for multi-cloud settings. The transition to edge computing and distributed cloud systems presents new challenges and opportunities for optimization driven by signals. Quantum computing technologies might ultimately facilitate more advanced signal processing and optimization algorithms. Sophisticated deep learning methods for detecting and preventing attacks are continually advancing, with ensemble-based strategies demonstrating notable potential for recognizing intricate security risks in cloud settings. The incorporation of artificial intelligence and machine learning technologies will keep enhancing the functionalities of signal-driven systems. Techniques in natural language processing could facilitate more user-friendly interfaces for engaging with optimization suggestions. Computer vision methods may examine visual depictions of system efficiency and detect trends not apparent in numerical information. The capabilities of predictive analytics will grow more advanced, allowing organizations to foresee and mitigate operational problems prior to their effect on system performance or user experiences. Industry standardization initiatives will enable the creation of portable signal-driven solutions capable of functioning across various cloud platforms and organizational settings. Open-source projects can speed up the use of signal-driven methods by offering reference models and platforms for collaborative development. The creation of industry standards and best practices will assist organizations in deploying effective signal-driven decision-making systems while adhering to security and compliance obligations.

## VII. CONCLUSION

The evolution of enterprise cloud infrastructure toward signal-driven decision architectures constitutes a transformative paradigm shift, transcending conventional reactive management practices to embrace advanced autonomous operational systems that fundamentally reconceptualize organizational excellence and strategic market positioning within contemporary technological ecosystems. Groundbreaking signal processing techniques, when harmoniously combined with next-generation machine learning innovations, empower organizations to achieve remarkable breakthroughs in performance optimization, security reinforcement, and financial efficiency through instantaneous analytical workflows and responsive adaptation mechanisms. The comprehensive structural framework incorporating signal taxonomy methodologies, algorithmic evaluation systems, control architecture designs, and security integration protocols establishes a formidable foundation for self-directed cloud operations capable of continuous metamorphosis in response to developing behavioral patterns and transforming operational prerequisites. Strategic deployment approaches emphasizing progressive implementation tactics ensure flawless integration with established technological infrastructure while dramatically minimizing operational disruptions throughout the complete transformation cycle. The synergistic convergence of artificial intelligence systems, predictive analytical frameworks, and stream processing technologies generates exceptional opportunities for organizations to foresee and eliminate operational obstacles before such challenges can detrimentally influence system performance or customer satisfaction metrics. Revolutionary technological innovations in edge computing architectures, quantum computational methodologies, and ensemble-based deep learning systems demonstrate tremendous potential for substantially amplifying signal processing capabilities and optimization accuracy across multifaceted heterogeneous cloud computing platforms. The comprehensive standardization of signal transmission protocols, data exchange formats, and multi-platform compatibility architectures considerably streamline the development of universally adaptable solutions capable of seamless functionality across numerous cloud platforms and diverse organizational frameworks. Organizations adopting signal-driven architectural methodologies strategically position themselves to accomplish exceptional operational maturity standards, enhanced security infrastructures, and enduring competitive advantages through intelligent automation frameworks that continuously mature and adjust to evolving business demands and progressively advancing technological landscapes.

Generating HTML Viewer...

References

11 Cites in Article

Sophie Cerf (2017). Adaptive Feed forward and Feedback Control for Cloud Services.

Aishah Siti (2024). Advanced Signal Processing Techniques for Real-Time Systems in Edge Computing.

K Sanjaya,Prasanta Panda,Jana (2018). Normalization-Based Task Scheduling Algorithms for Heterogeneous Multi-Cloud Environment.

Sadia Syed,Eid Albalawi (2024). Optimizing Cloud Resource Allocation with Machine Learning: A Comprehensive Approach to Efficiency and Performance.

Geeks Geeks (2024). Feedback Loops in Distributed Systems.

Srinivas Chippagiri (2025). A Study of Cloud Security Frameworks for Safeguarding Multi-Tenant Cloud Architectures.

Reynal Dsouza,Mehul Budasna (2024). What is the Cloud Maturity Model: Guide for Successful Cloud Adoption.

Muhammad Raza (2025). What is Automated Incident Response? Benefits, Processes, and Challenges Explained.

Ververica (2023). Stream Processing Scalability: Challenges and Solutions.

Case Arthur,Marium Yusuff (2023). AI and Predictive Analytics in Cloud Resource Management.

Susila Nagarajan,Daniel Jayapalan,Ponmary Devaraj (2025). Ensemble-based feature selection and optimization-driven deep learning for attack detection in cloud computing.

Explore published articles in an immersive Augmented Reality environment. Our platform converts research papers into interactive 3D books, allowing readers to view and interact with content using AR and VR compatible devices.

Your published article is automatically converted into a realistic 3D book. Flip through pages and read research papers in a more engaging and interactive format.

As more businesses adopt distributed architectures that require complex optimization techniques, enterprise cloud platforms encounter previously unanticipated challenges in maintaining optimal performance, security, and costeffectiveness. By employing real-time telemetry data, sophisticated machine learning methods, and responsive feedback loops to develop self-optimizing cloud operations, signal-driven decision systems represent a revolutionary approach. Signal classification taxonomies that distinguish performance measurements, resource usage indications, security issues, and user behavior patterns across time scales and data sources are included in the full framework. Algorithmic scoring models incorporate statistical analysis, ensemble methods, and security-aware normalization techniques to transform raw signal data into actionable optimization recommendations while maintaining multi-tenant isolation requirements. Control system architectures apply proportional-integral-derivative principles and adaptive feedback loops operating at multiple organizational levels, from immediate operational responses to strategic platform evolution decisions. The integration of security frameworks and operational maturity modeling enables ongoing monitoring, prompt threat identification, and automated incident management. Implementation strategies concentrate on techniques for a phased rollout that minimize operational disruptions and improve conformance with existing infrastructure. It is anticipated that contemporary technologies such as ensemble-based deep learning techniques, edge computing, and quantum processing would significantly improve signal processing capabilities and optimization accuracy. Businesses’ approaches to cloud governance and optimization are being drastically altered by unprecedented levels of automation and intelligence in cloud platform management, which are made possible by the integration of artificial intelligence, stream processing, and predictive analytics technologies.

Our website is actively being updated, and changes may occur frequently. Please clear your browser cache if needed. For feedback or error reporting, please email [email protected]

Thank you for connecting with us. We will respond to you shortly.