The COVID-19 pandemic has posed significant challenges to healthcare systems, particularly in the realm of medical imaging and the diagnosis of COVID-19 pneumonia lung lesions. Artificial intelligence (AI) has become essential in assisting radiologists by swiftly analyzing extensive volumes of computed tomography (CT) scan data to identify lung abnormalities. Radiologists, who typically conduct thorough examinations of CT scans, benefit from AI’s capability to pre-screen images, flag potential issues, and prioritize urgent cases, thereby enhancing efficiency during times of high demand. AI, especially through deep learning, can recognize subtle patterns in lung images that may be overlooked by human eyes, offering valuable second opinions and improving diagnostic accuracy and consistency.

## I. INTRODUCTION

The onset of the COVID-19 pandemic created unprecedented challenges across the globe, straining healthcare systems and demanding rapid, innovative responses. One area where the pandemic's impact was profoundly felt is medical imaging, specifically the detection and diagnosis of lung lesions associated with COVID-19 pneumonia. In such an environment, where timely and accurate diagnosis is of fundamental importance, artificial intelligence (AI) tools that support radiologists have emerged as a transformative development in medical diagnostics.

Artificial intelligence, with its capacity to process and analyze vast amounts of data in a very short time, offers a significant advantage in the timely detection of lung lesions. According to Coelho Filho (1), radiologists traditionally rely on computed tomography (CT) scans to observe lung abnormalities caused by COVID-19. However, examining these images meticulously demands considerable time and attention to detail. AI algorithms, trained on extensive datasets of lung images, can assist radiologists by prescreening these images, highlighting areas of concern, and prioritizing cases based on the severity of potential findings. This capability becomes essential in pandemic situations where the volume of imaging studies can overwhelm healthcare facilities. Moreover, even the most experienced radiologists can face difficulties in consistently identifying subtle patterns indicative of COVID-19-induced lung lesions. AI tools, particularly those leveraging deep learning techniques, excel in recognizing patterns that might not be identified by the human eye. They can provide a second opinion, corroborating radiologists' interpretations and potentially identifying lesions that might otherwise be missed. This not only increases diagnostic accuracy but also ensures a higher level of consistency across different cases and practitioners.

Early detection of lung lesions can greatly enhance patient outcomes, especially in the case of COVID-19, where timely intervention can prevent the disease from advancing to more severe stages. AI algorithms are able to identify initial signs of COVID-19-related lung involvement, which may be subtle or diffuse and thus easily missed in preliminary readings. By highlighting these early abnormalities, AI tools facilitate prompt clinical decisions, enabling earlier treatment initiation and potentially reducing the disease's impact. This timely intervention is crucial in preventing complications and improving patient prognosis.

Thus, the aim of this article is to present a scoping review of the literature currently available. The review follows the criteria described in the Methodology section, and its main objective is to systematically summarize the existing knowledge and, based on the publications available so far, to discuss the importance, advantages, and disadvantages of adopting Artificial Intelligence to support the reading of Computed Tomography images in the diagnosis of patients infected with COVID-19.

## II. THEORETICAL FRAMEWORK

To validate the extent to which the results obtained from applying Artificial Intelligence tools align with radiologists' diagnoses regarding the severity of a patient's COVID-19 condition, an extensive review of current literature was conducted, as discussed in section 4. To underscore the importance of this alignment, several key concepts will be highlighted.

To clarify the various fields of artificial intelligence, it is useful not only to present the main differences between the terms applied to AI but also, in order to give readers a common baseline in computational science, to compare this newer field with traditional computer programming. Historically, building a machine learning system required considerable human experience, expertise, and involvement so that the algorithm could detect patterns in raw data. Machine learning is distinct from other types of computer programming in that it transforms data inputs into relevant outputs using data-driven statistical rules that are automatically derived from a large set of examples, rather than being explicitly specified by humans. Deep learning algorithms, in turn, are systems in which a machine is fed raw data and develops its own representations necessary for pattern recognition. These representations are typically arranged sequentially and composed of a large number of operations.
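The contrast between an explicitly specified rule and a rule derived from examples can be sketched in a few lines. This is a minimal illustration only: the feature (`opacity_fraction`), the hand-picked threshold, and the labeled examples are all hypothetical and do not come from any study cited here.

```python
def handwritten_rule(opacity_fraction):
    """Traditional programming: a human specifies the threshold explicitly."""
    return opacity_fraction > 0.5  # hypothetical, hand-picked threshold


def learn_threshold(examples):
    """Machine learning (very simplified): derive the threshold from labeled data.

    `examples` is a list of (feature_value, label) pairs with boolean labels.
    Candidate thresholds are the midpoints between consecutive sorted values;
    the one with the highest training accuracy is returned.
    """
    values = sorted(value for value, _ in examples)
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def accuracy(threshold):
        hits = sum((value > threshold) == label for value, label in examples)
        return hits / len(examples)

    return max(candidates, key=accuracy)


# Hypothetical labeled examples: (feature value, abnormal?)
training = [(0.05, False), (0.10, False), (0.20, False),
            (0.30, True), (0.45, True), (0.70, True)]

learned = learn_threshold(training)
print(f"learned threshold: {learned:.2f}")
print("hand-written rule on 0.30:", handwritten_rule(0.30))
print("learned rule on 0.30:", 0.30 > learned)
```

On these toy examples the hand-written rule misses the case at 0.30, while the threshold derived from the data separates all six examples.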

Nagendran et al. (2) present Deep Learning as a subset of AI, defined as "computational models that are composed of multiple processing layers to learn data representations with multiple levels of abstraction". In practice, the main distinguishing feature between deep learning and traditional machine learning is that when the networks employed in deep learning are fed with raw data, they develop their own representations necessary for pattern recognition, without human intervention. In other words, the algorithm learns for itself which findings in an image are relevant to classification, rather than being told by humans which findings to use, as is the case in traditional Machine Learning (ML).

According to Esteva et al. (3), Deep Learning has become increasingly important, especially in the last 6 years, largely driven by increases in computational power and the availability of new datasets. This area of Artificial Intelligence has benefited heavily from major advances in the ability of machines to understand and manipulate data, including images, language, and speech. Medicine, in turn, has benefited immensely from developments in this area as well as from the increasing proliferation of medical devices and digital recording systems.

In this way, highly complex functions can be learned, and deep learning models scale to large datasets - in part due to their ability to run on specialized computing hardware - and continue to improve as more data becomes available, thus allowing this technique to outperform classical ML approaches.

Having said that, the aim of this article is to present a review of the scope of the literature currently available and based on the publications available so far, discuss the importance as well as the advantages and disadvantages of the adoption of Artificial Intelligence as a Clinical Decision Support tool over the Computed Tomography images in the diagnosis of patients infected with COVID-19.

## III. METHODOLOGY

The search was conducted in the second quarter of 2024 and used terms considered relevant by the authors to review the literature on AI applied to CT images as an aid in coping with the pandemic. All searches were run on PubMed, maintained by the National Center for Biotechnology Information (NCBI) at the National Library of Medicine (NLM): https://pubmed.ncbi.nlm.nih.gov/advanced/. The search period comprised articles published from 2020 to the second quarter of 2024.

1. First Search: The search for publications on "Artificial Intelligence in Healthcare" (without considering additional filters) returned 590 articles from the PubMed database, using the following syntax: Search: artificial intelligence in healthcare Filters: Systematic Review, in the last 5 years. (a)

2. Second Search: To refine the search, the keyword "Diagnosis" was added so as to extract, from the set previously obtained, only the articles concerning Artificial Intelligence applied to the diagnosis phase of the overall continuum of care. The syntax adopted was: Search: artificial intelligence in healthcare diagnosis Filters: Systematic Review, in the last 5 years. (b) This second search returned 262 articles which, like those of the first search, are presented in the graphs in the "Results" section.

3. Third Search: To contextualize the volume of published articles focusing specifically on Computed Tomography, the search was restricted further by adding the term "Computed Tomography", in order to extract from the selected bibliography the references that are the object of this article. This search returned 18 articles, which were analyzed and used as the basis of this work. The syntax was: Search: artificial intelligence in healthcare diagnosis "computed tomography" Filters: Systematic Review, in the last 5 years. (c)
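For readers who wish to repeat comparable searches programmatically, the sketch below builds request URLs for the NCBI E-utilities `esearch` endpoint. It is an approximation, not the procedure used in this review, which relied on the PubMed web interface: the filter expression `systematic[sb]` and the date bounds are assumptions about how the web filters "Systematic Review" and "in the last 5 years" might be expressed, and counts obtained this way may differ from those reported here. No network request is made; the code only constructs the URLs.

```python
from urllib.parse import urlencode

ESEARCH_URL = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"

# Keyword strings for searches (a), (b) and (c) described above.
SEARCHES = {
    "a": "artificial intelligence in healthcare",
    "b": "artificial intelligence in healthcare diagnosis",
    "c": 'artificial intelligence in healthcare diagnosis "computed tomography"',
}


def build_esearch_url(keywords, extra_filter="systematic[sb]",
                      min_year=2020, max_year=2024):
    """Return an esearch URL for `keywords`.

    `extra_filter`, `min_year` and `max_year` are assumptions standing in for
    the web-interface filters; adjust them to match the intended search.
    """
    params = {
        "db": "pubmed",
        "term": f"({keywords}) AND {extra_filter}",
        "datetype": "pdat",          # filter on publication date
        "mindate": str(min_year),
        "maxdate": str(max_year),
        "retmode": "json",
    }
    return f"{ESEARCH_URL}?{urlencode(params)}"


urls = {label: build_esearch_url(keywords) for label, keywords in SEARCHES.items()}
for label, url in urls.items():
    print(f"({label}) {url}")
```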

## IV. RESULTS

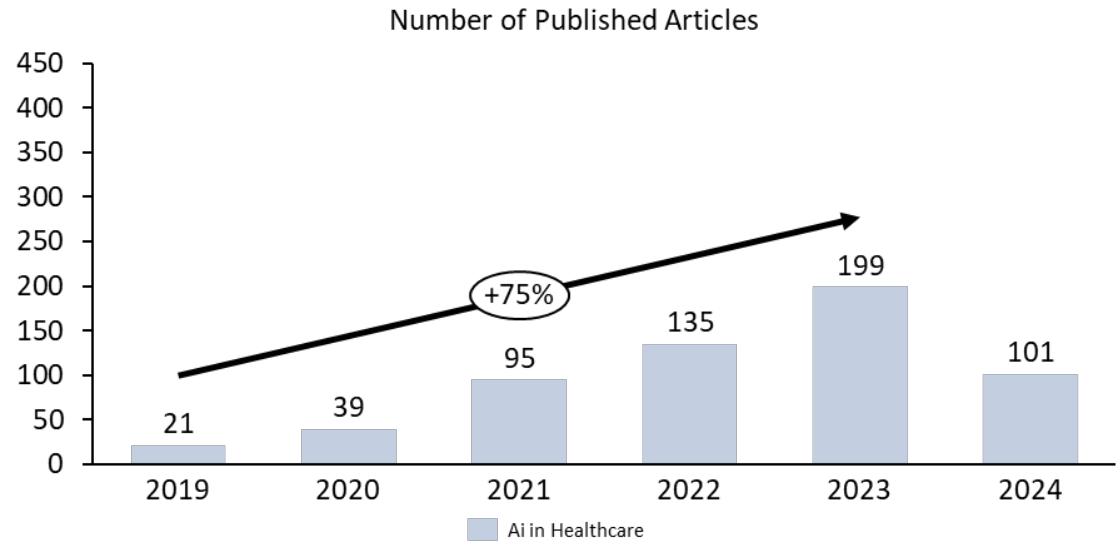

The results of the three searches described in the Methodology section are detailed below. The number of articles using the term "Artificial Intelligence" has grown steadily since 2020, as shown in Figure 1. Given that the survey was performed in the second quarter of 2024, when 101 articles had already been published, we can infer that the number of publications may reach around 240 by the end of 2024.
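The year-end estimate is consistent with a simple linear extrapolation of the count observed so far. The sketch below assumes that roughly five months of 2024 had elapsed at the time of the survey; this figure is an assumption, since the text states only that the survey took place in the second quarter.

```python
def project_annual_count(count_so_far, months_elapsed):
    """Linearly extrapolate a partial-year count to a full 12-month year."""
    if not 0 < months_elapsed <= 12:
        raise ValueError("months_elapsed must be in (0, 12]")
    return count_so_far * 12 / months_elapsed


# 101 articles observed; 5 elapsed months is an assumed value.
projected_2024 = project_annual_count(101, 5)
print(f"projected 2024 total: about {projected_2024:.0f} articles")
```

A different cut-off date changes the estimate noticeably (for instance, six elapsed months would give about 200), so the projection should be read as an order of magnitude rather than a forecast.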

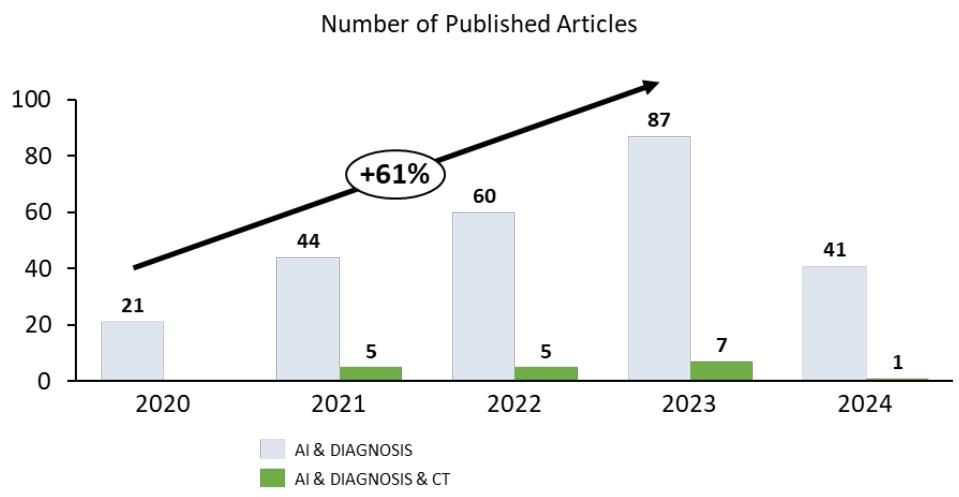

Because this technology has such broad application, refining the search with the term "Diagnosis" is expected to return a smaller number of articles. Even for this more specific application of Artificial Intelligence, however, the annual growth rate in the number of articles remains above $60\%$.

Finally, combining the term "Computed Tomography" with the previous parameters shows that the application of this technology specifically for COVID-19 saw a slight increase from 2021 to 2023. The very small number of publications returned by this last search was the main motivation for the authors to develop this work.

Figure 1: Number of articles published per year, according to search (a)

Source: Prepared by the authors

Figure 2: Number of articles published per year, according to searches (b) and (c)

Source: Prepared by the authors

## V. DISCUSSIONS

AI improves the lives of patients, physicians, and hospital managers by performing activities usually carried out by people, but in a fraction of the time and at a fraction of the expense. For example, AI assists physicians by evaluating vast amounts of healthcare data, such as electronic health records, symptom data, and physician reports, and making suggestions that improve health outcomes and may ultimately save the patient's life, as described by Liu et al. (4).

Nagendran et al. (2) define "deep learning" as a subset of AI where computational models, composed of multiple processing layers, learn representations of data with multiple levels of abstraction. In practice, the main distinguishing feature between convolutional neural networks (CNNs) in deep learning and traditional machine learning is that when CNNs are fed with raw data, they develop their own representations needed for pattern recognition; they do not require domain expertise to structure the data and design feature extractors. In plain language, the algorithm learns for itself the features of an image that are important for classification rather than being told by humans which features to use.

According to Esteva et al. (3), deep learning, which falls under the broader umbrella of machine learning, has experienced a significant revival lately. This resurgence is propelled by advancements in computing power and the emergence of extensive datasets. As a result, the capabilities of machines to analyze and interpret various forms of data, such as images, text, and audio, have advanced considerably. The healthcare industry stands to benefit significantly from deep learning, particularly because of the enormous volume of data it produces and the expanding integration of medical devices and electronic health record systems. Machine learning in this scenario goes beyond conventional computer programming because it converts the inputs of an algorithm into outputs by using statistical methods and rules that are learned from data, instead of being predefined by humans. Traditionally, building machine learning systems required expert knowledge and human intervention to craft feature extractors, which converted raw data into a format in which learning algorithms could detect patterns. Deep learning, however, offers a paradigm shift with its representation learning approach. In this approach, machines are given raw data and develop their own representations necessary for identifying patterns. This involves numerous layers of processing, typically in a sequence, with each layer performing many simple, nonlinear operations. The representation produced by one layer, starting with the input of raw data, is passed to the next, resulting in increasingly abstract representations.
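The layered processing described above can be illustrated with a minimal forward pass, in which each layer applies a weighted sum followed by a simple nonlinearity and hands its output to the next layer. This is a sketch only: the weights are fixed, hypothetical numbers rather than learned values, and `tanh` is just one possible choice of nonlinearity.

```python
import math


def dense_layer(inputs, weights, biases):
    """One layer: a weighted sum per unit, followed by a tanh nonlinearity."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(raw_input, layers):
    """Pass the raw input through each layer in sequence.

    Returns every intermediate representation, starting with the raw input.
    """
    representations = [raw_input]
    for weights, biases in layers:
        representations.append(dense_layer(representations[-1], weights, biases))
    return representations


# Hypothetical weights: 3 raw inputs -> 2 hidden units -> 1 output unit.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]

reps = forward([0.2, 0.7, 0.1], layers)
for depth, rep in enumerate(reps):
    print(f"representation at depth {depth}: {rep}")
```

In a real system the weights would be adjusted during training so that the deeper representations become useful for the task at hand.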

In this manner, highly complex functions can be learned. Deep learning models excel in handling large datasets, largely due to their ability to operate on dedicated processing hardware designed for complex computational tasks. They also improve their performance as they are fed with more data, often surpassing the capabilities of many traditional machine learning methods. Deep-learning systems can accept multiple data types as input, an aspect of particular relevance for heterogeneous healthcare data. The most common models are trained using supervised learning, in which datasets are composed of input data points (e.g., skin lesion images) and corresponding output data labels (e.g., 'benign' or 'malignant'). Reinforcement learning (RL), in which computational agents learn by trial and error or by expert demonstration, has progressed with the adoption of deep learning, achieving remarkable feats in areas such as game playing (e.g., Go). RL can be useful in healthcare whenever learning requires physician demonstration, for instance in learning to suture wounds for robotic-assisted surgery.

Some of the greatest successes of deep learning have been in the field of computer vision (CV). Computer Vision (CV) is dedicated to interpreting images and videos, engaging in functions like identifying objects, pinpointing their location, and delineating their boundaries. These capabilities are crucial in analyzing patient radiographs to detect the presence of malignant tumors. Convolutional neural networks (CNNs), a type of deep-learning algorithm designed to process data that exhibits natural spatial invariance (e.g., images, whose meanings do not change under translation), have grown to be central in this field. Medical imaging, for instance, can greatly benefit from recent advances in image classification and object detection.
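The spatial property that CNNs exploit can be shown with a single small filter: when a pattern moves within the image, the filter's response moves by the same amount, so one filter can detect the pattern wherever it appears. The sketch below uses a plain-Python cross-correlation (the operation CNN layers usually call convolution) on a hypothetical 6×6 binary image; the image, the 2×2 "blob" pattern, and the kernel are illustrative only.

```python
def correlate2d(image, kernel):
    """Valid-mode 2D cross-correlation of `image` with `kernel`."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def argmax2d(grid):
    """Return the (row, col) position of the largest value in `grid`."""
    positions = ((r, c) for r in range(len(grid)) for c in range(len(grid[0])))
    return max(positions, key=lambda rc: grid[rc[0]][rc[1]])


def place_blob(top, left, size=6):
    """Create a size x size image of zeros with a 2x2 block of ones."""
    image = [[0] * size for _ in range(size)]
    for i in range(2):
        for j in range(2):
            image[top + i][left + j] = 1
    return image


kernel = [[1, 1], [1, 1]]  # responds most strongly to a 2x2 block of ones

peak_a = argmax2d(correlate2d(place_blob(1, 1), kernel))
peak_b = argmax2d(correlate2d(place_blob(3, 2), kernel))
print("peak response, blob at (1, 1):", peak_a)
print("peak response, blob at (3, 2):", peak_b)
```

Moving the blob two rows down and one column right moves the peak response by exactly the same offset, with no change to the filter.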

Many studies have demonstrated promising results in complex diagnostics spanning dermatology, radiology, ophthalmology, and pathology. Deep-learning systems based on CNN methods have been very successful, especially in supporting physicians by offering second opinions and flagging areas of concern in patients' images. This is largely because CNNs have achieved human-level performance in object-classification tasks, in which a CNN learns to classify the object contained in an image. Training for a diagnostic task typically proceeds in two steps: in the first step, the algorithm leverages large amounts of data to learn the natural statistics in images (straight lines, curves, colorations, etc.), and in the second step, the higher-level layers of the algorithm are retrained to distinguish between diagnostic cases. Similarly, object detection and segmentation algorithms identify specific parts of an image that correspond to particular objects. CNN methods take image data as input and iteratively transform it through a series of convolutional and nonlinear operations until the original raw data matrix becomes a probability distribution over potential image classes (e.g., medical diagnostic cases).
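The final step, turning a network's raw output scores into a probability distribution over classes, is commonly done with the softmax function. The sketch below shows that step in isolation; the class names and scores are hypothetical and stand in for the output of the last layer of a classifier.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that are positive and sum to 1."""
    largest = max(scores)  # subtracting the maximum avoids overflow in exp()
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three hypothetical diagnostic classes.
class_names = ["class A", "class B", "class C"]
raw_scores = [2.0, 1.0, 0.1]

probabilities = softmax(raw_scores)
for name, p in zip(class_names, probabilities):
    print(f"{name}: {p:.3f}")
```

The ordering of the scores is preserved, so the class with the highest raw score also receives the highest probability.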

The question of how closely the answer given by an artificial intelligence algorithm matches that given by a group of experienced radiologists has been the subject of ongoing study. For example, Biebau et al. (5), in a study based on a dataset of 182 patients, identified a very strong correlation between the radiologists' analysis and the interpretation of the images by an artificial intelligence algorithm with regard to the severity of the lung lesions found in these patients. Along the same lines, Montazeri et al. (6) report in their systematic review, after analyzing more than 40 related studies, that the predictive performance measures of some AI tools showed a very high capacity to detect infection.

In turn, Lessmann et al. (7) compare the CO-RADS artificial intelligence system with the opinion of eight radiologists. In this study, the researchers evaluated the performance of the system on chest computed tomography images of patients with suspected COVID-19, distributing the results according to the CO-RADS classification system, which is described below.

The CO-RADS model (COVID-19 Reporting and Data System) seeks to identify and classify patients with pulmonary involvement with scores ranging from 1 (very low) to 5 (very high) depending on the type and number of pulmonary findings related to COVID.

Table 1: Tomographic findings and their classification according to the CO-RADS system

<table><tr><td colspan="3">CO-RADS classification for COVID diagnosis from CT images</td></tr><tr><td>Category</td><td>Level of suspicion for COVID</td><td>Description</td></tr><tr><td>0</td><td>Inconclusive</td><td>Technically inadequate image</td></tr><tr><td>1</td><td>Very low</td><td>Normal or uninfected</td></tr><tr><td>2</td><td>Low</td><td>Findings of other infections but not COVID</td></tr><tr><td>3</td><td>Probable</td><td>Features of COVID present, but with findings typical of other infections</td></tr><tr><td>4</td><td>High</td><td>Some COVID characteristics evidenced</td></tr><tr><td>5</td><td>Very high</td><td>Typical COVID features present</td></tr><tr><td>6</td><td>Confirmed</td><td>RT-PCR positive</td></tr></table>

Besides the CO-RADS classification, the percentage of lung parenchyma involvement was also considered in the comparative study between radiologists and the Artificial Intelligence tool. When comparing the distribution of the CO-RADS classifications between the algorithm and the radiologists, a very high degree of agreement was found between the two groups. Similarly, with regard to lung parenchymal involvement, the agreement between the two methods (human vs. machine) was equivalent to that described above, but the human observers, on average, indicated a greater volume of lung damage than the Artificial Intelligence tool. The explanation may be that the visual estimation of the amount of lung parenchyma affected is subjective, and some studies have shown that human readers tend to overestimate the extent of the disease.
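One common way to quantify agreement between two raters on categorical scores is Cohen's kappa, which corrects the observed agreement for the agreement expected by chance. The sketch below is illustrative only: the text does not state which agreement statistic the cited study used, and the two lists of CO-RADS scores are hypothetical values, not data from any study.

```python
from collections import Counter


def cohen_kappa(ratings_a, ratings_b):
    """Unweighted Cohen's kappa for two equal-length lists of categorical ratings."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(ratings_a)
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    freq_a, freq_b = Counter(ratings_a), Counter(ratings_b)
    # Chance agreement: probability both raters pick the same category independently.
    expected = sum(freq_a[k] * freq_b[k] for k in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical CO-RADS scores (1 to 5) for ten scans.
radiologist = [1, 2, 3, 4, 5, 5, 4, 1, 2, 5]
ai_system = [1, 2, 3, 4, 5, 4, 4, 1, 3, 5]

kappa = cohen_kappa(radiologist, ai_system)
print(f"Cohen's kappa: {kappa:.2f}")
```

Because CO-RADS categories are ordered, a weighted variant of kappa, which penalizes a disagreement of one category less than a disagreement of several, may be more appropriate in practice.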

Having established that some AI solutions can be a valuable tool to support radiologists in defining the degree of parenchymal involvement, it is worth noting other ways in which an AI suite may positively affect healthcare delivery, especially by supporting remote and collaborative diagnostics.

In a global health crisis, the ability to provide expert radiological services remotely proved to be of the utmost importance, and AI tools could facilitate tele-radiology by assisting radiologists operating in diverse and geographically dispersed locations. These tools offered preliminary analyses and diagnostic suggestions, which radiologists could then review and corroborate. This support enabled radiologists to extend their expertise to underserved regions, ensuring that even patients in remote areas received high-quality diagnostic care.

As cited by Tan et al. (9), in order to assist hospitals without chest radiologists and to help provide faster diagnosis, the Hospital das Clínicas Innovation Center in São Paulo, Brazil, in partnership with the private sector and the Brazilian federal government, created the RadVid-19 project. Through this platform, any hospital in Brazil was able to send its chest CT images to a server, where two AI algorithms returned a report with a COVID-19 probability analysis and the extent of the affected lung parenchyma. Operating on a 24/7 model, the facility delivered reports to the client hospital within 10 minutes, at no cost to the user.

Further, AI-enhanced radiology allows collaborative diagnostics. By integrating AI tools with cloud-based platforms, radiologists can share imaging data and AI-generated insights with colleagues around the world. This collaborative approach enables a more comprehensive diagnostic process, combining human expertise with advanced technological support to improve diagnostic accuracy and patient outcomes.

## VI. CONCLUSION

The COVID-19 pandemic has underscored the critical need for innovative solutions in healthcare. AI tools have proven to be invaluable allies for radiologists, enhancing their ability to detect lung lesions with speed and accuracy that were previously unattainable. By augmenting the capabilities of radiologists, AI ensures a more responsive and resilient healthcare system, better equipped to navigate the challenges of both current and future pandemics. As we continue to advance and refine these technologies, the symbiotic relationship between human expertise and artificial intelligence will undoubtedly pave the way for new milestones in medical diagnostics, ultimately saving lives and improving global health outcomes.

Nevertheless, alongside the many advantages AI offers in aiding radiologists, it's critical to address the ethical considerations and hurdles it presents. It's crucial to safeguard patient data privacy and security, establishing strict measures to secure confidential information, especially with the use of AI tools hosted on the cloud. Furthermore, the clarity and understandability of AI processes are key; radiologists need to comprehend the reasoning behind AI's decisions to build trust and utilize these tools efficiently.

The integration of AI tools into radiology does not mean replacing radiologists but rather augmenting their capabilities. AI can manage the routine, laborious duties, freeing radiologists to concentrate on intricate cases and to make more nuanced judgments. AI can act as a supportive second reviewer, catching potential oversights and thus providing an additional layer of assurance. This collaborative approach can improve diagnostic accuracy, efficiency, and ultimately patient outcomes.


References


1. M Coelho Filho, E Dias, G Cerri, M Dias, M Bego (2024). The importance of utilizing CT images at early stages for COVID-19 diagnosis - a Scoping Review.
2. Myura Nagendran, Yang Chen, Christopher Lovejoy, Anthony Gordon, Matthieu Komorowski, Hugh Harvey, Eric Topol, John Ioannidis, Gary Collins, Mahiben Maruthappu (2020). Artificial intelligence versus clinicians: systematic review of design, reporting standards, and claims of deep learning studies.
3. A Esteva, A Robicquet, B Ramsundar, V Kuleshov, M Depristo, K Chou (2019). A guide to deep learning in healthcare.
4. Yun Liu, Timo Kohlberger, Mohammad Norouzi, George Dahl, Jenny Smith, Arash Mohtashamian, Niels Olson, Lily Peng, Jason Hipp, Martin Stumpe (2019). Artificial Intelligence–Based Breast Cancer Nodal Metastasis Detection: Insights Into the Black Box for Pathologists.
5. Charlotte Biebau, Adriana Dubbeldam, Lesley Cockmartin, Walter Coudyze, Johan Coolen, Johny Verschakelen, Walter De Wever (2021). Comparing Visual Scoring of Lung Injury with a Quantifying AI-Based Scoring in Patients with COVID-19.
6. M Montazeri, R Zahedinasab, A Farahani, H Mohseni, F Ghasemian (2021). Machine learning models for image-based diagnosis and prognosis of COVID-19: Systematic review.
7. N Lessmann, C Sánchez, L Beenen, L Boulogne (2020). Radiology In A Minute: Automated Assessment of CO-RADS and Chest CT Severity Scores in Patients with Suspected COVID-19.
8. S Schalekamp, C Bleeker-Rovers, LFM Beenen, HME Quarles van Ufford, H Gietema, J Stöger (2021). Chest CT in the Emergency Department for Diagnosis of COVID-19 Pneumonia: Dutch Experience.
9. Bien Tan, N Dunnick, Afshin Gangi, Stacy Goergen, Zheng-Yu Jin, Emanuele Neri, Cesar Nomura, R Pitcher, Judy Yee, Umar Mahmood (2021). RSNA International Trends: A Global Perspective on the COVID-19 Pandemic and Radiology in Late 2020.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

Manuel Pereira Coelho Filho. 2026. “The Role of Artificial Intelligence in Supporting Radiologists in Detecting Lung Lesions Caused by COVID-19 – a Scoping Review”. Global Journal of Computer Science and Technology - D: Neural & AI GJCST-D Volume 24 (GJCST Volume 24 Issue D1).
