A phenomenologically correct formulation of a problem is more than half of its solution, since the solution that follows from it gives a rigorous description of the observed effects in the first approximation. Meanwhile, many modern theories describe basic effects using crude multiparameter models with corrections in a Landau small parameter. This is most clearly seen in magnetism, a purely relativistic effect that was assumed to depend linearly on the charge velocity and whose description, as a result, contains many phenomenological errors. This led not to an UNDERSTANDING of magnetism, but only to its formal mathematization, carried out in a non-rigorous manner. As a result, mathematical physicists, without a proper analysis of the classical description of magnetism, “concluded” that it has a quantum nature. Thus, by formally using Euler’s equations without a proper physical analysis, magnetism was incorporated into Maxwell’s equations. The resulting errors in its description were carried over into both the Theory of Relativity and Quantum Mechanics itself.

## I. EPIGRAPH

"It is very difficult to look for a black cat in a dark room when there is no cat there."

Confucius.

Archimedes said: "Give me a Fulcrum, and I will move the whole world." But he didn't say he needed a rope strong enough to support the weight of the entire world. And such a "Rope" is the quintessence of human thought: Science. But a strong rope is woven from strong, solid threads, not from tangled felt. After all, a searchlight with a conventional incandescent lamp, with its "entangled" photons, can only scan and diagnose objects a few kilometers away, whereas a laser beam "woven" from coherent, rather than entangled, photons has made it possible to diagnose (that is, to receive a reflected signal from) a corner reflector on the Moon. And this is only from Earth, whose atmosphere explodes when the beam is refocused.

But Science, by translating Mysticism and Miracles into a logically consistent Description of Effects, if it isn't itself lost, illuminates not just the cobblestones under the wheels on the road, but the Path to the Future!

But now Science itself is CONFUSED. Fragmentary, disjointed Descriptions of various Physical Effects, natural at the initial stage of Science's development, cannot serve as a "searchlight" into the Future. Moreover, the accumulation of contradictions in particular Descriptions results in Fundamental Problems. And the correct formulation of a Physical Problem is difficult and often takes much more time than presenting the Solution once it has been found. And as I've noted many times, at the current intensive stage of scientific development, artisanal researchers usually don't bother with Fundamental Problems, but focus on Local Regularities, which are not INVARIANT and often contradict Fundamental Laws. Moreover, the NORMAL chain of Fundamental Science-Applied Science-Technology-Money has undergone an inversion, and Fundamental Science has found itself on the sidelines, acting as a backdrop.

So Fundamental Science survives through a show of gigantomania, such as the construction of the Large Hadron Collider, and through pompous statements like "humanity lacks 42 orders of magnitude in energy to test my theory." Even the accomplished theorist Richard Feynman stooped so low as to classify only Elementary Particle Physics as Fundamental Science. True, he wrote a book, "The Character of Physical Law," as if to justify this. But "prominent" theorists tried to ignore it in order to continue constructing schizophrenic models mindlessly, unconcerned about their contradictions with the Basic Principles.

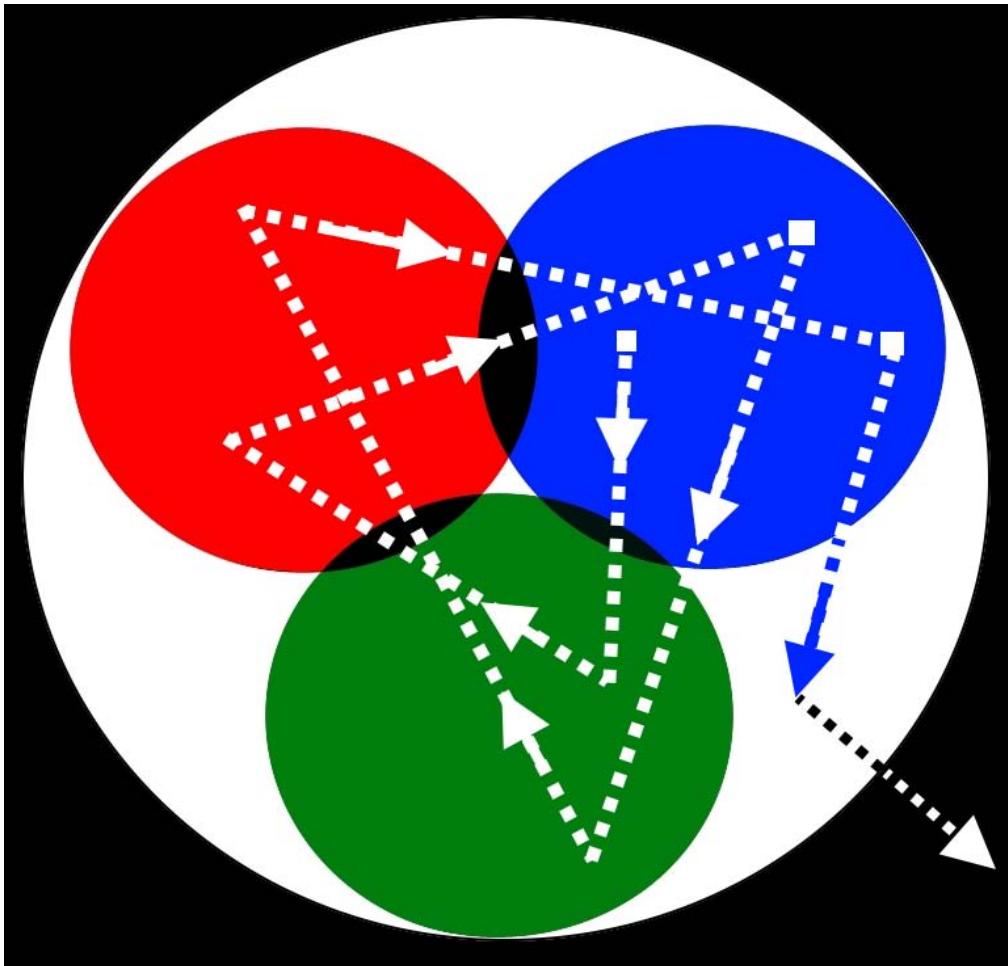

But without a Unified Phenomenology built on the foundation of Fundamental Laws and INVARIANTS, Physics, Production, Science in general, and Society as a whole found themselves like a traveler lost in a dense forest without a map. Wandering in search of Truth, sometimes in circles, led to a number of fundamental errors in the description of Nature and to the disunity of the phenomenologies of different sciences. And not only to the Aristotelian division into Physics and Non-Physics. Even in such strict Sciences as Mathematics and Physics, the disunity of Phenomenologies occurred even in their different sections (Fig. 1).

Fig. 1: Schematic representation in the form of colored circles of different Phenomenologies used to describe the Unified Phenomenology of Nature: intersections-contradictions are the dark fragments of the circles, phenomenological gaps are the white areas of the drawing, dotted lines are theories based on particular phenomenologies, the surrounding darkness is miracles and mysticism, filled, under the guise of Theories, by schizophrenic fantasies.

Furthermore, within the single-color phenomenological disks shown in Fig. 1 there are also partially overlapping "sub-disks," so that the single-color disks themselves are in reality pockmarked and riddled with holes like a sieve, and the theories—the dotted lines within each disk—are not straight lines at all, but rather noise tracks. And the Particular Phenomenologies themselves are not circles, but rather diverse fragments in a kaleidoscope.

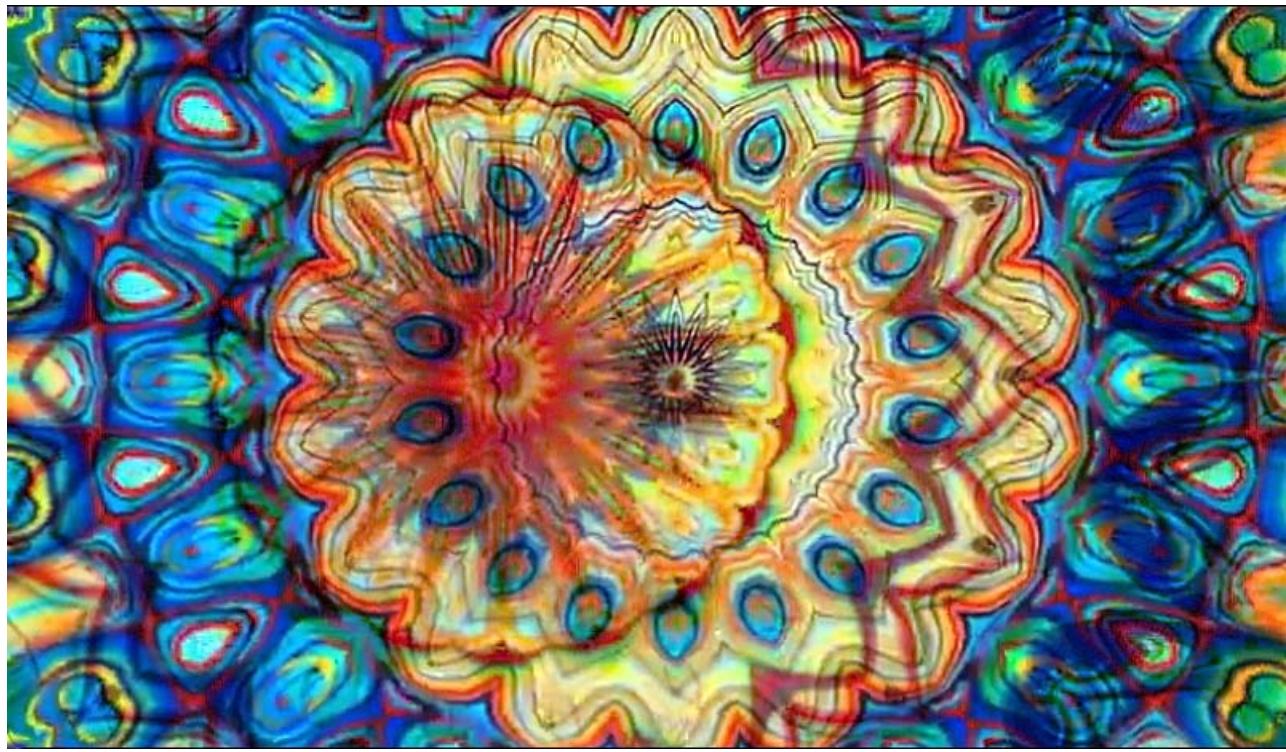

So, a complete modern phenomenological Description of Nature is more accurately depicted as a multicolored kaleidoscope with Particular Theories bouncing across its tiny fragments. Such an Eclectic Picture even has a symmetry that doesn't correspond to Nature, but rather to the shape of the fragments. Ultimately, instead of a Unified Description of Nature, we have intricate images reflecting the struggle between Chaos and Harmony in human Consciousness (Fig. 2).

Fig. 2: The random superposition of random kaleidoscope images gives only the illusion of "seeing" a Unified Picture of patterns in Nature

Thus, canonized, outdated Concepts imposed a Taboo on their elimination by professional scientists, and the "elimination" of the resulting "paradoxes" was shifted to amateurs.

But, paradoxically, the Taboo does not apply to the continuation of Particular Theories built on the basis of canonized Phenomenology without extending its generalization (into the white area of Fig. 1). Such Theories are already schizophrenic in themselves. But the Results obtained on their basis are used and perceived as "New" Concepts only because they follow (mindlessly) from the Formula. And from such schizophrenic "Cubes" modern nonsense is constructed, whether in Cosmology or in the "Quantum" microcosm.

The crisis of Fundamental Science has reached such a degree that its various branches are now spoken of as distinct, independent worlds. This is how it is portrayed, both as an independent Classical Picture of the World, and as an independent Quantum Picture of the World, and also as an independent Picture of the World of Relativity. But Nature is ONE, and these "different" Pictures are nothing more than Theories attempting to describe one side of the Unified Physical Picture of the World. And there are also dozens of "Alternative Concepts" unrecognized by official science, one of which, "New Physics," already fills dozens of volumes.

But contrasting them with each other is no different from contrasting the Theory of Numbers and Sets with Differential and Integral Calculus in Mathematics.

And if we continue this contrast, we can contrast Physics with Mathematics, and Biology with Life and Medicine.

Consequently, we are now witnessing Conceptual Madness in theories. Meanwhile, theories have become mere decoration, while in practice, bare empiricism is used.

And I, having initially trained as a mathematician, experienced this personally when I encountered the phenomenological problems of thermoelectricity, which even the highly educated artisans of science no longer considered science: it had become simply a set of rules for calculating thermoelectric devices, written by Ioffe and now immutable. I discovered these problems 40 years ago [1], but it took decades of precision experiments and scrupulous analysis to break down the wall that the classics erected between the associated effects and on which artisans still rely.

Thus, thermoelectricity, from the time of the classic works of Onsager [2] and Ioffe [3] to the present day, uses in its phenomenology a pair of canonical forces: an electrical one, determined by the potential gradient, and a thermal one, determined by the temperature gradient. Thermoemission, which arose from Thermoelectricity on the basis of the pioneering work of Andrei Ivanovich Anselm, "Vacuum Thermoelement" [4], departed phenomenologically from this pair of canonical thermodynamic Forces and uses its own Forces in its theories and calculations: a Thermal one, entropy production, and a Concentration one, the concentration gradient [5]. The theory of the p-n junction [6] was built on yet a third pair of phenomenological Forces: Electrical and Concentration. So, when a transistor operates, the temperature gradients arising in it are not taken into account in the theory. This determined the limitations of all three of these "special" Theories, which were revealed in the analysis of contact phenomena. And the unification of these three Phenomenologies made it possible to go beyond the description of diffusion processes alone [7, 8, 9] and to describe the Physics of the Missing Scale between Macroscopics and Quantum Theory, that is, to describe NANO-Effects [10].

Other Phenomenological Fallacies exist, and even regularly emerge, in Solid State Physics, and they have repeatedly led speculative scientists astray. Most recently this happened with "Graphene": graphenologists used the concept of inert gases at ultra-low temperatures to describe refractory, heat-resistant graphite [11, 12]. Phenomenological Fallacies have also led Medicine, which is built entirely on the MEMORIZATION of Particular Laws, into the beautiful but distant world of programming [13]. There is no Unified Functional Description of the Organism.
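The pairs of canonical forces contrasted above for thermoelectricity and for the p-n junction can be written schematically in their standard textbook form. This is only a sketch under stated assumptions: the symbols $L_{ij}$, $\mathbf{X}_e$, $\mathbf{X}_T$, $\mu_n$, and $D_n$ are introduced here for illustration and do not appear in the original, normalization factors such as $1/T$ are omitted, and the expressions show the canonical descriptions being criticized, not the unified phenomenology of [7-10].

```latex
% Thermoelectricity: electric and heat fluxes, linear in an electrical
% force (potential gradient) and a thermal force (temperature gradient).
\begin{aligned}
\mathbf{J}_e &= L_{11}\,\mathbf{X}_e + L_{12}\,\mathbf{X}_T, \\
\mathbf{J}_q &= L_{21}\,\mathbf{X}_e + L_{22}\,\mathbf{X}_T,
\qquad \mathbf{X}_e \propto -\nabla\varphi, \quad \mathbf{X}_T \propto -\nabla T, \\
L_{12} &= L_{21}
\quad \text{(Onsager reciprocity for properly conjugate fluxes and forces, no magnetic field)}.
\end{aligned}

% p-n junction theory: electron current driven by an electrical force
% (field E) and a concentration force (gradient of the density n);
% no temperature-gradient term appears.
\mathbf{J}_n = q\,\mu_n\,n\,\mathbf{E} + q\,D_n\,\nabla n
```

Written this way, the point of the paragraph is visible at a glance: each special theory keeps only two of the three forces (electrical, thermal, concentration) and drops the third.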

Whereas everything hinges on EVIDENCE. And without EVIDENCE, everything, including the officially recognized sections of Science, is nothing more than speculative fantasy that misrepresents Reality.

Numerous Phenomenological Fallacies have also entered Modern Quantum Theory. Schrödinger's mathematical random walks on the Complex Plane, made without understanding the MEANING of Imagination [14-19], led theoretical physicists to a Modern Quantum Theory that has turned into a set of incoherent, schizophrenic fantasies, divorced from Reality and in demand mainly by the tabloid press. After combing through the FOUNDATIONS of Quantization [20] and returning it to the Planck-Einstein Principles, I planned to quickly comb through the Foundations of the Theory of Relativity. But I discovered that its phenomenological wanderings are not at all a consequence of the Quantum Fallacies noted in my book, but a consequence of UNCERTAINTIES in the DEFINITION of Magnetism (which, naturally, also entered into Quantization). Thus, it turned out that the Magnetic Field, widely used in practice, does not even have a correct Definition. So the REDEFINITION of the Magnetic Field itself has already resulted in a series of articles [21-23]. This article on Magnetism is their continuation, but not the last.

And yet Magnetic Force has already found wide application, from efficient microelements for storing information in hard drives to giant solenoids in Tokamaks and at the LHC, and in magnetic levitation trains. But in practice, engineers often encounter insurmountable "theoretical" Limitations and use purely Empirical Laws. This is because, in UNDERSTANDING Magnetic Force, Physics has still not advanced far from the mystical Force of the Tao introduced by the ancient Chinese; it simply hides this MISUNDERSTANDING behind mathematical formulas and Quantization [24].

As I've already mentioned, we are currently in an Intensive Stage of Scientific Development, and without Artificial Intelligence (AI), even mentally grasping the entire flow of scientific information has become extremely difficult, much less analyzing it deeply. But AI is fundamentally no solution, since "DIGITAL" allows only approximate processing of analog information, while "DEAD DIGITAL" fundamentally cannot go beyond common knowledge. And it is unlikely that modern humans will be able to teach AI CREATIVITY. Moreover, as Elon Musk's Grokipedia, built with the help of Artificial Intelligence, shows, the center of gravity of AI "creativity" lies in the past, not in the groundbreaking pioneering works published in Open Access using Phenomenologies built on Fundamental Laws. After looking through the "Magnetism" section of Grokipedia, I saw that the only additional "benefit" of AI so far is that it has dispassionately demonstrated that the descriptions and theories of Magnetism canonized by humans merely demonstrate "How to Think WRONG" [25-28].

Meanwhile, real mathematicians themselves have concluded that the crisis of Theoretical Physics stems from Mathematics itself, which has, by and large, departed from Geometry. Mathematics has become focused on the manipulation of Abstract Terms through formulas, and Mathematical Physics has evolved solely from this branch of Mathematics.

## II. CONCLUSION

Further constructing the Description of the Magnetic Field, and correcting Maxwell's equations in the form hastily recast by Heaviside, the senior telegraph operator, required a more careful analysis of the Relativity of Ampère's Law, which was presented in the previous article. Laplace, with his Laplacian, which "came to hand" in Magnetism, proved to be simply a lucky find for introducing formal abstract Mathematics without a deep UNDERSTANDING of the effects discovered. Phenomenologically, the situation in Magnetism is similar to that in Quantum Mechanics, where formal Vector Spaces and Matrices stretched the Imaginary Solutions of the Schrödinger Equation onto the Real ones, and the physical Orthogonal (i.e., Imaginary) Term, determined by the Magnetic Field (i.e., Relativity), was omitted [29].

Phenomenology is nothing more than a CORRECT Description of an Effect, used to construct its Mathematics of First Approximation, i.e., a minimal set of equations that provide a CORRECT Description of Basic Experiments. In this regard, strictly speaking, there is no Phenomenology of Magnetism to date. What exists is a crude attempt to force the various manifestations of Magnetism into a set of abstract formulas. Tellingly, when Hertz, during his demonstration of the transmission of electromagnetic oscillations over a distance, was asked to explain how electromagnetic waves are formed and behave, he replied, "Ask Maxwell for an explanation." But the problem is that Maxwell's canonized equations do not CORRECTLY describe, even in first approximation, even the simplest (single) electromagnetic wave. In practice, even the simplest electromagnetic devices are calculated using empirical laws and by introducing a host of adjustable parameters into the "Theory" of Magnetism. This theory, as shown in my previous works, was itself initially based on the Coulomb and Ampère equations, which ignore relativism.

But without a deeper UNDERSTANDING of Magnetism, the limits already reached in both the generation and the use of a Magnetic Field cannot be surpassed. Nor can a consistent and universally CLEAR Electrodynamics, Quantum Mechanics, and Theory of Relativity be constructed. To construct a Unified, consistent Phenomenology of Magnetism, we must start from the very beginning. Moreover, in addition to the previously identified fundamental contradictions in the Fundamentals of Magnetism, there is another fundamental assumption, built into the definition of the Lorentz Force: that the Ampère and Oersted Forces are equal in magnitude!
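For reference, the canonical definition of the Lorentz Force mentioned above is usually written as follows. This is the standard textbook form in SI units, given here only as a reminder of what is being questioned; the symbols $q$, $\mathbf{v}$, $\mathbf{E}$, $\mathbf{B}$, $I$, and $d\boldsymbol{\ell}$ are the conventional ones and are not taken from the original, and the expressions are not the redefinition proposed in [21-23].

```latex
% Force on a point charge q moving with velocity v:
% the magnetic term is linear in the charge velocity.
\mathbf{F} = q\left(\mathbf{E} + \mathbf{v}\times\mathbf{B}\right)

% The same magnetic term summed over the carriers of a current I
% in a conductor element d\ell gives the Ampere force on that element.
d\mathbf{F} = I\,d\boldsymbol{\ell}\times\mathbf{B}
```

The linear dependence on $\mathbf{v}$ in the first expression is the assumption that the abstract singles out as the source of the phenomenological errors in the description of magnetism.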

For a virtually new construction of the Phenomenology of Magnetism, the Real Connection of these Forces will be analyzed in future work.

But for a Complete Definition of the Magnetic Field it is necessary to eliminate the CONTRADICTION with the Curie theorem, which prohibits in principle its EXISTENCE.


References


S Ordin (1997). Optimization of operating conditions of thermocouples allowing for nonlinearity of temperature distribution.

Lars Onsager (1931). Reciprocal Relations in Irreversible Processes. I.

A Anselm (1951). The Thermionic Vacuum Thermocouple.

L Stilbans (1957). The thermo-electric phenomena.

Boris Moizhes (1973). Effectiveness of Low-Temperature Thermionic Converters for Topping the Rankine Cycle.

S Sze (1981). Physics of Semiconductor Devices.

S Ordin (1997). Optimization of the operating condition of thermocouples.

S Ordin (1997). XVI ICT '97. Proceedings ICT'97. 16th International Conference on Thermoelectrics (Cat. No.97TH8291).

S Ordin (2017). Refinement and Supplement of Phenomenology of Thermoelectricity.

Stanislav Ordin (1029). Physical Bases of Nano.

Stanislav Ordin (2025). Anti-Graphene.

Stanislav Ordin (2025). Quantization Essence.

Stanislav Ordin (2021). Non-Elementary ELEMENTARY Harmonic Oscillator.

Stanislav Ordin (2018). Quasinuclear Foundation for the Expansion of Quantum Mechanics.

Stanislav Ordin Gaps and Errors of the Schrödinger Equation.

Stanislav Ordin (2022). Imagination and real quantization.

Stanislav Ordin (2024). Non-Schroedinger Orbitals.

Stanislav Ordin Comprehensive Analysis of the ELEMENTARY Oscillator.

Stanislav Ordin (2024). Mini Review, Elementary Physics of Magnetic Field (monopolies).

Stanislav Ordin (2024). Reasons for Redefining the Magnetic Field.

S Ordin (2024). Foundations of Magnetic Field Definition.

Daniel C. Mattis (1965). The Theory of Magnetism (An Introduction to the Study of Cooperative Phenomena).

Stanislav Ordin (2025). Absoluteness and Relativity of the Coulomb Force.

(0005). Unknown Title.

Stanislav Ordin (2018). Quantization Essence.

Stanislav Ordin (2023). Introduction to Thermo-Photo-Electronics.

S Ordin (2024). Local Thermo-EMF and Nano-Limits of Efficiency.

Stanislav Ordin (2024). Foundations of Quantization.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

Dr. Stanislav V. Ordin. 2026. “Unraveling Phenomenological Misconceptions and Magnetism”. Global Journal of Science Frontier Research - A: Physics & Space Science GJSFR-A Volume 25 (GJSFR Volume 25 Issue A6): .
