The integration of AI into medicine is automating parts of clinical decision-making that were previously inconceivable to automate and is opening new possibilities in diagnosis and patient care. Since AI first entered the healthcare system, it has raised complex ethical and legal questions that must be addressed to guarantee patient safety, equity, and responsible care. This paper identifies the ethical and legal issues surrounding AI decision-making in healthcare and underscores the need for more robust accountability structures. It outlines the primary ethical concerns, including algorithmic bias, the need for transparency, patient agency, and the legal requirements of consent, and considers their effect on the relationship between the healthcare system and the patient. It further examines the accountability issues that arise when AI decisions adversely affect individuals, and the existing models for allocating accountability among developers, healthcare institutions, and even patients. Drawing on case studies of AI use in healthcare, it examines the GDPR, HIPAA, and more recent legislation such as the EU’s AI Act from a legal perspective. The paper offers recommendations that include ethical frameworks and guidelines for AI, a focus on AI explainability, and stronger, healthcare-specific regulation of responsible AI.

## I. INTRODUCTION

The integration of artificial and human intelligence is most striking in the field of medicine. Within the past few decades, artificial intelligence has gone from an unfeasible prospect to a technology poised to fundamentally reshape clinical medicine. From clinical diagnostic algorithms that analyze vast troves of medical imaging, to electronic medical record systems that use predictive analytics to "predict" patient outcomes, to digital assistants that chat with patients and "call" to follow up with them, AI is spreading rapidly through healthcare systems worldwide. Its ability to augment human clinical decision-making, so that clinicians can offer more accurate, more efficient, and better tailored services to patients, is increasingly appreciated. Yet as these AI systems become more capable and more influential in the clinical space, nuanced ethical and legal questions are being raised.

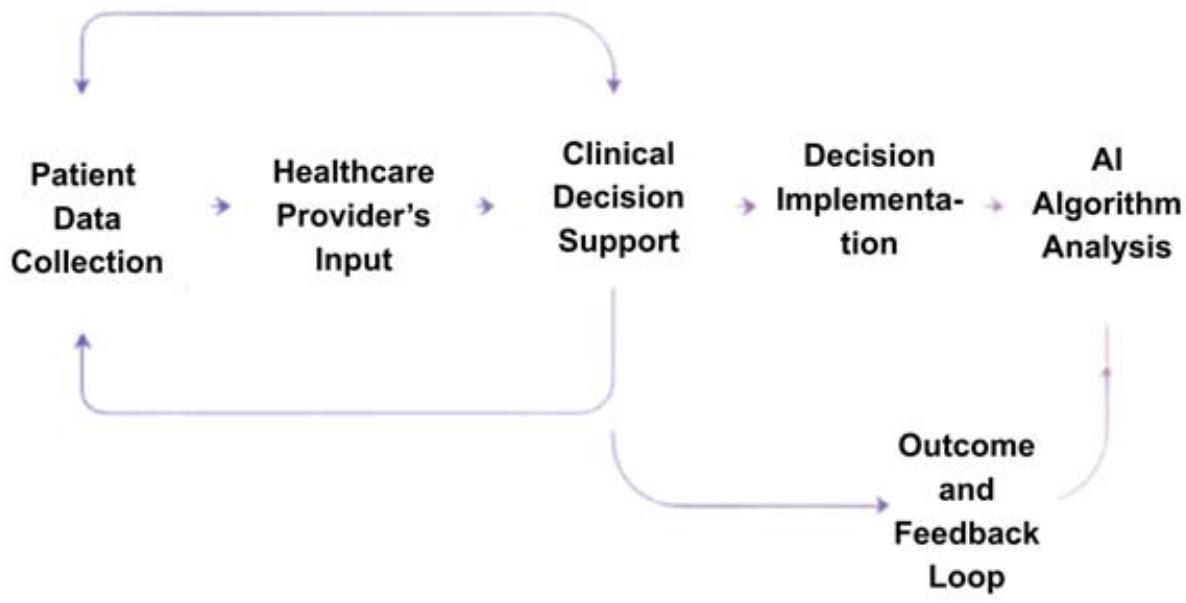

AI-supported clinical decision-making is another leap in the evolution of medicine, and it brings a critical dilemma raised by many lawyers: who, if anyone, is responsible when an AI system processes data and makes a decision that affects patient wellbeing? In conventional care the answer is clear. The caregiver prescribes and implements care and bears sole responsibility for it. When care hinges on AI technology, the picture is less clear, because the AI does not reach its decision in a way that a human could rationally explain or describe. These are the "black-box" scenarios that AI systems create. The lack of sight into such a system is a serious ethical obstacle, and it applies even where the system produces no recommendation, since no decision is itself a decision. Whatever its capabilities, AI must operate within a legal framework. As AI is woven into the fabric of present-day medicine, urgent legal and ethical questions about these systems await answers, and they are social questions as well.

In this respect, this study focuses on determining the ethical and legal responsibilities that arise in clinical care when AI takes a decision-making role. The research examines problems at the intersection of AI in healthcare with patients' rights to autonomy, informed consent, and equity, and with the legal obligations of AI developers, physicians, and healthcare establishments. It also analyzes the shortcomings of existing systems and suggests ways to align the use of AI with legal provisions formulated to provide patients with care that is ethical and reasonable.

This research is both timely and necessary. Because AI now influences clinical judgments, its ethical and legal aspects are crucial not only to effective governance but also to fostering public confidence in AI systems. These goals, along with the safeguarding of patients' legal and ethical rights, can be defended through effective rational discourse. Meeting them requires realigning existing approaches to governance, obligation, and responsibility so that healthcare AI can be applied ethically in clinical care.

The paper is structured as follows: the literature review chapter on the application of AI in healthcare outlines issues with ethics and the rule of law and the existing accountability frameworks. The paper then addresses the specific implementation challenges of AI for clinical decision-making and offers recommendations for better accountability. Finally, the study examines the proposed methods for enhancing transparency, fairness, and accountability of AI in healthcare. This approach should help determine the ethical and legal boundaries of using AI in clinical medicine.

## II. LITERATURE REVIEW

The partnership between AI and the medical sciences has long since left the confines of a clinical niche. Healthcare has been, and continues to be, one of the most transformative application domains for AI across the entire value chain of care delivery. Its evolution from a theoretical proposition to a commonplace of daily practice attests to how deeply it has taken root. Today, with the ultimate objective of improving the precision, efficiency, and personalization of healthcare delivery, AI is used clinically for a wide range of purposes, from sophisticated diagnostic and prognostic frameworks to systems that assist physicians in treatment, monitoring, and follow-up. For example, AI in medical imaging has allowed complex radiographs to be analyzed for diseases such as cancer or heart disease with accuracy that approaches, and in some cases exceeds, that of human experts (Gupta, 2025). Additionally, predictive analytics allows algorithms to anticipate when patients in critical care will deteriorate so that appropriate action can be taken (Pham, 2025). One of the most advanced forms of healthcare AI is AI-assisted surgery, in which robotic precision streamlines complex procedures that are otherwise prone to human error (Fahad et al., 2024). These instances demonstrate both the transformative potential of AI and the ethical and legal issues that some of its applications pose, which need to be resolved.

As AI systems advance and become more embedded in clinical decision-making, more ethical issues arise. One of the most relevant is the bias that an algorithm may absorb from the data on which it was trained.

Algorithmic bias is especially worrying in healthcare. AI systems are trained on large datasets, so a system trained on biased data may carry that bias into clinical decision-making and discriminate against patients, particularly those from marginalized groups (Smith, 2021). For instance, a 2019 study reported that AI systems widely used in healthcare were less likely to diagnose diseases in Black patients than in White patients, producing disparities in treatment recommendations (Grote & Berens, 2019). Such biases can lead to misdiagnosis, to incorrect treatment, and ultimately to harm to vulnerable populations. AI systems must therefore be designed and used so that these biases are minimized and the care rendered is equitable for all patients.
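One way to make the disparity described above measurable is to compare a diagnostic model's sensitivity (the share of truly diseased patients it flags) across patient groups. The following is a minimal sketch under stated assumptions, not a method taken from the cited studies: the record layout, the group labels, the function names `sensitivity_by_group` and `sensitivity_gap`, and the data are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return {group: sensitivity} computed over patients who truly have the disease.

    Each record is a (group, has_disease, model_flagged) tuple (hypothetical layout).
    """
    positives = defaultdict(int)  # diseased patients per group
    detected = defaultdict(int)   # diseased patients the model flagged
    for group, has_disease, model_flagged in records:
        if has_disease:
            positives[group] += 1
            if model_flagged:
                detected[group] += 1
    return {group: detected[group] / positives[group] for group in positives}


def sensitivity_gap(rates):
    """Largest difference in sensitivity between any two groups."""
    return max(rates.values()) - min(rates.values())


# Made-up illustrative records, not real patient data.
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, True), ("group_a", True, False),
    ("group_a", False, False),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", True, False), ("group_b", True, True),
    ("group_b", False, True),
]

rates = sensitivity_by_group(records)
gap = sensitivity_gap(rates)
print(rates)
print(f"sensitivity gap: {gap:.2f}")
```

A large gap does not by itself establish discrimination, but it indicates where a system should be examined before it informs treatment decisions. Sensitivity is only one possible fairness measure; others may be more appropriate depending on the clinical setting.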

The consequences of these biases reach beyond the individual patient. Because they fall hardest on patients in more diverse and already underserved communities (Smith, 2021; Grote & Berens, 2019), misdiagnosis, disruptive treatment, and direct harm to the most defenseless members of society become social problems as well as clinical ones. These patients need to be prioritized in the discussion, since we owe it to them to ensure that AI systems help rather than hinder access to care.

The transparency and explainability of AI systems is also of paramount importance. As systems become more elaborate, especially where they save cost and time in complex and crucial clinical assessments, more attention must be paid to how clearly their reasoning can be followed, because reasoning that cannot be followed undermines the trust on which their use in medicine depends. The effects are not confined to individual patients. Opaque systems and the policies built around them also disadvantage marginalized populations (Smith, 2021). As an illustration, one 2019 study found AI models employed in healthcare to be less diagnostically accurate for Black patients than for White patients (Grote & Berens, 2019). The concern, however, is not only the trustworthiness of the AI but also the ethical responsibility to let patients understand how decisions regarding their care are reached. To address these concerns, there is growing interest in explainable AI (XAI), which aims to give users greater understanding of, and control over, AI systems without sacrificing performance (Jenko et al., 2025). Clinicians have an ethical obligation to inform patients of the extent to which AI supports their care and to obtain formal permission, and so the ethical issue of informed consent arises.

In patient care, the importance of respect for autonomy and consent is self-evident. The use of AI in clinical decision-making raises questions about patient autonomy, because it departs from the conventional model of healthcare in which patients make decisions with the assistance of providers after weighing the information. The concern is that, when AI is used in diagnosis and decision-making, patients may understand neither the role AI plays in their care nor the decisions made about it. It is vitally important that patients remain at the center of decision-making, with AI assisting rather than replacing human judgment. This highlights the importance of informed consent in which patients are not only informed but also educated about how AI affects their treatment.

As the integration of AI into clinical settings deepens, the issue of accountability becomes more pressing. If an AI system reaches a wrong diagnosis, or a patient suffers an adverse reaction to a treatment it recommended, who is responsible for the system, and how can the reasoning behind its decision be explained? The answers lie along a continuum of accountability that involves multiple components and parties. Liability will sometimes have to be directed to the developers of the AI system, when the system malfunctioned or was poorly designed. Responsibility also falls on healthcare providers and institutions when systems are not properly implemented or when ethical and legal standards are not adhered to (Smith, 2021). Where AI systems make mistakes because of faulty algorithms or erroneous data, it remains undetermined whether the developers, the healthcare systems, or both bear responsibility. This gray area in legal responsibility greatly undermines the ethical implementation of AI in healthcare.

As in other areas, the law governing AI in healthcare is still a work in progress, and laws and regulations have yet to catch up with the speed at which AI is developing. In the European Union, the General Data Protection Regulation (GDPR) provides a legal framework for data privacy and patient consent, yet it says little about the legal implications of AI (European Commission, 2018). In the United States, laws such as HIPAA (the Health Insurance Portability and Accountability Act) safeguard patient privacy but do little, if anything, to address responsibility for AI systems. The need for legal frameworks that deal with the specific issues AI raises in healthcare is rapidly becoming more pressing. These frameworks would need to address the accountability gap that AI systems are creating and, more importantly, the protective measures needed to guard patients from the adverse effects of AI in healthcare (Gerke et al., 2020).

Table 1: Key Ethical Concerns and Legal Frameworks in AI Healthcare Applications

<table><tr><td>Ethical Concerns</td><td>Legal Frameworks</td><td>Key References</td></tr><tr><td>Informed Consent</td><td>Data Privacy (HIPAA, GDPR)</td><td>Grote & Berens (2019)</td></tr><tr><td>Algorithmic Transparency and Explainability</td><td>Liability for AI errors (FDA, EU AI Act)</td><td>Smith (2021), Pham (2025)</td></tr><tr><td>Bias and Fairness</td><td>Regulatory Oversight</td><td>Nasir et al. (2025), Gupta (2025)</td></tr><tr><td>Patient Autonomy</td><td>Legal Accountability in Healthcare</td><td>Yadav Kasula (2021)</td></tr></table>

As of October 2023, the use of AI in many spheres of life, and in nursing and patient care in particular, has produced remarkable breakthroughs and will continue to do so. However, the shifts that AI has brought to diagnosis, treatment planning, and patient monitoring have disproportionately benefited some groups in the healthcare ecosystem and unfairly stigmatized others. This points to the need for policies and regulations that ensure AI is used in healthcare responsibly and across borders.

AI-driven technologies in clinical decision-making are associated with a range of potential problems, including bias, limited transparency, threats to autonomy, and unclear accountability, each of which has ethical and legal dimensions. All of these problems need to be resolved in order to protect the rights of clinicians while they discharge their clinical responsibilities.

This literature review has centered on the integration of AI in healthcare from several angles: its possible applications, its ethical problems, and its legal complications. Bringing AI into healthcare, however, depends on collaboration among patients, policymakers, developers, and healthcare professionals.

## III. ETHICAL AND LEGAL CHALLENGES IN CLINICAL AI

As of October 2023, AI in integrated healthcare has made advances in clinical decision-making, including diagnosis, treatment planning, and patient monitoring. New AI programs are becoming ubiquitous in new settings, however, and they bring a new set of ethical and legal considerations. One relatively new area of concern is what becomes of the specialist's patient-centered approach as technological innovations enter the clinic. Worries are growing that the use of AI in the clinic may displace human contribution and alter the physician-patient dynamic, and about the clinician's obligation to ensure that AI use does not undermine the patient's right to self-determination and self-governance. More critically, as AI begins to take over decisions that were previously made by a clinician, the question of whether it is ethical for an AI to make choices in the clinician's place becomes relevant. In what situations would it be ethically justifiable to allow an AI that is not controlled by the clinician to make a decision, and in what situations must the clinician intervene in an AI-controlled scenario? This is especially relevant in critical care.

On the positive side, AI systems are becoming more sophisticated in the predictions and algorithms they bring to healthcare, especially in decision support, and they do more and more to plan strategies for complex care. For instance, AI algorithms are now a primary means by which medical practitioners scan patient images to detect and diagnose early-stage cancers and related disorders, such as neurological and cardiovascular conditions (Gupta, 2025). Although AI is very good at processing all kinds of data, clinicians still face the ethical problem of relying on a model that is accurate but does not really "understand" the context in which a patient lives. In some situations AI decisions run counter to the principal strategy of healthcare practice, which is patient-centered. AI systems are usually programmed to apply algorithms and follow an "evidence-based" model of care regardless of a patient's value system, wishes, or the broader culture surrounding the individual (Fahad et al., 2024). This creates a gap between technological effectiveness and the fundamental ethical requirement of respecting the patient's identity, and it can erode individual autonomy. Care professionals may be put in difficult situations in which the AI's output outperforms existing practice, yet the decisions it arrives at do not consider the patient holistically.

Another ethical issue in AI healthcare is responsibility and duty of care. When adverse patient outcomes result from decisions made by an AI system, the question of who is legally at fault becomes unclear. The healthcare model, in almost all its forms, assigns fault to the healthcare practitioner in the event of a medical mistake. With AI, assigning responsibility is more difficult, because fault could be placed on the creators of the AI or on the healthcare practitioners, depending on the standards set by the healthcare institution (Smith, 2021). Suppose an AI system causes harm by misdiagnosing a patient: who is liable, the algorithm's creator or the healthcare institution that failed to supervise the system? Matters become more difficult still when someone has to understand and unpack an AI system's black-box model (Nasir et al., 2025). The lack of accountability weighs most heavily on those on the receiving end of the AI system, mainly because of the ambiguity surrounding an error.

Table 2: Key Ethical Dilemmas in AI Decision-Making

<table><tr><td>Ethical Dilemma</td><td>Description</td><td>Examples</td></tr><tr><td>Informed Consent</td><td>The challenge of explaining AI-driven decisions to patients</td><td>AI in diagnostic imaging</td></tr><tr><td>Bias and Fairness</td><td>The potential for bias in AI algorithms affecting outcomes</td><td>Disparities in diagnostic accuracy</td></tr><tr><td>Transparency and Explainability</td><td>Lack of transparency in AI decision-making processes</td><td>"Black-box" nature of deep learning models</td></tr><tr><td>Accountability and Responsibility</td><td>Who is accountable for AI errors in clinical care?</td><td>Misdiagnosis due to AI's recommendation</td></tr></table>

When AI systems are used in healthcare, who is liable when something goes wrong? Placing AI systems in the hands of service providers creates a puzzle that challenges their ethical principles. As AI technology improves, one of the most critical issues in the domain is responsibility for the accuracy, safety, and overall performance of an AI technology, and whether that responsibility lies with the developer or with the institution that uses and monitors it (Gerke et al., 2020). There are as yet no overarching regulations that offer clear directives on this question. For example, when an AI system leads to an incorrect diagnosis or treatment, it may be impossible to attribute the error clearly either to the AI itself or to the healthcare professionals who implemented it, operated it, or interpreted its output. Without clear attribution, the legal and ethical repercussions are troubling, particularly in cases of malpractice or harm to a patient.

The use of AI in healthcare is still in its early stages, and a great deal of progress remains to be made. Regulations such as the General Data Protection Regulation (GDPR) in the EU and the Health Insurance Portability and Accountability Act (HIPAA) in the US work to ensure the privacy and security of sensitive patient records when AI technology is used (European Commission, 2018). Nevertheless, legal frameworks for clinical AI decision-making are still lacking, and further ethical issues have yet to be addressed. The extent to which the GDPR requires that data subjects be informed, fairly and without undue delay, about how their personal data are processed and used by AI systems remains under debate, because in its present formulation the Regulation does not address the accountability of AI systems or what should happen when such systems operate with fundamental flaws (European Commission, 2018). In like manner, the HIPAA Privacy Rule and subsequently enacted HIPAA regulations spell out minimum standards for safeguarding patient data but fall short of addressing the broader question of how AI systems should be managed in the practice of medicine. The more embedded AI systems become in standard practice and workflows, the more the regulatory frameworks, which so far have been mainly structurally descriptive, need to become functional and responsive to these issues. Newer initiatives, such as the AI Act in the European Union, aim to extend the regulation of AI to primary areas of concern such as user safety, the safety of the AI itself, transparency, and accountability (Schmidt et al., 2024). The problem is that these regulations are still immature, and AI in healthcare remains a contested issue, with considerable controversy over how such regulations can both encourage beneficial advances and protect the patient.

AI technologies have advanced in leaps and bounds in the recent past, yet in healthcare many of their applications have fallen short because certain ethical and legal matters have been ignored, with negative effects. A particularly alarming instance is that of biased AI systems. In a 2019 report, an AI system was found to systematically under-refer Black patients for additional care in comparison with White patients, despite their having equal or greater health needs (Smith, 2021). The cause was historically biased data reflecting inequities in access to care and in health outcomes. This example demonstrates the potential for AI in its current state to reinforce inequities in the healthcare system, and the need for developers to address bias in training datasets. Another example is the use of AI in diagnostic tools. A faulty AI algorithm can lead to a misdiagnosis, and the consequences of such a failure could be life-threatening. There are cases of patients suing healthcare providers because, in their view, AI-assisted diagnostic tools were the reason for prolonged treatment, wrongful diagnosis, or triage errors that human input could have avoided. Such cases are a point of focus for the legal literature on AI in healthcare, especially on the question of who is liable when things go wrong (Gerke et al., 2020).

In other cases, failure to obtain informed consent results in ethical and legal problems. In AI-powered healthcare, patients are more often than not unaware of the degree to which their care is governed by AI. Inadequately informing patients about how AI systems operate and how they influence clinical decision-making undermines their ability to give informed consent. Patients and healthcare professionals alike often find the complexity of AI systems difficult to comprehend, which adds a further obstacle to obtaining informed consent. This situation has led to calls for clearer channels of communication about AI involvement in patient care, so that the patient is given all the relevant information about risks and benefits.

The ethical and legal quandaries raised by AI in healthcare call for reforms that keep patient safety and equity at the forefront of the development, implementation, and monitoring of AI tools. In the absence of specific and lucid guidelines from regulatory authorities on clinical AI, on liability for AI-related decisions, on the enforcement of transparency, on principles for avoiding bias, and on methods of ensuring accountability, the intrusion of AI into medicine is likely to do more harm than good, further eroding public trust and deepening healthcare disparities.

In conclusion, while AI will continue to evolve in healthcare, the associated ethical and legal dilemmas will need to be resolved. Through legislation, transparency, and accountability, the healthcare industry can protect patient rights and uphold the ethical and responsible use of AI while allowing the technology to be harnessed in the service of medicine. These challenges must, however, be met with new and imaginative ways of constraining unethical practice, lest a technology dedicated to doing good be turned into a source of harm.

## IV. AI AND HEALTHCARE: THE ROLE OF TRANSPARENCY AND EXPLAINABILITY

In modern medicine, clinical decision-making is constantly evolving with the introduction of novel technologies such as artificial intelligence (AI). As AI reshapes diagnostics, therapeutics, and the management of patients, it becomes extremely important that AI systems are not just efficient but also clear and justifiable in how they reach decisions. Explainable Artificial Intelligence (XAI) is a framework aimed at the "black-box" problem of AI systems, here in medicine, with the purpose of clarifying the steps taken and the decision reached. That clarity matters for the central issues in the field: ethical and legal responsibility, patient autonomy, and informed consent.

AI is employed widely in medicine in areas such as medical imaging, predictive analytics, and patient monitoring. In these areas, AI systems make intricate decisions that often leave healthcare professionals baffled, which makes them reluctant to embrace the technology fully. Explainable AI can change this if it illuminates the reasoning of AI models so that a decision can be explained and defended. This is extremely valuable in medicine, where a wrong decision can cause significant harm to patients, especially in life-threatening disease. For example, clinicians may hesitate to use a treatment plan that an AI formulates without giving reasons for its conclusion. XAI addresses this by allowing clinicians to assess the AI's reasoning and judge its usefulness for themselves, instead of having to trust a conclusion they cannot examine (Fahad et al., 2024).

Table 3: Case Studies and Real-World Examples of AI Accountability in Healthcare

<table><tr><td>Case Study/Example</td><td>AI System Used</td><td>Ethical Issues</td><td>Legal Implications</td><td>Outcome/Impact</td></tr><tr><td>AI in Diagnostic Imaging</td><td>AI algorithms for diagnosing cancer</td><td>Bias in algorithms, lack of transparency in decision-making</td><td>Accountability for misdiagnosis, liability for harm due to inaccurate AI results</td><td>Increased scrutiny on AI in diagnostic processes, calls for explainability in AI</td></tr><tr><td>AI in Predictive Analytics</td><td>AI systems predicting patient deterioration</td><td>Bias against marginalized groups, patient autonomy concerns</td><td>GDPR compliance, need for patient consent, transparency requirements</td><td>Identification of potential disparities, highlighted need for fairer models</td></tr><tr><td>AI-Assisted Surgery</td><td>Robotic surgery systems powered by AI</td><td>Responsibility for errors, bias in procedural recommendations</td><td>Legal responsibility for AI errors during surgery, ethical concerns regarding trust</td><td>Enhanced precision, but legal debates on accountability after complications</td></tr></table>

The use of AI in clinical decision-making further underscores the need for explainable and transparent AI as a condition of informed consent. Patients undergoing treatment may be the subject of decisions made by AI, and they have a right to know what influence AI is having on decisions about their treatment and how it is being used for their benefit. Without that knowledge, patients cannot make properly informed decisions, and they may feel dejected by their apparent lack of control. Patients are also likely to feel suspicion or distrust toward the technology if systems operate in a "black-box" mode without explaining the logic of their decisions (Smith, 2021). Patients need to understand the logic and reasoning behind an AI system's decisions if their autonomy is to be respected and their own decision-making improved.

Attaining transparency in AI systems is difficult. The difficulty is most pronounced for deep learning models, a family of opaque algorithms that now forms part of clinical practice. These models excel at pattern recognition across large datasets and can flag findings that medical staff might miss, but the steps by which they reach a conclusion are often left unexplained. Unlike traditional rule-based AI systems, deep learning models arrive at conclusions through several layers of processing, forming complex structures whose reasoning is very hard to pinpoint (Gupta, 2025). This opacity is a major issue in healthcare, where trust in the system's reasoning is paramount. As a matter of principle, the system must also be trustworthy and patient-centric, and clinical ethics must be observed. There is also a tension between performance and explainability: the more complex an AI system is, the less visible its reasoning and rationale are to a human observer, so prediction and diagnosis with complex AI systems can be a challenge. At the other end of the spectrum are models that give up some predictive accuracy in order to make their reasoning visible. This creates a dilemma between the explainability of a system and the performance required of it, and defaulting to the more explainable system is not always the rational choice. Performance and explainability, both of which ethical and legal guidelines call for, are independent properties. Paradoxically, even when models are made more interpretable, their explanations may remain unintelligible to clinicians who have had no training in reading them. Transparent AI therefore calls for a deliberate strategy that combines technical measures with sustained investment in training healthcare professionals.

Improving the transparency of AI systems in healthcare can foster a positive relationship between patients and the technology being used. Patients and clinicians need confidence that the AI systems being deployed are both equitable and trustworthy. Patients have been reported to place more trust in healthcare systems and the AI technology they use when those systems are transparent and explainable (Gerke et al., 2020). Trust in the healthcare system is itself a major determinant of engagement with, and adherence to, treatment regimens, and so has a positive effect on patient health outcomes. Moreover, the more healthcare professionals trust AI systems, the more willing they will be to use AI outputs in their decisions, which can improve the quality of care provided to patients.

That said, however useful the technology is, transparency is only one of the factors that foster trust in a system. AI systems must also be designed so that they do not embed systemic inequities that compromise fairness. An AI system may be explainable, yet if it has been trained on biased data it may still lead to unjust treatment. For instance, one study found that a specific AI system for healthcare decision-making produced more inaccurate outputs for Black patients than for white patients, putting people at risk (Smith, 2021). Addressing this issue requires not just making AI systems more explainable, but also assessing their fairness and impartiality. Nasir et al. (2025) explain how researchers are striving to shape AI technologies that can offer explanations without deepening biases, so that AI can be trusted to render fair decisions across varied patient populations.

In the end, the trustworthiness of AI systems hinges on their ability to explain themselves and offer justifiable reasoning for their outputs. This capability can make care providers more willing to integrate AI technologies into their practice and, in turn, strengthen patients' confidence in the care they receive. That willingness also marks a step toward closer collaboration between medical professionals and AI developers. The future of AI in medicine will depend heavily on explainable AI (XAI) that upholds ethical standards and is used responsibly.

## V. ACCOUNTABILITY FRAMEWORKS AND RECOMMENDATIONS

With the deployment of artificial intelligence (AI) systems in direct patient care, there is an urgent need for clear accountability frameworks that support ethical reasoning and patient safety. AI decision-making, especially in clinical settings, requires fresh approaches to accountability that are both just and transparent. Responsibility for AI in healthcare needs to be defined and allocated among developers, healthcare providers, and patients. A reasoned allocation of responsibility helps to address ethical issues such as patient autonomy and legal issues such as liability for negligence.

The shared accountability model for AI in healthcare distributes accountability among all three parties: healthcare providers, developers, and patients. Developers owe it to society to ensure that AI is designed within ethical constraints, which include fairness, reliability, and transparency (Fahad et al., 2024). Healthcare providers are responsible for legal and ethical compliance when AI is applied clinically. Under this model, healthcare professionals retain the final say over, and responsibility for, patient care, irrespective of AI assistance. Governance also extends to patients, who have a fundamental right to decide whether AI may be used in their care. By dividing tasks among the parties, shared accountability encourages cooperation and helps ensure that AI is deployed responsibly.

Ethical boundaries remain necessary when AI is integrated into healthcare. These boundaries protect the welfare of patients and uphold ethical frameworks. One important element is informed consent. As the technology takes a larger role in decision-making, patients need to understand how artificial intelligence influences their treatment plans. Patients need to be made aware of the "AI paradox", and their questions and concerns about the risks and advantages of AI need to be addressed before treatment decisions are made. This shift in how informed consent is practiced is a necessary safeguard for patient autonomy.

Another ethical boundary for AI in healthcare concerns developing and instituting policies on errors associated with the use of AI. While artificial intelligence can greatly improve clinical decision-making, it is not free of mistakes. Diagnostic and therapeutic AI errors have to be deliberately addressed in order to protect patient safety. Healthcare institutions ought to establish mechanisms for tracking, reporting, and correcting AI errors. In addition, when mistakes happen, responsibility should be assigned to a specific party. Developers should be answerable for their designs, and healthcare providers for proper clinical conduct, if AI systems do not perform as intended or exhibit biases (Smith, 2021).
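The tracking and reporting mechanism described above could be sketched, under stated assumptions, as a small incident registry. Everything in this example is hypothetical and is not prescribed by the paper or by any regulation: the class names `AIIncident` and `IncidentRegistry`, the field names, and the two responsibility categories are chosen only to illustrate logging an error, attributing it to a specific party, and recording its correction.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class ResponsibleParty(Enum):
    # Hypothetical categories mirroring the two parties named in the text.
    DEVELOPER = "developer"
    PROVIDER = "healthcare provider"


@dataclass
class AIIncident:
    system: str                    # name of the AI system involved (illustrative)
    description: str               # what went wrong
    responsible: ResponsibleParty  # party answerable for the error
    reported_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )
    corrected: bool = False        # set once the error has been addressed


class IncidentRegistry:
    """In-memory log of AI errors; a real system would persist and audit these."""

    def __init__(self):
        self._incidents = []

    def report(self, system, description, responsible):
        """Record a new incident and return it."""
        incident = AIIncident(system, description, responsible)
        self._incidents.append(incident)
        return incident

    def mark_corrected(self, incident):
        """Flag an incident as corrected."""
        incident.corrected = True

    def open_incidents(self, responsible=None):
        """List uncorrected incidents, optionally filtered by responsible party."""
        return [
            i for i in self._incidents
            if not i.corrected
            and (responsible is None or i.responsible is responsible)
        ]
```

An institution could call `report(...)` whenever a clinician flags a faulty output and review `open_incidents(ResponsibleParty.DEVELOPER)` to see which errors still await a fix from the vendor. How responsibility is actually assigned in a given case remains a legal and clinical judgment that code of this kind can record but not decide.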

Technological measures, as well as ethical considerations, can foster responsibility for AI in healthcare. AI systems should be developed so they can be explained and clinicians can follow the rationale behind the decisions made. Explainable AI (XAI) allows healthcare providers and patients to understand the recommendation and the rationale behind it. This understanding is essential for clinicians to be able to judge the AI outputs and decide on proper actions. Moreover, AI algorithms need to be systematically evaluated to ensure that they do not harbor biases and that they can make the right decisions equitably among different patients (Gerke et al., 2020).
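One way the systematic bias evaluation mentioned above might look in practice is a per-group comparison of error rates. The sketch below is illustrative only: the paper does not specify a metric, so the choice of false negative rate, the function names, and the toy records are all assumptions made for this example.

```python
from collections import defaultdict


def false_negative_rates(records):
    """Return the false negative rate for each patient group.

    Each record is a (group, actual, predicted) tuple, where `actual` and
    `predicted` are booleans indicating whether the condition is present.
    Groups with no actual positives are omitted from the result.
    """
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, actual, predicted in records:
        if actual:
            positives[group] += 1
            if not predicted:
                missed[group] += 1
    return {group: missed[group] / positives[group] for group in positives}


def largest_gap(rates):
    """Difference between the highest and lowest per-group rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit data: group label, true condition, model prediction.
records = [
    ("A", True, True), ("A", True, True), ("A", True, True), ("A", True, False),
    ("B", True, True), ("B", True, True), ("B", True, False), ("B", True, False),
    ("A", False, False), ("B", False, True),
]

rates = false_negative_rates(records)
print(rates)               # per-group miss rates
print(largest_gap(rates))  # disparity between groups
```

In this toy data the model misses one of four true cases in group A and two of four in group B, so an audit would flag a gap of 0.25 in false negative rate. Which metric and which threshold count as acceptable is a clinical and policy decision rather than something the code can settle.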

With regard to legal frameworks, the General Data Protection Regulation (GDPR) and HIPAA address data privacy but are silent on the particular problems of AI in healthcare. More recent regulations, such as the EU's AI Act, try to fill the gaps in oversight of AI systems by addressing their safety, transparency, and fairness (Schmidt et al., 2024). However, these frameworks will need to be updated as advances in AI keep shifting in unforeseeable directions.

In conclusion, accountability is an increasingly pressing concern as AI technology advances, and it calls for far-reaching ethical and legislative changes in the healthcare industry. The measures proposed for AI in clinical practice, namely models of shared accountability, streamlined informed consent procedures, and policies for correcting AI system errors, offer greater transparency. Furthermore, explainable AI and regulatory revisions will help ensure consistent regard for fairness and patient safety. In this way, the healthcare industry can benefit from AI technology while sustaining the trust of patients.

## VI. CONCLUSION

As AI has been introduced into different industries and gradually implemented in the healthcare sector, it has been accompanied by a growing number of ethical and legal problems. This research has investigated the importance of allocating responsibility for decisions made by AI, particularly in the medical field. The paper has discussed, among other things, the potential for bias in AI systems and the resulting inequitable and discriminatory treatment of certain vulnerable groups. Moreover, even though AI in healthcare is already fairly advanced, the field is still grappling with issues concerning transparency and patients' right to make decisions. Most of these issues stem from the absence of a clear conceptual framework or legal parameters that would make it feasible to identify who is responsible when systems make harmful or erroneous decisions.

These issues make it all the more important to provide a framework ensuring that AI designers, in cooperation with healthcare practitioners and patients, bear a reasonable share of responsibility for the decisions made, and that ethical principles are upheld in the application of healthcare AI.

The importance and implications of these findings are multidisciplinary. Healthcare practitioners are expected not to treat AI as a merely decorative tool, but to appreciate that AI is expected to perform rational and intelligent tasks alongside clinical experts. AI applies technology in transformative ways, so its positive and more targeted use in patient care is equally important. More research is needed on regulatory frameworks that evolve with AI technologies and on how they can be integrated in areas such as automation and machine learning. More effort is also needed to understand how ethical checks can be automated and how ethics can be integrated into AI systems at the design stage.

Technological adoption in healthcare systems is often delayed, which is why strengthening the socio-political and economic systems that drive adoption will also drive change in health. More democratic systems promote healthy competition, which is beneficial in expanding private healthcare systems. Thus, more policies that encourage diversity in socio-political and economic systems are needed. Investing in these spheres will be imperative to realize the full potential of AI-driven change in the industry while protecting the rights and safety of patients.

As AI becomes more prominent in the health sector, the tools and frameworks that govern it will need to be structured more deliberately. Doing so will also raise the ethical and legal standards that apply when these technologies are pursued.


References


Ahmed Abdel, Qader Mohammed, S Mahmoud Moussa Osman, Y Ibrahim, A Shaban, M (2023). Ethical and regulatory considerations in the use of AI and machine learning in nursing: A systematic review.

Anwar Albalawi, Mohammad Yassen, Khaled Almuraydhi, Ahmed Althobaiti, Hadeel Alzahrani, Khalid Alqahtani (2024). Ethical Obligations and Patient Consent in the Integration of Artificial Intelligence in Clinical Decision-Making.

H Alzahrani, Others (2022). Legal and ethical consideration in artificial intelligence in healthcare: Who takes responsibility?.

P Bagave, M Westberg, M Janssen, A Ding (2025). Accountability framework for healthcare AI systems: Towards joint accountability in decision making.

J Bentham (1789). An introduction to the principles of morals and legislation.

F Busch, J Kather, C Johner, M Moser, D Truhn, L Adams, K Bressem (2024). Navigating the European Union artificial intelligence act for healthcare.

L Floridi, A Jobin, Z Obermeyer, S Gerke (2024). The ethics of artificial intelligence systems in healthcare and medicine: From a local to a global perspective, and back.

A Fahad, Y Hamzah, A Mohammed, A Dhaifallah, H Hassan, A Mohammad, Others (2024). Ethical obligations and patient consent in the integration of artificial intelligence in clinical decision-making.

S Gerke, T Minssen, G Cohen (2020). Ethical and legal challenges of artificial intelligence-driven.

Nikhil Gupta (2025). Explainable AI for Regulatory Compliance in Financial and Healthcare Sectors: A comprehensive review.

Thomas Grote, Philipp Berens (2019). On the ethics of algorithmic decision-making in healthcare.

M Hoque, M Ali, S Ferdausi, K Fatema, M Mahmud (2025). Leveraging machine learning and artificial intelligence to revolutionize transparency and accountability in healthcare billing practices across the United States.

Sasa Jenko, Elsa Papadopoulou, Vikas Kumar, Steven Overman, Katarina Krepelkova, Joseph Wilson, Elizabeth Dunbar, Carolin Spice, Themis Exarchos (2025). Artificial Intelligence in Healthcare: How to Develop and Implement Safe, Ethical and Trustworthy AI Systems.

Mohammad Nasir, Kaif Siddiqui, Samreen Ahmed (2025). Ethical-legal implications of AI-powered healthcare in critical perspective.

Tuan Pham (2025). Ethical and legal considerations in healthcare AI: innovation and policy for safe and fair use.

Eleanor Roy, Sara Malafa, Lina Adwer, Houda Tabache, Tanishqa Sheth, Vasudha Mishra, Moaz Abouelmagd, Andrea Cushieri, Sajjad Ahmed Khan, Mihnea-Alexandru Gaman, Juan Puyana, Francisco Bonilla-Escobar (2024). Artificial Intelligence in Healthcare: Medical Students' Perspectives on Balancing Innovation, Ethics, and Patient-Centered Care.

Jelena Schmidt, Nienke Schutte, Stefan Buttigieg, David Novillo-Ortiz, Eric Sutherland, Michael Anderson, Bart De Witte, Michael Peolsson, Brigid Unim, Milena Pavlova, Ariel Stern, Elias Mossialos, Robin Van Kessel (2024). Mapping the regulatory landscape for artificial intelligence in health within the European Union.

Helen Smith (2021). Clinical AI: opacity, accountability, responsibility and liability.

H Smith, Others (2023). AI and machine learning ethics, law, diversity, and global impact.

Sudhi Sinha, Young Lee (2024). Challenges with developing and deploying AI models and applications in industrial systems.

Daiju Ueda, Taichi Kakinuma, Shohei Fujita, Koji Kamagata, Yasutaka Fushimi, Rintaro Ito, Yusuke Matsui, Taiki Nozaki, Takeshi Nakaura, Noriyuki Fujima, Fuminari Tatsugami, Masahiro Yanagawa, Kenji Hirata, Akira Yamada, Takahiro Tsuboyama, Mariko Kawamura, Tomoyuki Fujioka, Shinji Naganawa (2023). Fairness of artificial intelligence in healthcare: review and recommendations.

B Yadav Kasula (2021). Ethical and regulatory considerations in AI-driven healthcare solutions.

V Xafis (2019). AI-assisted decision-making in healthcare: The application of an ethics framework for big data in health and research.

No ethics committee approval was required for this article type.

Data Availability

Not applicable for this article.

How to Cite This Article

Uma Shanmugam. 2026. “Ethical and Legal Accountability of AI Decisions in Clinical Care”. Global Journal of Computer Science and Technology - C: Software & Data Engineering GJCST-C Volume 25 (GJCST Volume 25 Issue C1): .
